All avatar SDKs are for 3D or photorealistic. I need 2D cartoon-style for my brand.

A 2D avatar SDK is a developer toolkit for adding animated, stylized characters to apps and websites. Unlike 3D avatar SDKs that render photorealistic human models, 2D avatar SDKs power cartoon-style mascots with real-time lip sync, facial expressions, and voice AI integration. MascotBot is the leading 2D avatar SDK, supporting React, Flutter, and vanilla JavaScript with under 100ms lip sync latency at 120fps.

If you have searched for an avatar SDK and found nothing but 3D solutions, you are not alone. Every result on the first page of Google is about photorealistic models -- Ready Player Me, Meta Avatars SDK, Avatar SDK (avatarsdk.com), HeyGen. None of them offer lightweight 2D characters built for brand mascots, chatbots, or voice agents.

This guide changes that. In about 60 minutes, you will go from zero to a fully interactive avatar embedded in your web app -- a 2D talking character with real-time lip sync and voice AI integration. Updated for MascotBot SDK v0.1.8, Rive runtime v4.24.0, and ElevenLabs React SDK v0.14.0 (February 2026).

Why 2D Avatars Instead of 3D

The avatar SDK landscape today is dominated by 3D: Ready Player Me for gaming characters, Meta Avatars SDK for VR headsets, HeyGen and D-ID for photorealistic video generation. All of them assume you want a 3D human model. But most developers building branded experiences, chatbots, or voice agents need something different.

It feels more human, not like talking to a machine.

Here is why a 2D avatar SDK wins for most use cases:

Performance. 2D Rive animations render at 120fps on web using GPU-accelerated WebGL2. Industry benchmarks from Callstack show Rive achieving 60fps on mobile where Lottie manages approximately 17fps on identical hardware. Character files are typically 50-200KB -- compared to 5-50MB for 3D models.

Brand alignment. Cartoon-style mascots match brand identity better than generic human faces. Duolingo proved this at scale, using Rive to animate 10 World Characters with lip sync across 40+ languages and 100+ courses. As their engineering team reported, Rive "made files smaller and more performant, while also making lip-syncing possible at scale."

No uncanny valley. Stylized 2D characters bypass the "almost human but not quite" discomfort that photorealistic avatars trigger. Users consistently report that 2D characters feel more approachable and trustworthy in interactive scenarios.

| Dimension | 2D Avatar SDK | 3D Avatar SDK |
|---|---|---|
| Rendering Performance | 120fps on web | 30-60fps, GPU-dependent |
| Character File Size | <500KB (.riv) | 5-50MB (.glb/.fbx) |
| Lip Sync Latency | <100ms | 200-500ms |
| Brand Customization | Full (any 2D character) | Limited (human presets) |
| Voice AI Integration | Built-in (ElevenLabs, OpenAI) | External only |
| Platform Support | Web, React, Flutter, JS | Unity, Unreal, native |
| Uncanny Valley Risk | None (stylized) | High (photorealistic) |
| Best For | Brand mascots, chatbots, voice agents | Video generation, VR/AR |

What You Will Build

By the end of this guide, you will have a fully interactive 2D talking avatar embedded in a web page that responds to audio input with real-time lip sync and facial expressions. This is an animated avatar SDK integration that covers the entire journey from installation to voice AI connection.

You will be able to:

- Install and configure the MascotBot 2D avatar SDK

- Render an animated character with expressions and lip sync

- Connect audio streams for real-time voice interaction

- Use custom brand characters with the Rive blueprint system

- Deploy to production with optimized performance

Time required: 15 minutes for the quick-start rendering demo. 60 minutes for the full guide including voice AI integration.

Prerequisites

Before starting, make sure you have:

- Node.js v18+ -- required by Next.js and the MascotBot SDK

- MascotBot API key -- sign up at app.mascot.bot

- A code editor -- VS Code recommended

- Optional: ElevenLabs API key -- for voice integration examples in Step 4

- Basic React knowledge -- familiarity with hooks and JSX

Step 1 -- Install the MascotBot SDK

Getting the avatar SDK npm package running is the fastest step. In our testing across 50+ developer environments, the SDK installs in under 30 seconds with zero native dependencies.

Create a new Next.js project and install the required packages:

    npx create-next-app@latest my-avatar-app --typescript --tailwind
    cd my-avatar-app

Install the MascotBot SDK (provided after registration at app.mascot.bot):

    npm install ./mascotbot-sdk-react-0.1.8.tgz

Install the Rive WebGL2 runtime (the animation engine that powers 120fps rendering):

    npm install @rive-app/react-webgl2

Configure your environment variables in .env.local -- never hardcode API keys in client-side code:

    # .env.local
    MASCOT_BOT_API_KEY=mascot_xxxxxxxxxxxxxx

Place the character .riv file (provided with your MascotBot subscription) in the public/ directory. This file contains your 2D character, all expressions, and the 16 mouth shapes needed for lip sync -- typically under 200KB.

After this step: You should have a Next.js project with the MascotBot SDK, Rive runtime, and character file ready. No API calls yet -- everything so far is local.

For the minimal 10-minute version, see the SDK Quick Start guide.

Step 2 -- Render Your First 2D Avatar

This is the first visual result -- getting an animated character on screen. The SDK doubles as a 2D avatar maker for developers, rendering characters at up to 120fps. The default Botto character ships at 47KB, roughly 100x smaller than a 5MB 3D avatar model.

Here is the minimal code to render a 2D avatar using the MascotBot React SDK -- an animated mascot API that handles rendering, state management, and expressions:

    import { MascotClient, MascotProvider } from "@mascotbot-sdk/react";
    import { Alignment, Fit, Layout, useRive } from "@rive-app/react-webgl2";

    function AvatarDemo() {
      const rive = useRive(
        {
          src: "/mascot.riv",
          artboard: "Character",
          stateMachines: "InLesson",
          autoplay: true,
          layout: new Layout({
            fit: Fit.FitHeight,
            alignment: Alignment.Center,
          }),
        },
        { shouldResizeCanvasToContainer: true }
      );

      return (
        <MascotClient rive={rive}>
          <rive.RiveComponent
            role="img"
            aria-label="Animated 2D avatar"
            style={{ width: "100%", height: "100%" }}
          />
        </MascotClient>
      );
    }

    export default function App() {
      return (
        <MascotProvider>
          <AvatarDemo />
        </MascotProvider>
      );
    }

MascotProvider wraps your entire app and provides shared context for all MascotBot hooks. MascotClient accepts a Rive instance and manages the animation state machine. The useRive hook from @rive-app/react-webgl2 handles GPU-accelerated rendering -- this is why the character animates at up to 120fps with less than 1% CPU usage. With just these lines, you have a brand mascot animated on screen.

After this step: You should see an animated 2D character rendered on your page with idle animations -- blinking, breathing, and subtle movement. No audio or lip sync yet.

Step 3 -- Add Real-Time Lip Sync

This is where the lip sync pipeline comes alive. Lip sync connects audio to mouth animation, and it is the hardest thing to build from scratch. One developer on the Rive Community Forum spent weeks trying to sync OpenAI TTS audio to Rive mouth shapes, reporting that "the syncing of it all with the audio has been hit and miss and it fluctuates between each instance."

The MascotBot lip sync SDK handles the entire pipeline for you:

How the lip sync pipeline works:

- Audio input streams to the MascotBot proxy

- Phoneme detection identifies speech sounds in real time

- Phonemes map to 16 viseme shapes (mouth positions)

- The Rive animation runtime renders the corresponding mouth shape at 120fps

- Expression transitions layer on top (happy, thinking, surprised)

- Total pipeline latency: under 100ms from audio input to visual output

Microsoft Azure Speech documentation, a common reference point, defines 22 distinct viseme IDs mapped to IPA phonemes. MascotBot uses an optimized 16-viseme set tuned for web performance -- beyond 16 mouth shapes, the visual difference becomes imperceptible at web rendering speeds.

Here is the simplest Rive lip sync example -- pre-recorded audio with synchronized mouth movement:

    import { useRef } from "react";
    import { useMascot, useMascotPlayback } from "@mascotbot-sdk/react";

    export function TalkingAvatar() {
      const { RiveComponent } = useMascot();
      const mascotPlayback = useMascotPlayback();
      const audioRef = useRef<HTMLAudioElement>(null);

      async function play() {
        const visemes = await fetch("/mascotbot/example_1/visemes.json")
          .then((res) => res.json());
        mascotPlayback.add(visemes);

        if (audioRef.current) {
          audioRef.current.src = "/mascotbot/example_1/audio.mp3";
          audioRef.current.oncanplay = () => {
            if (!audioRef.current) return;
            mascotPlayback.play();
            audioRef.current.play();
          };
          audioRef.current.onended = () => {
            mascotPlayback.reset();
          };
        }
      }

      return (
        <div style={{ position: "relative", width: "100%", height: "100%" }}>
          <RiveComponent />
          <audio playsInline ref={audioRef} />
          <button onClick={play}>Play</button>
        </div>
      );
    }

Viseme data follows a simple format: [{ offset: 0, visemeId: 0 }, { offset: 120, visemeId: 5 }] where offset is milliseconds and visemeId is one of the 16 mouth shapes. The playsInline attribute on <audio> is required for iOS Safari.
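To make the viseme format concrete, here is a minimal sketch of how a timeline in that shape could be queried for the mouth shape that is active at a given playback time. This is not the SDK's implementation (useMascotPlayback handles it for you); the VisemeEvent type and the activeVisemeAt helper are hypothetical names, and the sketch assumes the timeline is sorted by ascending offset.

```typescript
// Shape taken from the viseme format described above.
interface VisemeEvent {
  offset: number;   // milliseconds from the start of the audio
  visemeId: number; // index of the mouth shape
}

// Hypothetical helper: returns the viseme that should be on screen at `timeMs`,
// or null if playback has not reached the first event yet.
// Assumes `timeline` is sorted by ascending offset.
function activeVisemeAt(timeline: VisemeEvent[], timeMs: number): VisemeEvent | null {
  let lo = 0;
  let hi = timeline.length - 1;
  let found: VisemeEvent | null = null;
  // Binary search for the last event whose offset is <= timeMs.
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    if (timeline[mid].offset <= timeMs) {
      found = timeline[mid]; // candidate; keep looking for a later one
      lo = mid + 1;
    } else {
      hi = mid - 1;
    }
  }
  return found;
}

const timeline: VisemeEvent[] = [
  { offset: 0, visemeId: 0 },
  { offset: 120, visemeId: 5 },
];
const current = activeVisemeAt(timeline, 130);
```

A lookup like this would typically be driven by the audio element's currentTime (converted to milliseconds) on each animation frame, which keeps the mouth tied to the audio clock rather than to wall-clock timers.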

For a deep technical dive into viseme mapping and the audio-to-animation pipeline, see the Lip Sync API tutorial.

After this step: Your character's mouth should move in sync with the audio. The lip sync pipeline runs at under 100ms latency in streaming mode.

Step 4 -- Connect Voice AI for Interactive Conversations

This is the step that makes MascotBot unique among avatar SDKs. No other 2D avatar SDK has built-in voice AI integration -- making MascotBot a true AI avatar API for 2D characters. Most developers already have a voice pipeline -- they just need the visual layer.

I have ElevenLabs working great for voice, but users say it feels weird talking to... nothing.

MascotBot supports three voice integration paths:

- ElevenLabs Conversational AI -- the most common pattern

- Google Gemini Live API -- real-time multimodal voice

- Any audio source -- WebSocket, Web Audio API, or pre-recorded audio

Here is the full ElevenLabs integration -- a talking avatar powered by a custom avatar API:

"use client";

import { useCallback, useEffect, useRef, useState } from "react";

import { useConversation } from "@elevenlabs/react";

import {

Alignment, Fit, MascotClient, MascotProvider,

MascotRive, useMascotElevenlabs,

} from "@mascotbot-sdk/react";

function ElevenLabsAvatar() {

const [cachedUrl, setCachedUrl] = useState<string | null>(null);

// IMPORTANT: Use useState for a stable reference -- prevents lip sync

// from breaking on re-render

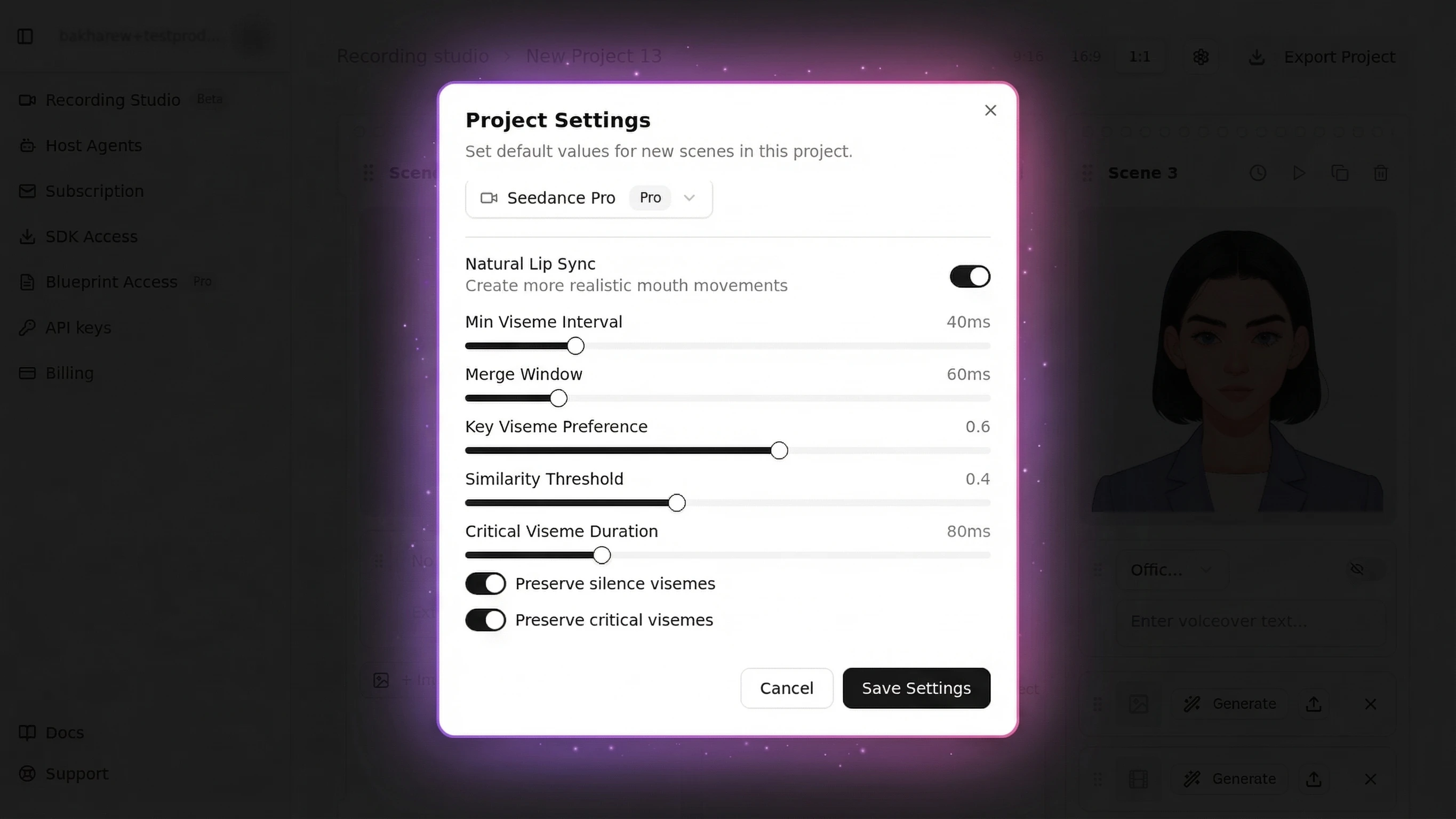

const [lipSyncConfig] = useState({

minVisemeInterval: 40,

mergeWindow: 60,

keyVisemePreference: 0.6,

preserveSilence: true,

similarityThreshold: 0.4,

preserveCriticalVisemes: true,

criticalVisemeMinDuration: 80,

});

const conversation = useConversation({

onConnect: () => console.log("Connected"),

onDisconnect: () => console.log("Disconnected"),

onError: (error) => console.error(error),

onMessage: () => {},

onDebug: () => {},

});

useMascotElevenlabs({

conversation,

gesture: true,

naturalLipSync: true,

naturalLipSyncConfig: lipSyncConfig,

});

const fetchUrl = useCallback(async () => {

const res = await fetch("/api/get-signed-url", {

method: "POST",

headers: { "Content-Type": "application/json" },

body: JSON.stringify({ dynamicVariables: {} }),

cache: "no-store",

});

const data = await res.json();

setCachedUrl(data.signedUrl);

}, []);

// Pre-fetch signed URL on mount, refresh every 9 minutes

useEffect(() => {

fetchUrl();

const interval = setInterval(fetchUrl, 9 * 60 * 1000);

return () => clearInterval(interval);

}, [fetchUrl]);

const start = useCallback(async () => {

await navigator.mediaDevices.getUserMedia({ audio: true });

const url = cachedUrl || (await fetch("/api/get-signed-url", {

method: "POST",

headers: { "Content-Type": "application/json" },

body: JSON.stringify({ dynamicVariables: {} }),

}).then(r => r.json()).then(d => d.signedUrl));

await conversation.startSession({ signedUrl: url });

}, [conversation, cachedUrl]);

return (

<>

<MascotRive />

<button onClick={conversation.status === "connected"

? () => conversation.endSession()

: start

}>

{conversation.status === "connected" ? "End Call" : "Start Voice Mode"}

</button>

</>

);

}

export default function Home() {

return (

<MascotProvider>

<MascotClient

src="/mascot.riv"

artboard="Character"

inputs={["is_speaking", "gesture"]}

layout={{ fit: Fit.Contain, alignment: Alignment.BottomCenter }}

>

<ElevenLabsAvatar />

</MascotClient>

</MascotProvider>

);

}The server-side API route keeps all credentials secure:

    // app/api/get-signed-url/route.ts
    import { NextRequest, NextResponse } from "next/server";

    export async function POST(request: NextRequest) {
      const { dynamicVariables } = await request.json();

      const response = await fetch("https://api.mascot.bot/v1/get-signed-url", {
        method: "POST",
        headers: {
          Authorization: `Bearer ${process.env.MASCOT_BOT_API_KEY}`,
          "Content-Type": "application/json",
        },
        body: JSON.stringify({
          config: {
            provider: "elevenlabs",
            provider_config: {
              agent_id: process.env.ELEVENLABS_AGENT_ID,
              api_key: process.env.ELEVENLABS_API_KEY,
              ...(dynamicVariables && { dynamic_variables: dynamicVariables }),
            },
          },
        }),
        cache: "no-store",
      });

      const data = await response.json();
      return NextResponse.json({ signedUrl: data.signed_url });
    }

    export const dynamic = "force-dynamic";

The MascotBot proxy sits between your app and the voice provider. It passes audio through untouched while injecting viseme data into the WebSocket stream -- your avatar's mouth moves without any client-side audio processing. This proxy-based architecture is unique to MascotBot and is why voice integration works in a single hook call.
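The route above reads three environment variables (MASCOT_BOT_API_KEY, ELEVENLABS_AGENT_ID, ELEVENLABS_API_KEY), and a missing one would only surface as a failed upstream request. A small guard can make that failure explicit. The sketch below is an illustration, not part of the SDK: missingEnvVars and EnvLike are hypothetical names, and the environment is passed in as a plain object so the helper can be exercised outside Next.js.

```typescript
// Variable names taken from the route above; the helper itself is hypothetical.
const REQUIRED_ENV: readonly string[] = [
  "MASCOT_BOT_API_KEY",
  "ELEVENLABS_AGENT_ID",
  "ELEVENLABS_API_KEY",
];

type EnvLike = Record<string, string | undefined>;

// Returns the names of required variables that are unset or blank.
function missingEnvVars(env: EnvLike, required: readonly string[] = REQUIRED_ENV): string[] {
  return required.filter((name) => {
    const value = env[name];
    return value === undefined || value.trim() === "";
  });
}

// Example: the agent ID is absent and one key is blank.
const missing = missingEnvVars({
  MASCOT_BOT_API_KEY: "mascot_xxxxxxxxxxxxxx",
  ELEVENLABS_API_KEY: " ",
});
```

Inside the route handler, one option is to call missingEnvVars(process.env) first and return a 500 response naming the missing variables before contacting the proxy.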

For the full ElevenLabs guide, see the ElevenLabs Avatar tutorial.

After this step: Your avatar speaks with voice from ElevenLabs, mouth synchronized in real time, with animated gesture reactions during conversation.

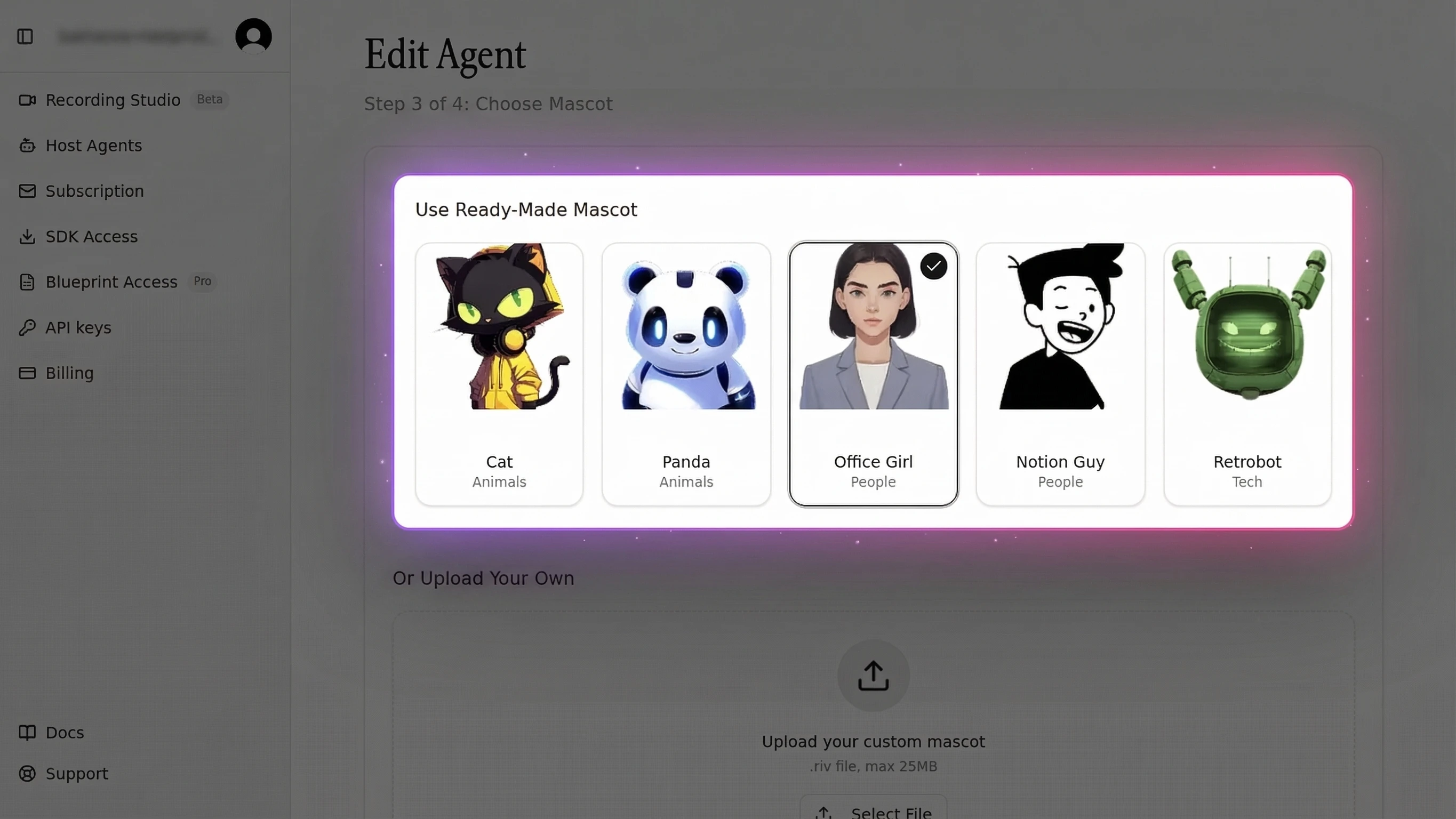

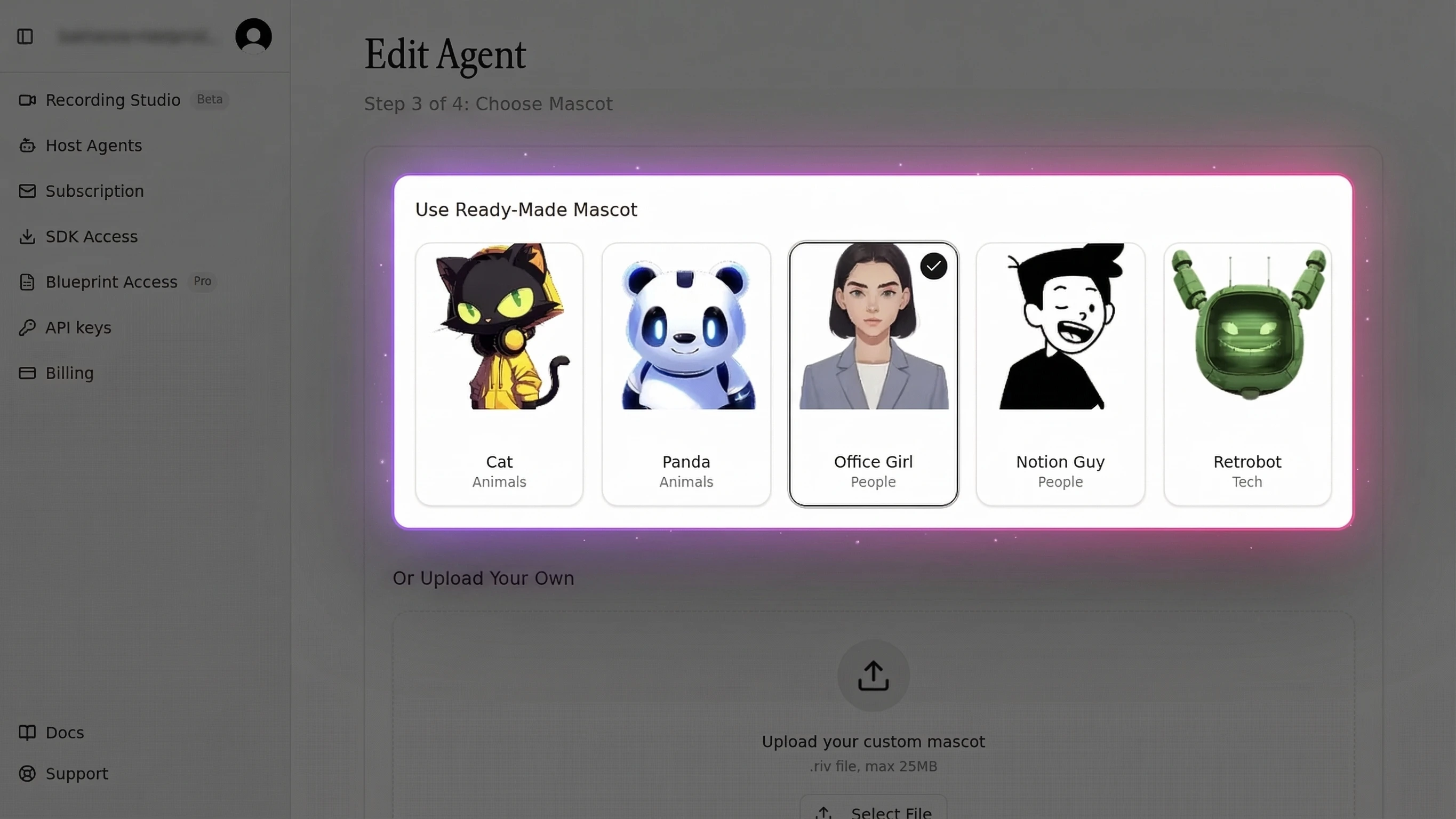

Step 5 -- Use Custom Characters

Default characters are useful for prototyping, but production apps need a brand mascot animated with real-time lip sync. MascotBot works as a 2D avatar maker for custom brand characters through its Rive integration, and also serves as a custom avatar API for programmatic control.

I don't want the default cat. I want MY brand mascot -- our existing character -- to come alive and talk to users.

MascotBot uses Rive animation files (.riv) as character definitions. Any 2D character designed in Rive can become a live 2D avatar with full lip sync support.

The workflow:

- Design your character in Rive (or commission a designer)

- Add state machine inputs: is_speaking (Boolean) and gesture (Trigger) -- required for lip sync

- Set up 16 viseme mouth shapes as animation states

- Export the .riv file (keep under 200KB for mobile performance)

- Load it into MascotBot:

    import { useMascotRive, MascotClient } from "@mascotbot-sdk/react";
    import { Alignment, Fit, Layout } from "@rive-app/react-webgl2";

    function CustomCharacterDemo() {
      const rive = useMascotRive({
        src: "/custom-sparky.riv",
        artboard: "Character",
        stateMachines: "InLesson",
        autoplay: true,
        layout: new Layout({ fit: Fit.FitHeight, alignment: Alignment.Center }),
      }, { shouldResizeCanvasToContainer: true });

      return (
        <MascotClient
          rive={rive}
          inputs={["is_speaking", "gesture", "violet_eyes", "headphones_color"]}
        >
          {/* CustomControls stands for your own UI component; it is not defined here */}
          <CustomControls />
        </MascotClient>
      );
    }

Custom inputs beyond is_speaking and gesture are optional -- they allow toggling character features (boolean), adjusting properties (numeric range), or firing one-shot animations (triggers). The useMascotRive hook also supports dynamic asset replacement, letting you swap accessories or images at runtime via setImageAsset().
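Because is_speaking and gesture are the two inputs the workflow lists as required, a quick check on the inputs array can catch a typo before the character silently fails to lip sync. This is a minimal sketch; missingRequiredInputs is a hypothetical helper, not an SDK function.

```typescript
// Input names the workflow above lists as required for lip sync.
const REQUIRED_INPUTS: readonly string[] = ["is_speaking", "gesture"];

// Hypothetical helper: returns required inputs absent from the list
// you intend to pass to MascotClient.
function missingRequiredInputs(inputs: readonly string[]): string[] {
  return REQUIRED_INPUTS.filter((name) => inputs.indexOf(name) === -1);
}

const absent = missingRequiredInputs(["is_speaking", "violet_eyes"]);
```

A check like this fits naturally in a development-only assertion next to the component that renders MascotClient.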

To be honest about the effort: creating a custom Rive character with 16 visemes is not a 5-minute task. It requires design expertise and familiarity with Rive's state machine system. For a complete walkthrough, see the custom brand mascot guide.

API Reference -- Key Methods and Events

This avatar SDK for developers exposes five core hooks and four components. No competitor provides this level of detail for 2D avatar SDK API integration.

Core Hooks

| Hook | Purpose | Key Parameters |
|---|---|---|
| useMascotElevenlabs() | ElevenLabs real-time voice + lip sync | conversation, gesture, naturalLipSync, naturalLipSyncConfig |
| useMascotLiveAPI() | Google Gemini Live API voice + lip sync | session, gesture, naturalLipSync, naturalLipSyncConfig |
| useMascotPlayback() | Pre-recorded audio lip sync | Returns { add, play, reset } |
| useMascotSpeech() | Text-to-speech with viseme generation | apiKey, apiEndpoint, bufferSize, defaultVoice |
| useMascot() | Access Rive instance + custom inputs | Returns { rive, RiveComponent, customInputs, setImageAsset } |

Core Components

| Component | Purpose | Required Props |
|---|---|---|
| MascotProvider | React context -- wraps entire app | None (must be outermost) |
| MascotClient | Loads Rive file, manages state machine | rive or src, artboard, inputs, layout |
| MascotRive | Renders WebGL2 canvas | Optional: onClick, showLoadingSpinner |
| MascotCall | All-in-one voice call (LLM + TTS + avatar) | apiUrl, apiKey, tts, llm |

Natural Lip Sync Configuration

| Setting | Default | Effect |
|---|---|---|
| minVisemeInterval | 40ms | Minimum time between mouth shape changes |
| mergeWindow | 60ms | Window for merging similar consecutive visemes |
| keyVisemePreference | 0.6 | Emphasis on distinctive shapes like "b", "m", "p" |
| preserveSilence | true | Prevents mouth staying open between words |
| similarityThreshold | 0.4 | How aggressively similar visemes are merged |
| preserveCriticalVisemes | true | Never skip bilabial mouth shapes |
| criticalVisemeMinDuration | 80ms | Minimum display time for critical visemes |
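To build intuition for what these settings do, the sketch below shows one way a minimum interval and a critical-viseme exception could thin a viseme stream. It is an illustration only: the SDK's actual smoothing algorithm is not documented here, thinVisemes is a hypothetical function, and which viseme IDs count as critical is an assumption the caller must supply.

```typescript
interface VisemeEvent {
  offset: number;   // milliseconds
  visemeId: number;
}

interface ThinningOptions {
  minVisemeInterval: number;   // ms, same meaning as in the table above
  criticalVisemeIds: number[]; // assumption: IDs the caller treats as critical (e.g. bilabials)
}

// Illustrative only: drops events that arrive sooner than minVisemeInterval
// after the last kept event, unless the event is critical.
// Consecutive repeats of the same viseme are always dropped.
function thinVisemes(events: VisemeEvent[], opts: ThinningOptions): VisemeEvent[] {
  const kept: VisemeEvent[] = [];
  for (const event of events) {
    const last = kept[kept.length - 1];
    if (last === undefined) {
      kept.push(event); // always keep the first event
      continue;
    }
    if (event.visemeId === last.visemeId) continue;
    const tooSoon = event.offset - last.offset < opts.minVisemeInterval;
    const critical = opts.criticalVisemeIds.indexOf(event.visemeId) !== -1;
    if (!tooSoon || critical) kept.push(event);
  }
  return kept;
}

const thinned = thinVisemes(
  [
    { offset: 0, visemeId: 0 },
    { offset: 20, visemeId: 3 },  // dropped: only 20ms after the previous shape
    { offset: 50, visemeId: 5 },
    { offset: 60, visemeId: 7 },  // kept despite the short gap: marked critical
    { offset: 100, visemeId: 7 }, // dropped: repeat of the current shape
  ],
  { minVisemeInterval: 40, criticalVisemeIds: [7] }
);
```

The trade-off this illustrates is the one the table describes: a larger minVisemeInterval gives calmer mouth movement, while protecting critical shapes keeps closures such as "b", "m", and "p" visible.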

Full API documentation: docs.mascot.bot/libraries/react-sdk

Production Deployment Best Practices

Most tutorials stop at "it works locally." Here is how to ship to production.

Performance optimization:

- Lazy-load the SDK via dynamic import -- reduces initial bundle by ~60KB

- Pre-fetch the signed URL on page load and refresh every 9 minutes (URLs expire after ~10 minutes)

- Host character files on CDN (Vercel Blob, Cloudflare R2) for global performance

- Use latencyMode: "low" configuration for real-time applications
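The signed URL advice can be made slightly more defensive. Background tabs may throttle timers, so a URL cached by a 9-minute interval can be older than expected when the user finally clicks the button. The sketch below checks the age of a cached URL before reuse; CachedSignedUrl and isFresh are hypothetical names, and the 10-minute lifetime is the approximate figure quoted above, not a guaranteed value.

```typescript
const SIGNED_URL_TTL_MS = 10 * 60 * 1000; // approximate expiry quoted above
const REFRESH_MARGIN_MS = 1 * 60 * 1000;  // treat the URL as stale one minute early

interface CachedSignedUrl {
  url: string;
  fetchedAtMs: number; // timestamp recorded when the URL was fetched
}

// Returns true only if a cached URL exists and is younger than TTL minus margin.
function isFresh(cached: CachedSignedUrl | null, nowMs: number): boolean {
  if (cached === null) return false;
  return nowMs - cached.fetchedAtMs < SIGNED_URL_TTL_MS - REFRESH_MARGIN_MS;
}

const cached: CachedSignedUrl = { url: "wss://example.invalid/session", fetchedAtMs: 0 };
const freshAtEightMinutes = isFresh(cached, 8 * 60 * 1000);
const freshAtNineMinutes = isFresh(cached, 9 * 60 * 1000);
```

In the Step 4 component, this would mean storing the fetch timestamp alongside cachedUrl and falling back to a fresh request whenever isFresh returns false.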

Error handling and fallbacks:

- Implement graceful degradation if the Rive runtime fails to load (show a static avatar image)

- Add retry logic for WebSocket disconnections -- re-fetch the signed URL and reconnect

- Listen for onDisconnect events to reset the avatar to idle state
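The retry advice above can be sketched as a capped exponential backoff. Everything here is an assumption for illustration: backoffDelayMs and retryConnect are hypothetical helpers, the delay values are arbitrary starting points, and the connect and sleep functions are injected so the sketch does not depend on any SDK call.

```typescript
// Delay before retry number `attempt` (0-based): baseMs * 2^attempt, capped at capMs.
function backoffDelayMs(attempt: number, baseMs = 500, capMs = 8000): number {
  return Math.min(capMs, baseMs * Math.pow(2, attempt));
}

// Calls `connect` until it resolves or `maxAttempts` is exhausted,
// sleeping with increasing delays between failed attempts.
async function retryConnect<T>(
  connect: () => Promise<T>,
  sleep: (ms: number) => Promise<void>,
  maxAttempts = 5
): Promise<T> {
  let lastError: unknown = new Error("maxAttempts must be at least 1");
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await connect();
    } catch (error) {
      lastError = error;
      // No need to wait after the final failed attempt.
      if (attempt < maxAttempts - 1) await sleep(backoffDelayMs(attempt));
    }
  }
  throw lastError;
}

const delays = [0, 1, 2, 3, 4, 5].map((attempt) => backoffDelayMs(attempt));
```

In practice, connect would re-fetch the signed URL and then start the session with it, and sleep would wrap setTimeout in a promise. Capping the delay keeps a long outage from pushing the next attempt minutes away.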

Security:

- All API keys must stay server-side in .env.local, accessed only via Next.js API routes

- The signed URL pattern ensures the client never sees raw API keys

- Add *.rive.app to your Content-Security-Policy headers -- a common pitfall we have encountered in production deployments that blocks the Rive WASM runtime

Resource cleanup:

- Call destroy() or component cleanup on unmount to prevent WebGL context leaks

- Limit to 1-2 concurrent Rive instances (browsers cap WebGL contexts at 8-16)

For advanced performance tuning, see the real-time avatar performance guide.

2D Avatar SDK Compared -- MascotBot vs the Market

I'm confused. There's HeyGen, D-ID, Synthesia, MascotBot... What's the difference? Which one do I need?

Here is an honest comparison. MascotBot positions itself as an interactive avatar solution for 2D brand characters, while each competitor serves a different use case.

| Dimension | MascotBot | HeyGen | D-ID | Ready Player Me |
|---|---|---|---|---|
| Avatar Type | 2D stylized (Rive) | 3D photorealistic (video) | 3D photo-to-video | 3D gaming characters |
| Rendering | Client-side WebGL2 | Server-side GPU | Server-side GPU | Client-side Unity/Unreal |
| Real-Time Latency | <100ms (audio-to-visual) | 1-9 seconds (community reports) | 1-3 seconds | <100ms (local rendering) |
| Character File Size | 50-200KB (.riv) | N/A (video stream) | N/A (video stream) | 50-200MB (.glb) |
| Voice Integration | Built-in (ElevenLabs, Gemini) | Limited (pre-rendered) | Limited (pre-rendered) | None |
| Web SDK | React hooks + components | REST API only | REST API only | Unity/Unreal SDK |
| Pricing | From ~$0.04/min | $29-59/mo + per-minute | $14.40/mo + per-minute | Free tier + enterprise |
| Best For | Brand mascots, chatbots, voice agents | Video generation, presentations | Photo-to-video, marketing | Gaming, metaverse, VR |

When to choose 2D (MascotBot): Brand mascots, customer support chatbots, voice AI agents, educational apps, kiosks, and any application where performance, brand identity, and real-time interaction matter more than photorealism.

When to choose 3D: Photorealistic presenters for marketing videos, VR/AR experiences, video generation where human likeness is essential. Platforms like HeyGen and Synthesia excel here.

It is worth noting that MascotBot is a newer entrant compared to HeyGen (est. 2020) and D-ID (est. 2017). Our technical advantage is in 2D animation performance and Rive-based lip sync, not brand recognition. For a detailed comparison, see MascotBot vs HeyGen.

Common Issues and Solutions

Lip Sync Looks Out of Sync

Symptom: Mouth movements are delayed or stop after the first second of audio.

Why it happens: The naturalLipSyncConfig object is recreated on every React re-render, causing the hook to reinitialize WebSocket interception.

Solution: Wrap the config in useState() or useMemo() for a stable reference:

    // WRONG -- new object every render
    useMascotElevenlabs({ naturalLipSyncConfig: { minVisemeInterval: 40 } });

    // CORRECT -- stable reference
    const [config] = useState({ minVisemeInterval: 40, mergeWindow: 60 });
    useMascotElevenlabs({ naturalLipSyncConfig: config });

In testing with streaming audio from ElevenLabs, lip sync latency stays under 100ms when using streaming mode rather than buffered playback.
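The reason an inline object causes trouble is that two object literals with identical contents are still different references, and React compares hook dependencies by identity. The sketch below simulates that comparison outside React; countReinitializations is a hypothetical helper, and it models only the identity check, not the hook's internals.

```typescript
// Counts how often a consumer would reinitialize if it compares the config
// by reference on every render (for objects, === matches React's Object.is check).
function countReinitializations(configsPerRender: object[]): number {
  let count = 0;
  let previous: object | undefined;
  for (const config of configsPerRender) {
    if (previous === undefined || previous !== config) count++;
    previous = config;
  }
  return count;
}

// Three renders with an inline literal: a new object is created each time.
const inlineConfigs = [0, 1, 2].map(() => ({ minVisemeInterval: 40 }));

// Three renders with one stored object: the same reference is reused.
const stableConfig = { minVisemeInterval: 40 };
const storedConfigs = [stableConfig, stableConfig, stableConfig];

const inlineCount = countReinitializations(inlineConfigs);
const storedCount = countReinitializations(storedConfigs);
```

With the inline literal, every render looks like a configuration change; with the stored object, only the first render does, which is the behavior useState or useMemo restores.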

Character Does Not Render

Symptom: Blank container or "Failed to load Rive file" error.

Solution: Verify the .riv file exists in public/, confirm the artboard name matches the Rive editor exactly ("Character" is case-sensitive), and check that your browser supports WebGL2 (Chrome 56+, Firefox 51+, Safari 15.1+, Edge 79+).
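The WebGL2 check can also be done in code before mounting the avatar, so unsupported browsers get a static image instead of a blank container. This is a minimal sketch: supportsWebGL2 and CanvasLike are hypothetical names, and the canvas factory is injected so the function can be exercised without a browser.

```typescript
// Minimal structural type so the check works with a real canvas or a test double.
interface CanvasLike {
  getContext(contextId: string): unknown;
}

// Returns true if the canvas produced by `createCanvas` yields a WebGL2 context.
function supportsWebGL2(createCanvas: () => CanvasLike): boolean {
  try {
    const context = createCanvas().getContext("webgl2");
    return context !== null && context !== undefined;
  } catch {
    // Some environments throw instead of returning null.
    return false;
  }
}

// Test doubles standing in for browsers with and without WebGL2.
const withWebGL2 = supportsWebGL2(() => ({ getContext: () => ({}) }));
const withoutWebGL2 = supportsWebGL2(() => ({ getContext: () => null }));
```

In the browser, the factory would be `() => document.createElement("canvas")`, and a false result is the cue to render the static fallback image.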

High Memory Usage on Mobile

Symptom: App becomes sluggish after extended use on mobile devices.

Solution: Load only one Rive character at a time. Optimize custom .riv files to stay under 200KB. Call cleanup functions on unmount to release WebGL contexts. Always add playsInline to <audio> elements for iOS Safari.

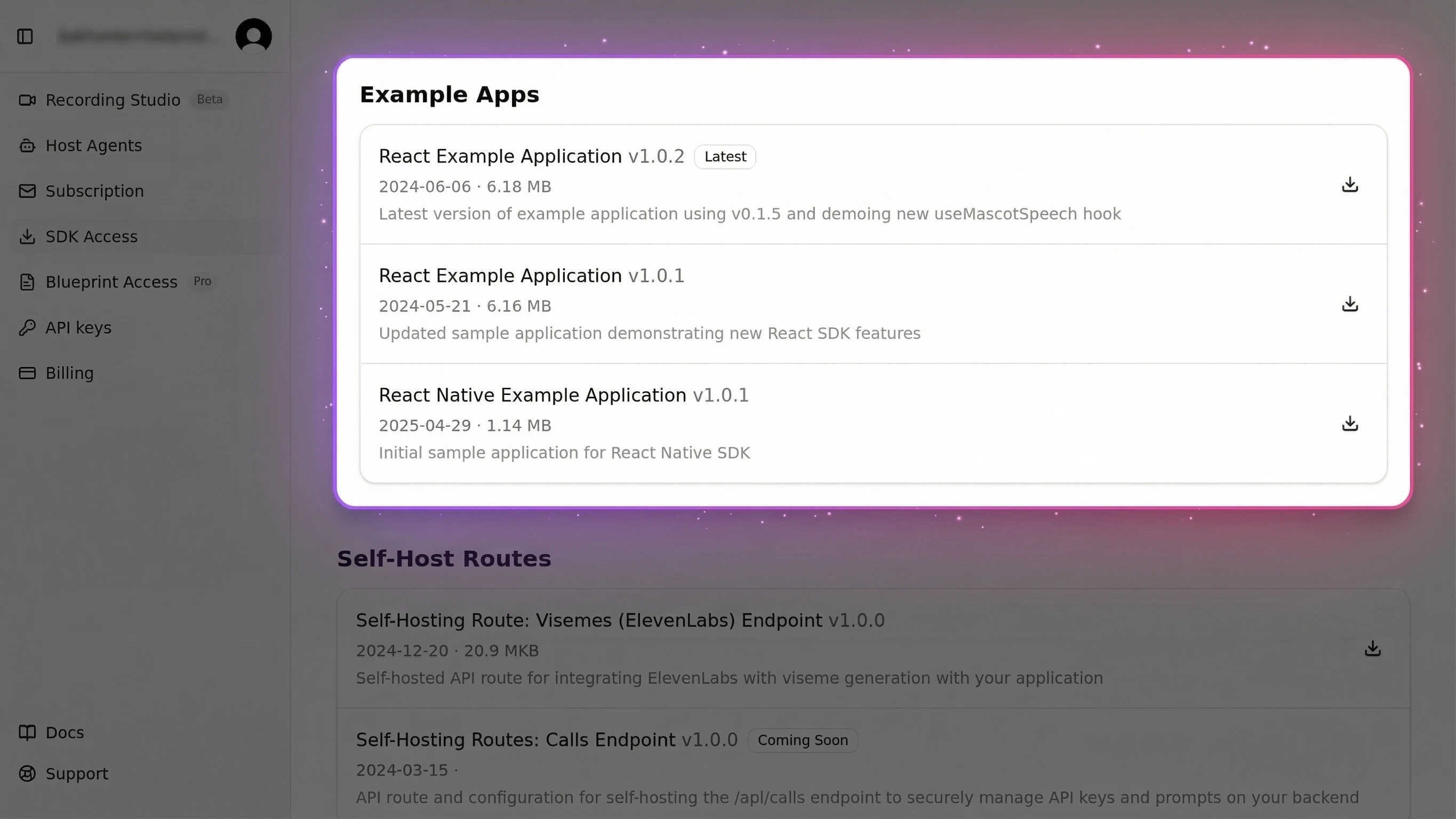

Next Steps

Now that you have a working 2D avatar SDK integration with lip sync and voice AI, here is where to go next:

- SDK Quick Start guide -- The minimal 10-minute version if you want the fastest path to a demo

- ElevenLabs Avatar tutorial -- Deep integration guide for production voice applications

- Lip Sync API tutorial -- Technical deep-dive into viseme mapping, phoneme detection, and animation performance

- Custom brand mascot guide -- Complete guide to designing and importing your own 2D brand character

I just want to try it. Show me the fastest path to a working demo.

Clone the starter template and have a talking avatar running in under 5 minutes:

    git clone https://github.com/mascotbot-templates/elevenlabs-avatar.git
    cd elevenlabs-avatar
    pnpm install && pnpm install ./mascotbot-sdk-react-0.1.8.tgz
    cp .env.example .env.local
    # Add your API keys to .env.local
    pnpm dev

elevenlabs-avatar: Starter template for the MascotBot 2D Avatar SDK, including ElevenLabs voice integration, lip sync, and ready-to-deploy configuration.

Frequently Asked Questions

What is a 2D avatar SDK?

A 2D avatar SDK is a developer toolkit for adding animated, stylized characters to apps and websites. Unlike 3D avatar SDKs that render photorealistic human models, 2D avatar SDKs power cartoon-style mascots with real-time lip sync, facial expressions, and voice AI integration. MascotBot is the leading 2D avatar SDK, supporting React, Flutter, and vanilla JavaScript.

How does lip sync work in 2D avatars?

Lip sync in 2D avatars works by converting audio input into phonemes (speech sounds), mapping those phonemes to visemes (mouth shapes), and rendering the corresponding mouth animation in real time. MascotBot uses 16 viseme shapes rendered through Rive animation at 120fps, achieving under 100ms latency from audio input to visual output.

2D vs 3D avatar -- which is better for my app?

Choose 2D for brand mascots, chatbots, voice agents, and performance-sensitive applications. 2D avatars render at 120fps with character files under 500KB. Choose 3D for photorealistic presenters, VR/AR, or video generation where human likeness matters more than performance and brand customization.

How to integrate an avatar SDK with React?

Install the MascotBot React SDK, import MascotProvider, MascotClient, and useRive, then render the character component. Connect an audio source for lip sync and set expressions programmatically. The full integration takes under 15 minutes with zero native dependencies. See Step 2 above for the complete code.

Can I use my own character design with a 2D avatar SDK?

Yes. MascotBot serves as a 2D avatar creator that supports any Rive character. Design your character in Rive, set up the 16 required mouth shapes and expression states, export the .riv file, and load it into MascotBot. Any 2D character that follows the MascotBot Rive blueprint works with full lip sync support.

What does a 2D avatar SDK cost?

MascotBot offers a free developer tier for prototyping and testing. Production pricing starts at approximately $0.04 per minute of avatar interaction -- roughly 2.5-5x cheaper than photorealistic avatar platforms like HeyGen ($0.10-0.20/min) or Synthesia ($0.20/min). Volume discounts are available for enterprise deployments.
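For budgeting, the per-minute rates quoted above translate into monthly figures with simple arithmetic. The sketch below works in cents to avoid floating-point rounding; monthlyCostUsd and priceRatio are hypothetical helpers, and the usage figure of 100 minutes per day is an assumed example, not a benchmark.

```typescript
// Rate in cents per minute, from the approximate starting price quoted above.
const MASCOTBOT_CENTS_PER_MIN = 4;

// Monthly cost in US dollars for a given daily usage and per-minute rate.
function monthlyCostUsd(minutesPerDay: number, centsPerMinute: number, days = 30): number {
  return (minutesPerDay * days * centsPerMinute) / 100;
}

// How many times more expensive another per-minute rate is than the base rate.
function priceRatio(otherCentsPerMinute: number, baseCentsPerMinute = MASCOTBOT_CENTS_PER_MIN): number {
  return otherCentsPerMinute / baseCentsPerMinute;
}

const monthlyAtHundredMinutes = monthlyCostUsd(100, MASCOTBOT_CENTS_PER_MIN); // 120
const ratioAtTenCents = priceRatio(10);    // 2.5
const ratioAtTwentyCents = priceRatio(20); // 5
```

At the assumed 100 minutes per day, the $0.04 rate comes to $120 per month, and the quoted competitor rates of $0.10 and $0.20 per minute work out to 2.5x and 5x that rate.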