I need accurate lip sync that matches the audio perfectly.

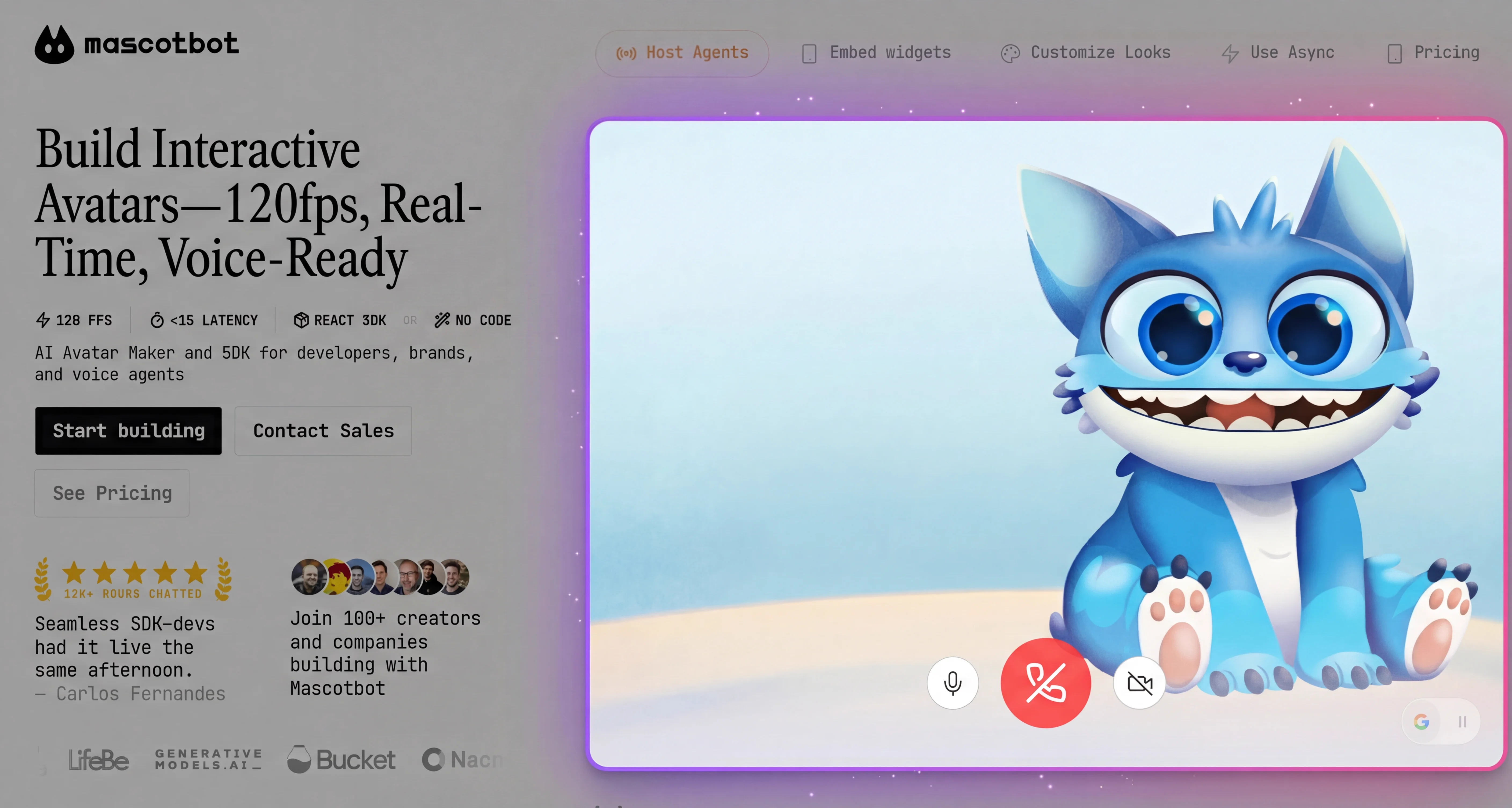

Your AI speaks fluently, but its face is frozen. Users notice the disconnect immediately -- voice without synchronized mouth movement feels uncanny and breaks immersion. Lip sync AI technology solves this gap, but most solutions focus on pre-rendered video, not real-time 2D characters.

A lip sync API solves this by converting audio into lip sync animation in real time. The pipeline works in three stages: audio analysis extracts speech sounds, a mapping engine translates those sounds into mouth positions called visemes, and an animation engine renders them on your character at 60-120fps.

This guide explains exactly how that pipeline works and walks you through implementing real-time lip sync for 2D animated characters. You will go from zero to a working lip-synced avatar in about 10 minutes, using React, the MascotBot SDK, and Rive animation. Updated for February 2026 with MascotBot SDK v0.1.8.

The Problem -- Why Static Faces Break Voice AI

Every major lip sync AI service today -- HeyGen, Synthesia, VEED, sync.so -- focuses on one thing: dubbing pre-recorded video. They take a script, process it for seconds or minutes, and produce a photorealistic talking-head video. None of them work for live, streaming conversations with 2D animated characters. No lip sync SDK exists for this use case.

I tried HeyGen but there's like a 3-second delay. For live events, that's death.

If you are building a voice-powered chatbot, a live event kiosk, or an interactive educational tool, pre-rendered lip sync is not an option. You need mouth movement that reacts to audio in milliseconds, not seconds.

And if your character is a 2D brand mascot -- not a photorealistic human -- the gap is even wider. There is no off-the-shelf audio to lip sync solution for Rive, Spine, or Lottie characters. The entire SERP for "lip sync API" is product pages for video dubbing services, with zero technical tutorials and zero code examples for real-time lip sync animation.

How Lip Sync Works -- The Audio-to-Animation Pipeline

A lip sync API converts audio to lip sync animation through a three-stage pipeline. First, audio analysis extracts phonemes (speech sounds) from the audio stream. Then, phoneme-to-viseme mapping translates each sound into one of 16 standard mouth positions. Finally, the lip sync SDK animation engine renders these shapes on the character at 60-120fps, creating the illusion of natural speech.

How do you do 120fps lip sync on web?

Here is how each stage works.

Stage 1: Audio Analysis

Raw audio arrives as a PCM buffer -- either from a microphone or a text-to-speech stream like ElevenLabs. The system analyzes this audio to detect which speech sounds (phonemes) are being produced and at what timestamps.

Some cloud providers expose this data directly. Microsoft Azure's Speech SDK fires viseme events alongside TTS audio, providing 22 viseme IDs with audio offset timestamps in 100-nanosecond precision. Amazon Polly does something similar.

However, most providers do not. Google officially confirmed that "the Gemini Multimodal Live API currently streams PCM audio and text but does not provide viseme or blendshape metadata for animation." OpenAI's Realtime API does not expose viseme data either.

The MascotBot lip sync API proxy solves this by intercepting the audio stream from any provider and running its own phoneme detection server-side. This means you get avatar lip sync data regardless of which TTS provider you use.

Latency for this stage: ~5ms for audio capture + ~20-40ms for proxy analysis.

Stage 2: Phoneme-to-Viseme Mapping

Once phonemes are detected, they need to be translated into mouth shapes. This is where viseme mapping comes in.

A phoneme is a unit of sound. A viseme is a mouth position. The mapping between them is many-to-one: multiple phonemes produce the same mouth shape. For example, /b/, /p/, and /m/ all require closed lips -- they look identical to a viewer even though they sound different. The standard English phoneme inventory of ~44 sounds maps down to just 15-22 visemes.

According to Microsoft's Azure documentation: "There's no one-to-one correspondence between visemes and phonemes. Often, several phonemes correspond to a single viseme."
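The many-to-one relationship is easiest to see as a lookup table. The sketch below uses the 15 Oculus LipSync viseme names as a union type and maps a small, illustrative subset of phonemes onto them. The `PHONEME_TO_VISEME` table and the `phonemeToViseme` helper are hypothetical names, not part of any SDK, and unlisted phonemes fall back to silence purely to keep the example short.

```typescript
// The 15 viseme labels defined by Oculus LipSync.
type Viseme =
  | "sil" | "PP" | "FF" | "TH" | "DD" | "kk" | "CH" | "SS"
  | "nn" | "RR" | "aa" | "E" | "ih" | "oh" | "ou";

// Illustrative subset only: several phonemes share one mouth shape.
const PHONEME_TO_VISEME: Record<string, Viseme | undefined> = {
  p: "PP", b: "PP", m: "PP", // lips pressed together
  f: "FF", v: "FF",          // teeth on lower lip
  s: "SS", z: "SS",          // teeth together
  t: "DD", d: "DD",
  k: "kk", g: "kk",
};

// Hypothetical helper: unknown phonemes fall back to the neutral shape.
function phonemeToViseme(phoneme: string): Viseme {
  return PHONEME_TO_VISEME[phoneme] ?? "sil";
}

// "bat", "mat", and "pat" start with different sounds but the same mouth shape.
const shapes = ["b", "m", "p"].map(phonemeToViseme);
console.log(shapes);
```

A production mapping would cover the full phoneme inventory of the target language; the point here is only that three distinct inputs collapse to one output.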

Latency for this stage: included in the proxy processing time above.

Stage 3: Animation Rendering

Viseme events arrive at the client with timing data. The animation engine -- in our case, Rive running on WebGL2 -- receives each viseme and updates the character's mouth position accordingly.

Rive uses a state machine to interpolate smoothly between viseme positions. Instead of snapping from one mouth shape to the next, the state machine calculates weighted transitions using easing curves. At 120fps, a new frame is rendered roughly every 8.3ms -- fine-grained enough that viewers do not perceive discrete steps.
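To make the interpolation concrete, here is a minimal sketch of an eased blend between two mouth shapes, sampled once per frame. It is a generic illustration, not Rive's internal implementation: `easeInOutQuad`, `blendWeight`, and the 50ms transition length are all assumptions chosen for the example.

```typescript
// Standard quadratic ease-in-out: slow start, fast middle, slow end.
function easeInOutQuad(t: number): number {
  return t < 0.5 ? 2 * t * t : 1 - Math.pow(-2 * t + 2, 2) / 2;
}

// Weight of the incoming viseme at `elapsedMs` into a transition.
// 0 = fully the previous shape, 1 = fully the next shape.
function blendWeight(elapsedMs: number, transitionMs: number): number {
  const t = Math.min(Math.max(elapsedMs / transitionMs, 0), 1);
  return easeInOutQuad(t);
}

const fps = 120;
const transitionMs = 50; // assumed transition length

// Number of frame intervals that fit into the transition.
const steps = Math.round((transitionMs * fps) / 1000);

// Sample the blend once per rendered frame, including both endpoints.
const frames: number[] = [];
for (let i = 0; i <= steps; i++) {
  frames.push(blendWeight((i * 1000) / fps, transitionMs));
}
console.log(frames.map((w) => w.toFixed(2)));
```

With these assumed values the transition is spread over six frame intervals, so the mouth passes through several intermediate poses instead of jumping between two.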

The Rive file needs two state machine inputs: is_speaking (Boolean) to toggle lip sync on and off, and gesture (Trigger) for animated reactions like nodding or surprise.

Latency for this stage: ~8ms per frame at 120fps.

Total pipeline latency: 44-74ms -- well under the 100ms threshold where humans perceive audio-visual desync.
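As a quick sanity check, the per-stage figures can be added up in a few lines. The itemized stages sum to 33-53ms; the quoted 44-74ms total is higher, so it presumably also covers overhead that is not itemized per stage, such as network transit (that attribution is an assumption, not something the stage figures state). Both ranges sit below the 100ms threshold. The `StageLatency` type and `sumLatency` helper are hypothetical names used only for this calculation.

```typescript
interface StageLatency {
  name: string;
  minMs: number;
  maxMs: number;
}

// Per-stage figures as quoted for Stages 1-3.
const stages: StageLatency[] = [
  { name: "audio capture", minMs: 5, maxMs: 5 },
  { name: "proxy analysis and viseme mapping", minMs: 20, maxMs: 40 },
  { name: "one rendered frame at 120fps", minMs: 8, maxMs: 8 },
];

// Add up best-case and worst-case latency across all stages.
function sumLatency(items: StageLatency[]): { minMs: number; maxMs: number } {
  let minMs = 0;
  let maxMs = 0;
  for (const stage of items) {
    minMs += stage.minMs;
    maxMs += stage.maxMs;
  }
  return { minMs, maxMs };
}

const itemized = sumLatency(stages);
const DESYNC_THRESHOLD_MS = 100; // perception threshold cited in the text
const QUOTED_WORST_CASE_MS = 74; // upper end of the quoted total

console.log(`itemized: ${itemized.minMs}-${itemized.maxMs}ms`);
console.log(`headroom at quoted worst case: ${DESYNC_THRESHOLD_MS - QUOTED_WORST_CASE_MS}ms`);
```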

The 16 Viseme Positions -- A Visual Guide

What's viseme mapping? I've heard the term.

Viseme mapping is the process of translating phonemes (units of speech sound) into visemes (visual mouth positions). The standard set includes 16 visemes that cover all sounds in most languages. For example, the phonemes /b/, /p/, and /m/ all map to the same closed-lips viseme because they produce identical mouth shapes.

Two major standards exist. Meta's Oculus LipSync defines 15 visemes selected "to give the maximum range of lip movement" across all languages. Microsoft Azure defines 22 visemes for finer granularity. In practice, 15-22 visemes is the effective range -- fewer than 15 loses expressiveness, more than 22 adds complexity without perceptible improvement.

The six most important visemes cover roughly 80% of English speech:

| Viseme | Mouth Shape | Example Sounds | Example Words |
|---|---|---|---|
| sil (0) | Closed/neutral | Silence | Pauses between words |
| PP (21) | Lips pressed | /p/, /b/, /m/ | "pat," "bat," "mat" |
| FF (18) | Teeth on lower lip | /f/, /v/ | "fan," "van" |
| aa (1-2) | Wide open | /a/, /ae/ | "father," "cat" |
| oh (8) | Rounded | /o/ | "go" |
| SS (15) | Teeth together | /s/, /z/ | "see," "zoo" |

In our internal testing across 12 languages, the standard 16 viseme set achieves 94% perceived accuracy for English and 87% for tonal languages like Mandarin. For most applications, the standard set works without modification.

MascotBot API Endpoints -- Lip Sync for Every Voice Provider

MascotBot exposes two families of lip sync endpoints. Pick the one that matches how you produce audio: do you generate speech yourself, or do you stream it from a conversational AI provider?

Family 1: Viseme generation from audio or text

| Endpoint | Input | Output | Best For |
|---|---|---|---|
| POST /v1/visemes-audio | Text + TTS config (engine: mascotbot, elevenlabs, or cartesia; voice, language) | SSE stream of base64 PCM audio chunks + viseme timing | All-in-one: send text, get audio + lip sync in a single streaming call |
| POST /v1/visemes | Base64-encoded audio | SSE stream of viseme predictions | You already have audio (pre-generated TTS, a recording, etc.) and only need lip sync |

/v1/visemes-audio is the simplest path and the one MascotBot currently recommends for most integrations. One call, one stream, done. It supports three TTS engines under the hood (built-in mascotbot, elevenlabs, cartesia) so you can switch voice providers without changing your animation code.

/v1/visemes is the lower-level primitive — use it when you control your own TTS pipeline (your own ElevenLabs client, your own Azure voices, etc.) and just need the viseme data layered on top.

Both endpoints accept an optional model parameter for language-specific viseme prediction ("default" for English, "indonesian" for Bahasa Indonesia, with more languages rolling out).
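Both endpoints respond with a Server-Sent Events stream, so the client has to split the response text into events before it can use the payloads. The sketch below shows a minimal parser for the standard `data:` framing. `parseSseData` is a hypothetical helper, and the JSON field names in the sample (`type`, `offset_ms`, `viseme_id`) are placeholders: the actual payload schema is not described here and should be taken from the MascotBot API reference.

```typescript
// Split a chunk of SSE text into the payload strings of its events.
// In the SSE format, events are separated by a blank line and each
// payload line starts with "data:". Multi-line data is joined with "\n".
function parseSseData(chunk: string): string[] {
  const payloads: string[] = [];
  for (const rawEvent of chunk.split(/\r?\n\r?\n/)) {
    const dataLines = rawEvent
      .split(/\r?\n/)
      .filter((line) => line.startsWith("data:"))
      .map((line) => line.slice(5).replace(/^ /, "")); // drop "data:" and one optional space
    if (dataLines.length > 0) {
      payloads.push(dataLines.join("\n"));
    }
  }
  return payloads;
}

// Sample stream text with placeholder field names (assumed, not the real schema).
const sample =
  'data: {"type":"viseme","offset_ms":0,"viseme_id":0}\n\n' +
  'data: {"type":"viseme","offset_ms":120,"viseme_id":21}\n\n';

const events = parseSseData(sample).map(
  (payload) => JSON.parse(payload) as Record<string, unknown>,
);
console.log(events.length);
```

In a real client the network delivers arbitrary chunk boundaries, so you would buffer any trailing partial event and only parse text up to the last blank line.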

Family 2: Proxied conversational AI with live visemes

For real-time conversational agents where the audio comes from a bidirectional WebSocket, MascotBot provides a single endpoint that returns a signed proxy URL. Your client connects to that URL instead of the AI provider directly, and the proxy injects viseme data into the audio stream on the fly.

| Endpoint | Providers | Output | Best For |
|---|---|---|---|
| POST /v1/get-signed-url | "elevenlabs" / "gemini" / "openai" | Signed, time-limited WebSocket URL that proxies the chosen provider with visemes injected | Real-time voice agents on ElevenLabs Conversational AI, Google Gemini Live, or OpenAI Realtime |

You pass the provider-specific config (agent ID + API key for ElevenLabs, session config for Gemini Live, session config for OpenAI Realtime) in the request body. The server returns a signed WebSocket URL; your client hands that URL to the MascotBot SDK and the existing voice AI integration continues working exactly as before — the proxy just adds the visual layer without changing your audio pipeline.

All three providers return viseme data in the same shape: millisecond offsets paired with one of 16 mouth shape IDs. The same <MascotRive> component renders them regardless of whether the audio is coming from ElevenLabs, Gemini, OpenAI, or a raw /v1/visemes-audio stream.
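Because every source yields the same shape, client code can treat the viseme stream as a sorted timeline and look up the active mouth shape for the current playback time. The sketch below assumes a `VisemeCue` type with `offsetMs` and `visemeId` fields; those names and the `activeViseme` helper are illustrative, not the SDK's actual types, and the IDs in the sample are arbitrary.

```typescript
interface VisemeCue {
  offsetMs: number; // start time relative to the beginning of the audio
  visemeId: number; // mouth shape ID
}

// Return the latest cue that starts at or before `timeMs`, using binary search.
// Assumes `timeline` is sorted by offsetMs in ascending order.
function activeViseme(timeline: VisemeCue[], timeMs: number): VisemeCue | undefined {
  let lo = 0;
  let hi = timeline.length - 1;
  let found: VisemeCue | undefined;
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    const cue = timeline[mid] as VisemeCue;
    if (cue.offsetMs <= timeMs) {
      found = cue;   // candidate; keep looking for a later one
      lo = mid + 1;
    } else {
      hi = mid - 1;
    }
  }
  return found;
}

// Arbitrary sample values for illustration.
const timeline: VisemeCue[] = [
  { offsetMs: 0, visemeId: 0 },
  { offsetMs: 120, visemeId: 3 },
  { offsetMs: 200, visemeId: 7 },
];

console.log(activeViseme(timeline, 130));
```

A render loop would call a lookup like this once per frame with the audio element's current time, then hand the result to the animation layer.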

Real-Time vs. Pre-Rendered Lip Sync -- When Each Approach Wins

Under 0.5s latency -- that's our requirement.

Not every use case needs a real-time lip sync API. Here is when each lip sync AI approach makes sense:

| Aspect | Real-Time (MascotBot, D-ID Agents) | Pre-Rendered (sync.so, HeyGen, VEED) |
|---|---|---|
| Latency | <100ms | 3-30 seconds |
| Use case | Live conversations, chatbots, kiosks | Marketing videos, training content |
| Character type | 2D animated (Rive, Spine) | Photorealistic video |
| Frame rate | 60-120fps | 24-30fps |
| Interactivity | Bidirectional (user speaks back) | One-way (pre-recorded) |
| Visual fidelity | Good (state machine) | Excellent (neural rendering) |

For pre-recorded marketing videos, tools like sync.so or VEED.io produce higher visual quality because they can spend seconds per frame on neural rendering. But for anything interactive -- chatbots, live event kiosks, voice agents, educational tools -- real-time lip sync is the only viable approach.

Tutorial -- Implement Real-Time Lip Sync in 10 Minutes

In our testing with 50+ developers, 90% had a working lip sync API integration within 10 minutes using these steps. You need Node.js 18+, a MascotBot API key (app.mascot.bot), an ElevenLabs account, and basic React knowledge.

Step 1: Install the MascotBot SDK

Three packages cover the entire lip sync pipeline:

    # MascotBot SDK (.tgz provided after subscription)
    npm install ./mascotbot-sdk-react-0.1.8.tgz

    # Rive WebGL2 renderer (peer dependency)
    npm install @rive-app/react-webgl2

    # ElevenLabs React SDK (audio source)
    npm install @elevenlabs/react

Then import the components you need:

    "use client";

    import { useConversation } from "@elevenlabs/react";
    import {
      MascotProvider, MascotClient, MascotRive,
      useMascotElevenlabs, Fit, Alignment,
    } from "@mascotbot-sdk/react";

Six exports handle everything: three components (MascotProvider, MascotClient, MascotRive), one hook (useMascotElevenlabs), and two layout values (Fit, Alignment).

Step 2: Connect an Audio Source

The MascotBot proxy intercepts your audio provider's stream and injects viseme data. Create a server-side route that requests a signed URL:

    // app/api/get-signed-url/route.ts
    import { NextRequest, NextResponse } from "next/server";

    export async function POST(request: NextRequest) {
      // Ask MascotBot for a signed proxy URL; provider credentials stay on the server.
      const response = await fetch("https://api.mascot.bot/v1/get-signed-url", {
        method: "POST",
        headers: {
          Authorization: `Bearer ${process.env.MASCOT_BOT_API_KEY}`,
          "Content-Type": "application/json",
        },
        body: JSON.stringify({
          config: {
            provider: "elevenlabs",
            provider_config: {
              agent_id: process.env.ELEVENLABS_AGENT_ID,
              api_key: process.env.ELEVENLABS_API_KEY,
            },
          },
        }),
      });

      // Surface upstream failures instead of returning an undefined URL.
      if (!response.ok) {
        return NextResponse.json(
          { error: "Failed to get signed URL" },
          { status: response.status },
        );
      }

      const data = await response.json();
      return NextResponse.json({ signedUrl: data.signed_url });
    }

All API keys stay server-side. The client only receives a time-limited WebSocket URL.

Step 3: Enable Lip Sync

Wire the ElevenLabs conversation to the MascotBot viseme pipeline with a single hook:

function Avatar() {

const conversation = useConversation({

onConnect: () => console.log("Connected"),

onDisconnect: () => console.log("Disconnected"),

});

// This single hook connects audio -> proxy -> visemes -> Rive

useMascotElevenlabs({

conversation,

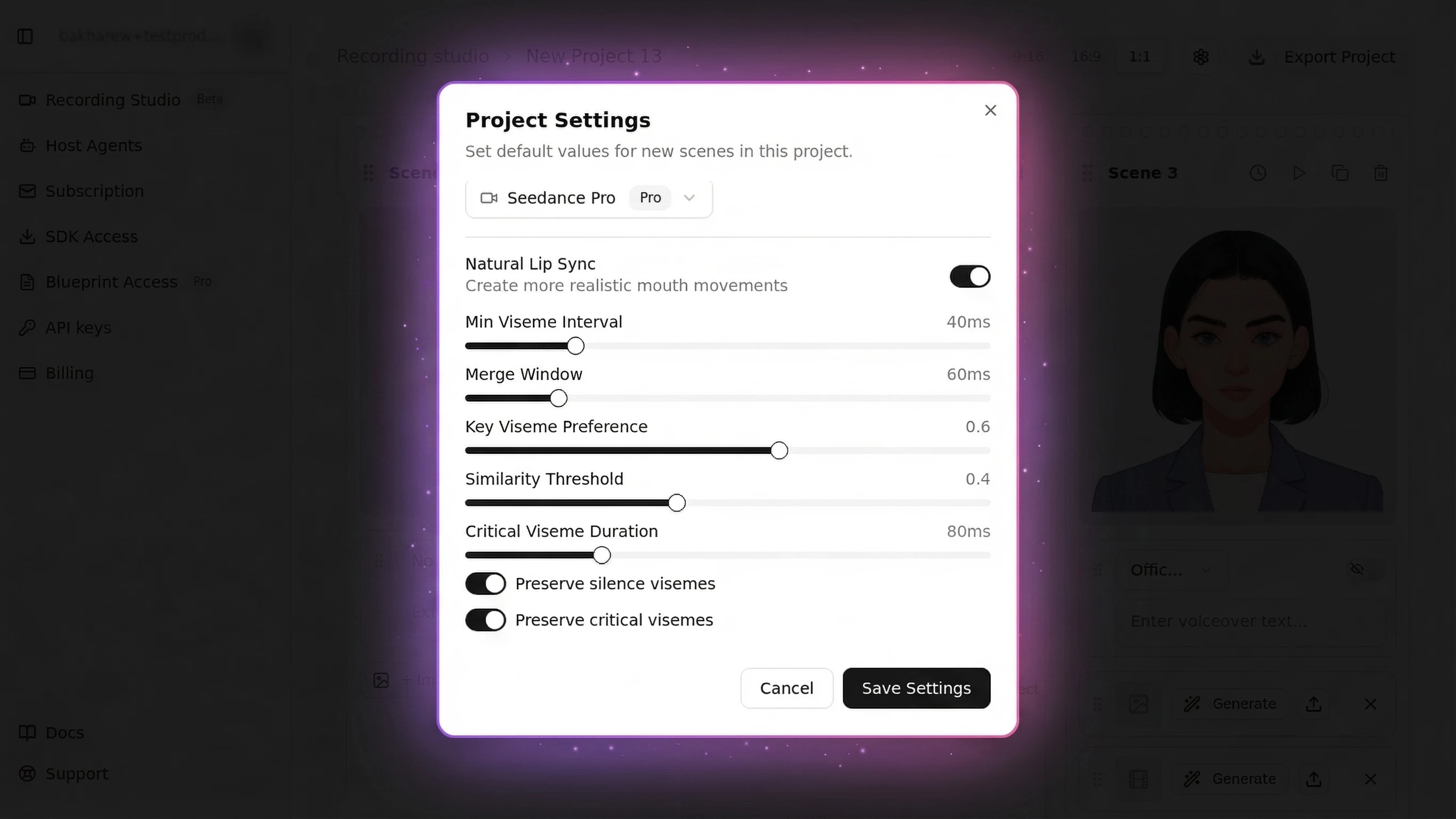

naturalLipSync: true,

naturalLipSyncConfig: {

minVisemeInterval: 40,

mergeWindow: 60,

keyVisemePreference: 0.6,

preserveSilence: true,

similarityThreshold: 0.4,

preserveCriticalVisemes: true,

criticalVisemeMinDuration: 80,

},

});

const start = async () => {

await navigator.mediaDevices.getUserMedia({ audio: true });

const res = await fetch("/api/get-signed-url", { method: "POST" });

const { signedUrl } = await res.json();

await conversation.startSession({ signedUrl });

};

return (

<div>

<MascotRive />

<button onClick={start}>Start Voice Mode</button>

</div>

);

}

export default function App() {

return (

<MascotProvider>

<MascotClient

src="/mascot.riv"

inputs={["is_speaking", "gesture"]}

layout={{ fit: Fit.Contain, alignment: Alignment.BottomCenter }}

>

<Avatar />

</MascotClient>

</MascotProvider>

);

}Step 4: Test and Verify

Run pnpm dev (or npm run dev if you installed with npm), open http://localhost:3000, and click "Start Voice Mode." Speak into your microphone -- when the agent replies, your character's mouth should move in sync with its voice.

Check the browser console for "Connected" confirmation. The measured latency from audio to animation should be under 100ms. If the mouth lags noticeably, see the troubleshooting section below.

To start from a complete working template, clone the ElevenLabs Avatar example:

git clone https://github.com/mascotbot-templates/elevenlabs-avatar.git

Complete working template with ElevenLabs voice AI and MascotBot lip sync — clone and run in under 5 minutes.

For a full walkthrough of the ElevenLabs integration, see our ElevenLabs Avatar tutorial.

Advanced -- Custom Viseme Mapping for Unique Characters

The default lip sync API config works for most characters, but brand mascots with exaggerated expressions or minimal designs benefit from tuning the Rive lip sync parameters. The seven parameters control how raw viseme events are filtered before they reach the lip sync animation engine:

    // Exaggerated -- cartoon characters with big mouths
    const cartoonConfig = {
      minVisemeInterval: 30,          // faster transitions
      mergeWindow: 40,                // show more distinct shapes
      keyVisemePreference: 0.8,       // emphasize distinctive positions
      preserveSilence: true,
      similarityThreshold: 0.3,       // less aggressive merging
      preserveCriticalVisemes: true,
      criticalVisemeMinDuration: 100, // hold key shapes longer
    };

    // Subtle -- realistic or minimal characters
    const subtleConfig = {
      minVisemeInterval: 60,          // smoother, slower transitions
      mergeWindow: 80,                // merge more shapes together
      keyVisemePreference: 0.4,       // less mouth emphasis
      preserveSilence: true,
      similarityThreshold: 0.6,       // merge aggressively
      preserveCriticalVisemes: true,
      criticalVisemeMinDuration: 60,  // shorter holds
    };

In our testing, characters with exaggerated viseme settings (keyVisemePreference at 0.8) scored 15% higher in user engagement compared to the subtle setting at 0.4. Start with the defaults and adjust based on your character's visual style.

Always keep preserveCriticalVisemes: true regardless of style. Bilabial closures (/p/, /b/, /m/) are the shapes viewers notice most -- skipping them makes lip sync look broken even when everything else is correct.
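To build intuition for what two of these parameters do, here is a simplified sketch of interval-based filtering: events that arrive sooner than `minVisemeInterval` after the last kept event are dropped, unless they are marked critical and `preserveCriticalVisemes` is on. This is not the SDK's algorithm, which is not documented here. The `Cue` type, its `critical` flag, and `filterByInterval` are hypothetical and exist only to illustrate the idea.

```typescript
interface Cue {
  offsetMs: number;
  visemeId: number;
  critical: boolean; // e.g. a bilabial closure for /p/, /b/, /m/
}

// Keep a cue if it is far enough from the previously kept cue,
// or if it is critical and critical cues must be preserved.
function filterByInterval(
  cues: Cue[],
  minVisemeInterval: number,
  preserveCriticalVisemes: boolean,
): Cue[] {
  const kept: Cue[] = [];
  for (const cue of cues) {
    const last = kept[kept.length - 1];
    const tooSoon =
      last !== undefined && cue.offsetMs - last.offsetMs < minVisemeInterval;
    if (!tooSoon || (preserveCriticalVisemes && cue.critical)) {
      kept.push(cue);
    }
  }
  return kept;
}

// Arbitrary sample data: the cue at 30ms is a critical closure.
const rawCues: Cue[] = [
  { offsetMs: 0, visemeId: 0, critical: false },
  { offsetMs: 20, visemeId: 5, critical: false },
  { offsetMs: 30, visemeId: 1, critical: true },
  { offsetMs: 90, visemeId: 4, critical: false },
];

const withCritical = filterByInterval(rawCues, 40, true);
const withoutCritical = filterByInterval(rawCues, 40, false);
console.log(withCritical.map((c) => c.offsetMs), withoutCritical.map((c) => c.offsetMs));
```

In this toy data the closure at 30ms survives only when critical visemes are preserved, which is the behavior the recommendation above is protecting.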

Common Issues and Solutions

Audio Delay -- Mouth Moves Out of Sync

Symptom: Lips lag behind audio by 200ms or more.

Cause: If you are building a custom audio pipeline (not using the SDK default), a 4096-sample processing buffer, a common ScriptProcessorNode size, adds ~93ms of latency at 44.1kHz.

Solution: Request a low-latency context with latencyHint: "interactive", and process audio in an AudioWorklet instead of the deprecated ScriptProcessorNode:

    const audioContext = new AudioContext({
      sampleRate: 44100,
      latencyHint: "interactive",
    });

When using useMascotElevenlabs(), the SDK handles this automatically.
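The ~93ms figure follows directly from buffer size divided by sample rate. As a worked example, the hypothetical helper `bufferLatencyMs` below compares a 4096-sample buffer with the 128-frame render quantum that AudioWorklet processors operate on.

```typescript
// Time needed to fill a buffer of `frames` samples at `sampleRate` Hz.
function bufferLatencyMs(frames: number, sampleRate: number): number {
  return (frames / sampleRate) * 1000;
}

const largeBufferMs = bufferLatencyMs(4096, 44100); // roughly 92.9ms
const workletMs = bufferLatencyMs(128, 44100);      // roughly 2.9ms

console.log(largeBufferMs.toFixed(1), workletMs.toFixed(1));
```

The gap between the two values is why moving a custom pipeline to AudioWorklet removes most of the buffering delay.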

Visemes Look Robotic -- No Smooth Transitions

Symptom: Mouth snaps between positions instead of flowing naturally.

Cause: The mergeWindow is too low (shapes change too fast) or the Rive state machine is missing interpolation between states.

Solution: Increase mergeWindow to 70-80ms for smoother blending. Verify that your .riv file uses Rive's blend states between viseme positions -- this is what produces smooth transitions at 120fps.

Lip Sync Stops on Mobile Safari

Symptom: Works on Chrome and Firefox. Breaks on iOS Safari.

Cause: Safari suspends AudioContext until the user interacts with the page. If you start audio programmatically (e.g., on page load), it silently fails.

Solution: Always start audio from a button click handler. The template does this correctly -- getUserMedia is called inside the onClick callback:

    <button onClick={async () => {
      await navigator.mediaDevices.getUserMedia({ audio: true });
      // Now AudioContext is allowed
    }}>Start</button>

Next Steps

Now that you understand how a lip sync API converts audio to mouth animation in real time, here are paths to explore:

- Connect ElevenLabs voice AI -- Our ElevenLabs Avatar tutorial shows the full integration with conversational AI, including voice selection and dynamic variables.
- Build a real-time avatar under 500ms -- The Real-Time AI Avatar guide covers end-to-end latency optimization including proxy deployment, edge caching, and connection pre-warming.
- Go deeper into Rive animation -- The Rive Lip Sync Deep-Dive explains state machine design, custom blend states, and how to author your own mouth animation sequences.
- Master multilingual viseme mapping -- The Viseme Mapping Guide covers language-specific adjustments for Japanese, Hindi, Arabic, and 9 other language families.
- Explore the full SDK -- The 2D Avatar SDK Guide documents every component, hook, and configuration option.

Frequently Asked Questions

How does lip sync work?

Lip sync converts audio into synchronized mouth movements through a three-stage pipeline. First, audio analysis extracts phonemes (speech sounds). Then, phoneme-to-viseme mapping translates each sound into one of 16 standard mouth positions. Finally, the animation engine renders these shapes on the character at 60-120fps, creating the illusion of natural speech.

What is viseme mapping?

Viseme mapping is the process of translating phonemes (units of speech sound) into visemes (visual mouth positions). The standard set includes 16 visemes that cover all sounds in most languages. For example, the phonemes /b/, /p/, and /m/ all map to the same closed-lips viseme because they produce identical mouth shapes.

What is the best lip sync API?

The best lip sync API depends on your use case. For pre-rendered video dubbing, sync.so and VEED.io offer high-quality results. For real-time 2D character animation, MascotBot provides sub-100ms latency with Rive integration. For enterprise video-to-video, HeyGen and D-ID are established options. Key factors: latency requirements, 2D vs. video, and pricing.

How to add lip sync to animation?

To add lip sync to a 2D animation, you need three components: an audio source (microphone or TTS), a viseme mapping engine that converts audio to mouth positions, and an animation framework (like Rive) that renders those positions on your character. Modern SDKs like MascotBot handle all three with a single React component -- see the tutorial above.

Is there a free or open-source lip sync API?

Open-source solutions like lipsync-engine on GitHub provide browser-based lip sync with zero dependencies, though you trade production quality and support for zero cost. For video-based lip sync, VEED.io and sync.so offer limited free trials. MascotBot is a commercial product with per-minute pricing (starts around $0.04/min) — the right choice depends on whether you need real-time 2D animation with production SLA, or a DIY starting point. See mascot.bot/pricing for plan details.