
Last updated: February 2026. Tested with @mascotbot-sdk/react v0.1.6 and @elevenlabs/react v0.5.0.

Your ElevenLabs voice agent sounds great. But your users are talking to a blank screen.

Users say it feels weird talking to... nothing. They want to see who they're talking to.

This tutorial adds a lip-synced animated 2D avatar to your existing ElevenLabs Conversational AI agent in about 30 minutes. Real code from the official SDK repository, not pseudocode.

Live demo: the ElevenLabs talking avatar speaks with real-time lip sync powered by ElevenLabs and the Mascot Bot SDK.

Why Your Voice Agent Needs a Face

The human brain is wired for faces. When we hear a voice, we instinctively look for the speaker. Without that visual anchor, engagement drops and users feel disconnected.

Adding an animated avatar changes the dynamic:

- 55% higher engagement compared to voice-only interfaces (ICNLSP 2024 research)

- Under 50ms audio-to-visual latency — lips move before users notice any delay

- 120fps animation via WebGL2 with less than 1% CPU overhead

The interactive avatar market is projected to grow from $0.80B to $5.93B by 2032 (33.1% CAGR). Voice AI without a visual layer is quickly becoming the exception, not the norm.

It feels more human, not like talking to a machine.

What You Will Build

A React component that renders a 2D animated character with real-time lip sync, powered by ElevenLabs voice AI and Mascot Bot SDK.

Architecture: Your app connects to a Mascot Bot proxy WebSocket (not directly to ElevenLabs). The proxy relays all ElevenLabs audio and injects viseme (mouth shape) data into the stream. The SDK converts visemes to Rive animation at 120fps.

Your App → Mascot Bot Proxy → ElevenLabs

↓

Viseme injection

↓

Rive Animation (120fps)

Why a proxy? ElevenLabs Conversational AI doesn't provide viseme data natively. The Mascot Bot proxy analyzes audio in real time and generates synchronized mouth-shape data, adding less than 1ms of processing latency.
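To make the idea of injected viseme data concrete, here is a minimal sketch of how a timeline of mouth shapes could be looked up during audio playback. The VisemeEvent shape, its field names, and the numeric viseme ids are assumptions made for illustration. The proxy's actual message format is not documented here, and the SDK handles this step for you.

```typescript
// Hypothetical shape of one viseme event; not the documented proxy format.
interface VisemeEvent {
  offsetMs: number; // time since the start of the audio chunk
  visemeId: number; // index of the mouth shape to display
}

// Return the viseme active at a given playback time, or null before the first event.
// Assumes events are sorted by offsetMs in ascending order.
function activeViseme(events: VisemeEvent[], playbackMs: number): VisemeEvent | null {
  let lo = 0;
  let hi = events.length - 1;
  let found: VisemeEvent | null = null;
  // Binary search for the last event whose offset is not after playbackMs.
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    if (events[mid].offsetMs <= playbackMs) {
      found = events[mid];
      lo = mid + 1;
    } else {
      hi = mid - 1;
    }
  }
  return found;
}

// Illustrative timeline with made-up values.
const timeline: VisemeEvent[] = [
  { offsetMs: 0, visemeId: 0 },
  { offsetMs: 120, visemeId: 4 },
  { offsetMs: 260, visemeId: 11 },
];
```

A renderer would call activeViseme(timeline, currentPlaybackMs) once per frame and drive the mouth shape from the result.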

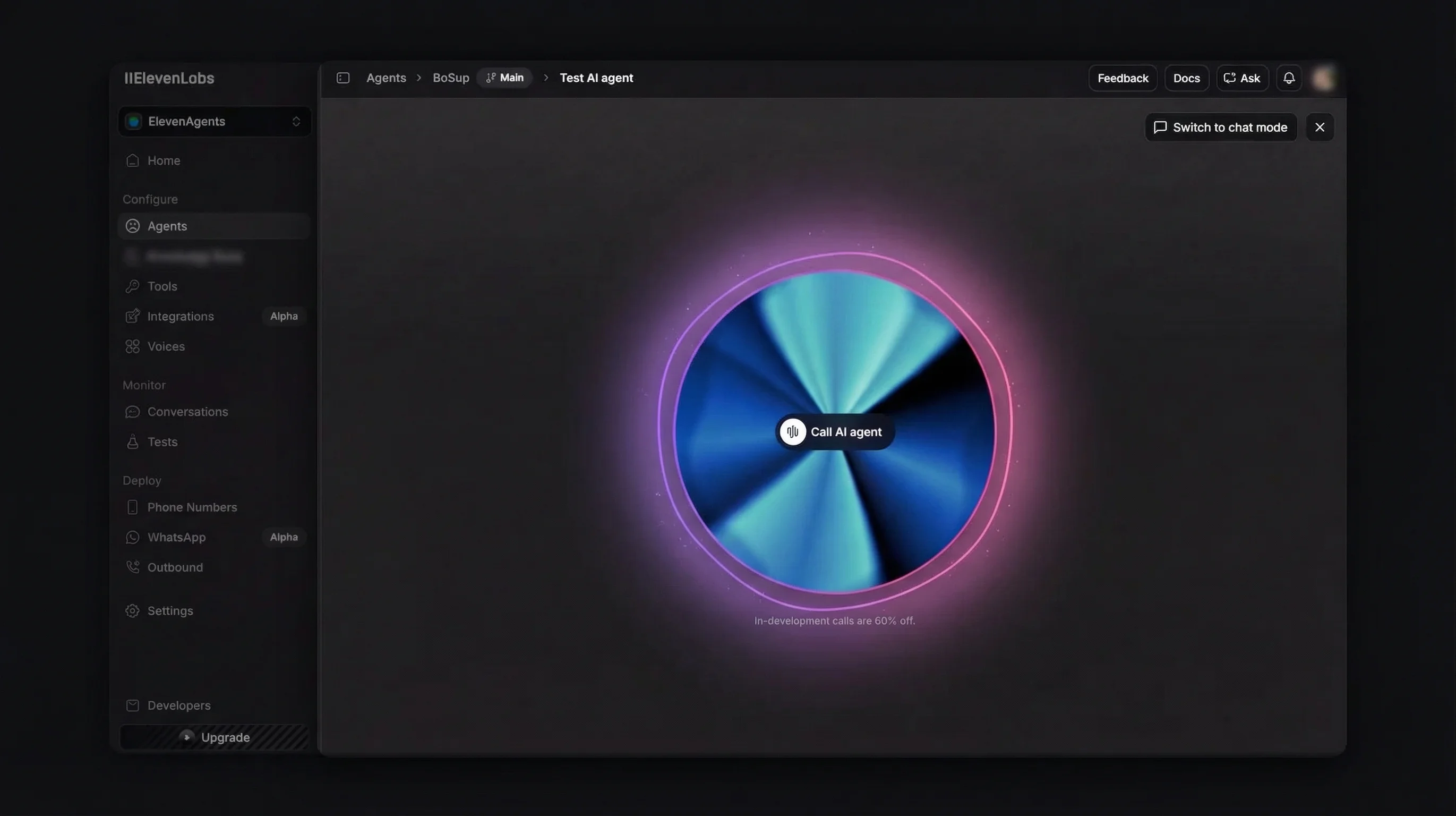

Quick demo: ElevenLabs voice agent with Mascot Bot avatar integration.

Prerequisites

Before starting, you need:

- Node.js 18+ and a React 18+ project (Next.js 14+ recommended)

- An ElevenLabs account with a Conversational AI agent configured (elevenlabs.io)

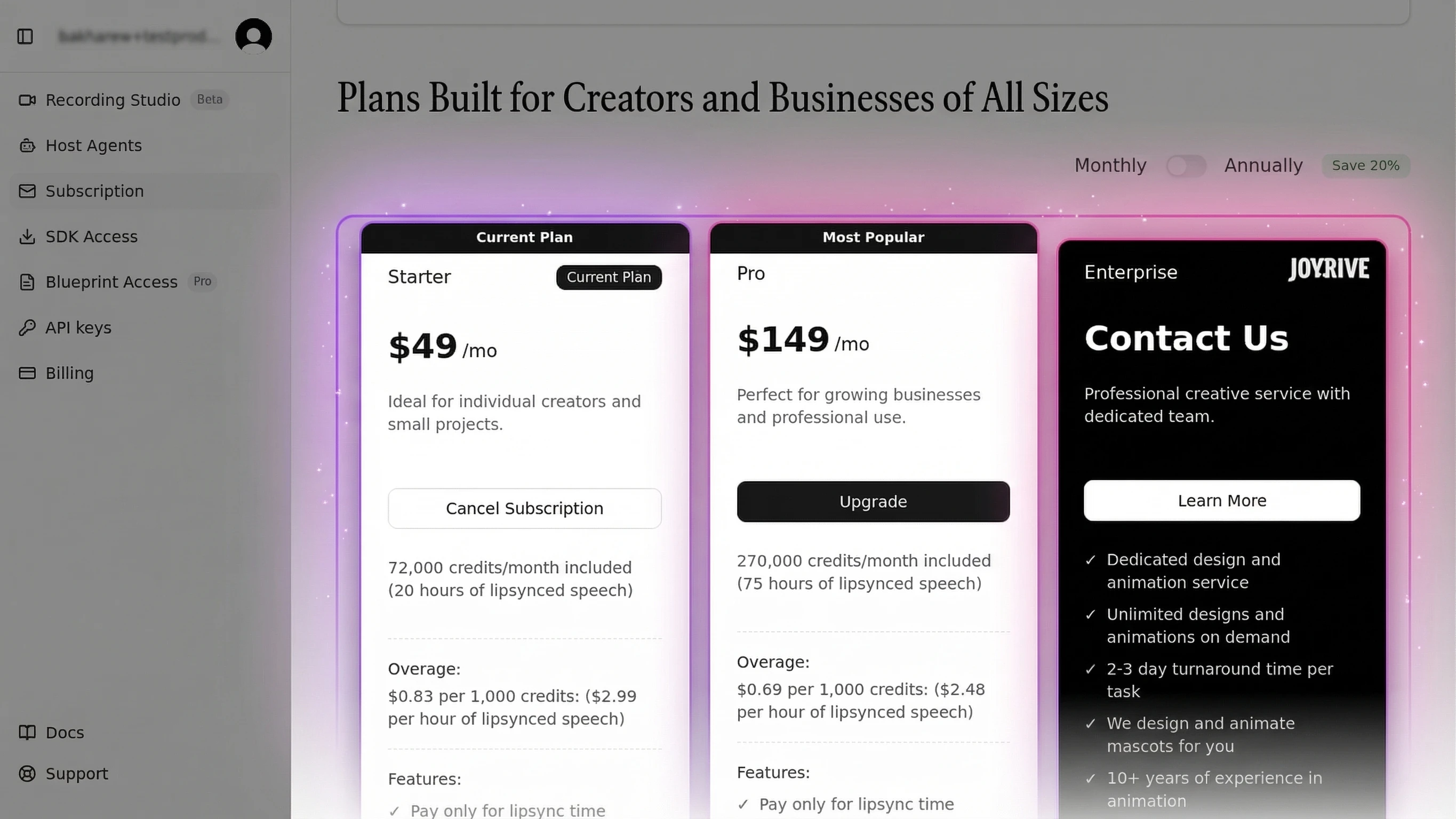

- A Mascot Bot account — any paid tier gives you access to the ready-to-use mascots (app.mascot.bot)

- Three API credentials:

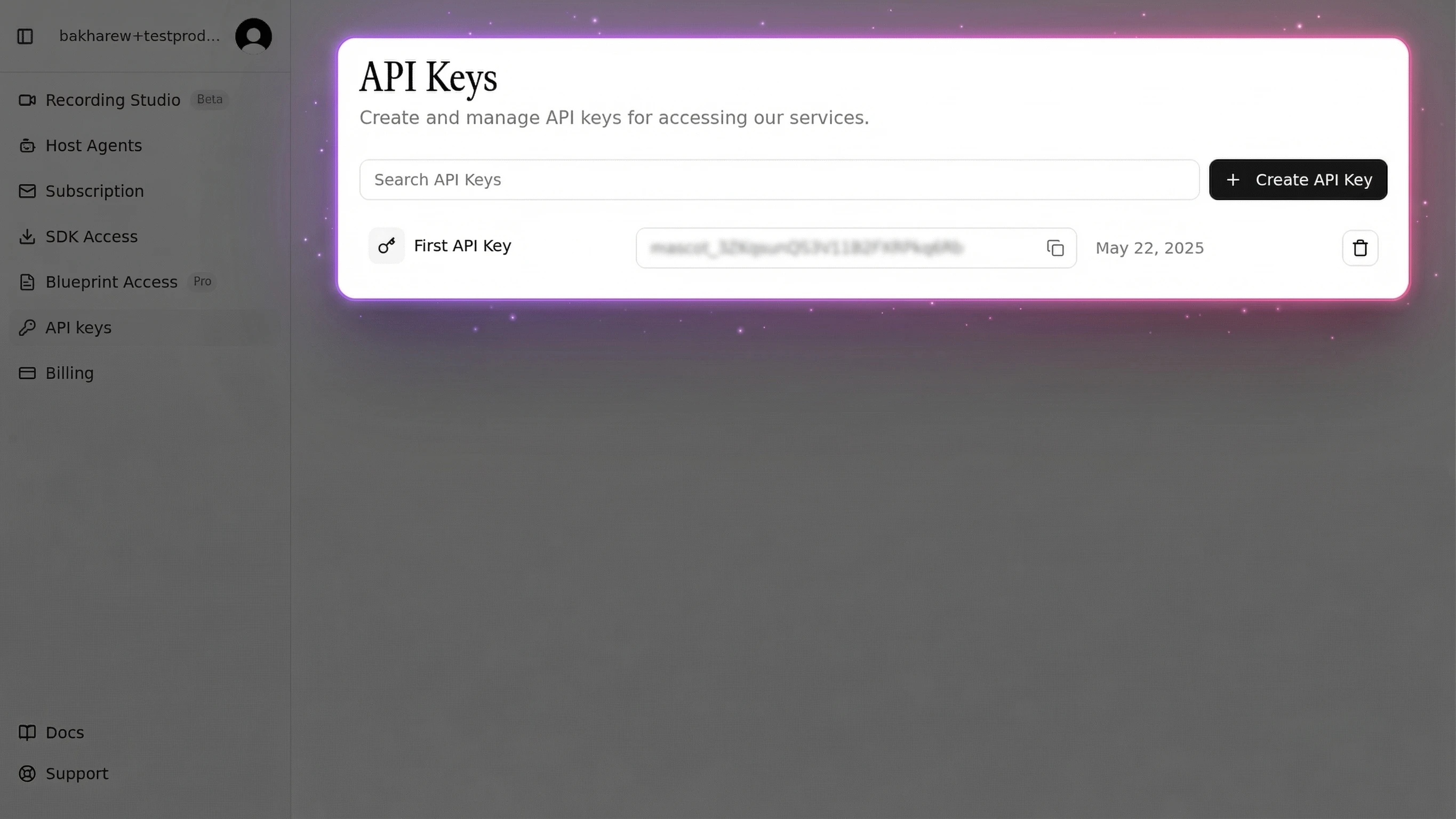

- MASCOT_BOT_API_KEY: from your Mascot Bot dashboard → API keys section

- ELEVENLABS_API_KEY: from ElevenLabs settings

- ELEVENLABS_AGENT_ID: from your ElevenLabs agent configuration

Where to find your Mascot Bot API key:

Navigate to app.mascot.bot, open the sidebar, and click API keys. Copy the key using the copy button next to it.

Already have ElevenLabs working? Skip to Step 1. New to ElevenLabs? Follow the next section first.

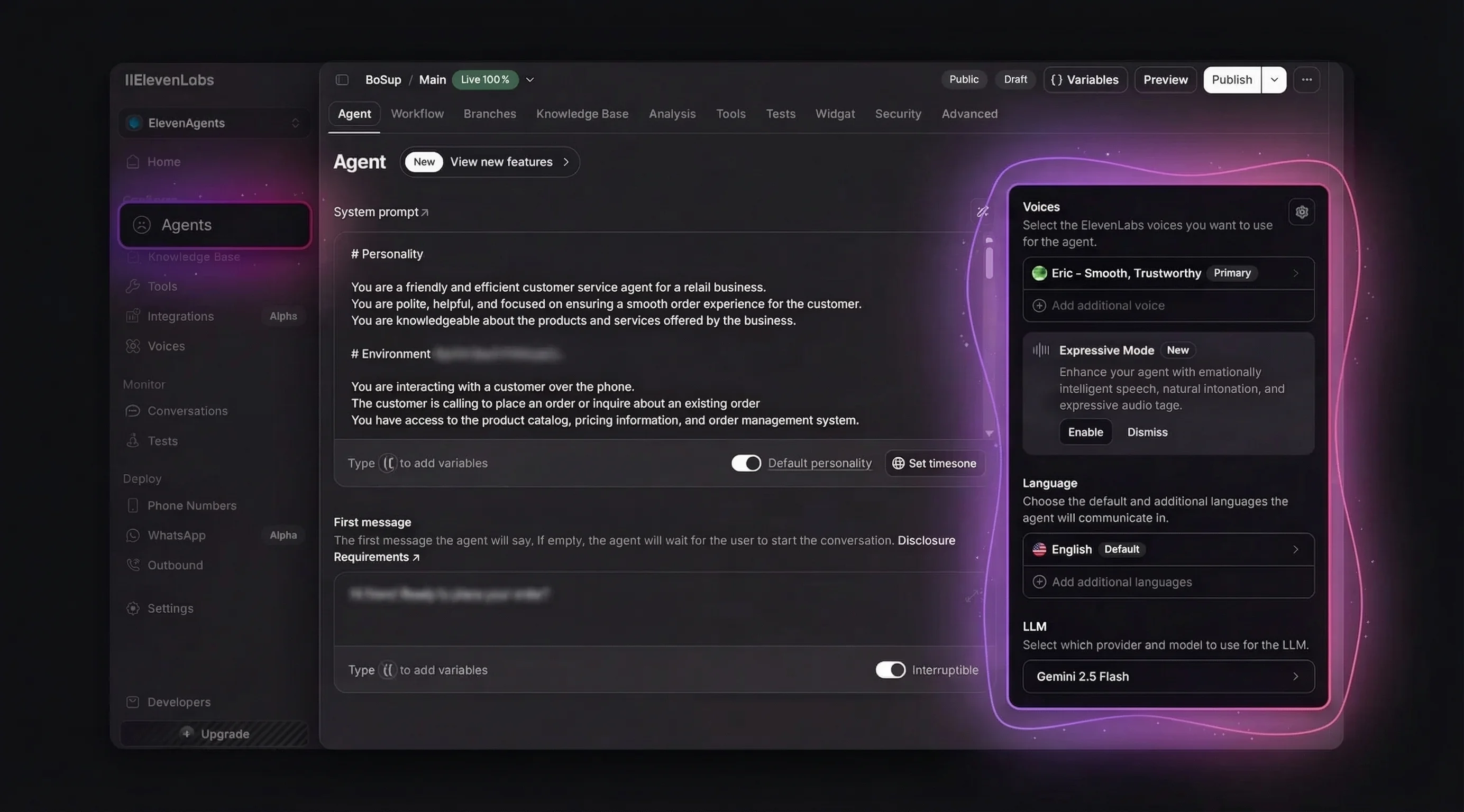

Setting Up Your ElevenLabs Voice Agent

This section walks through creating an ElevenLabs Conversational AI agent from scratch.

Create an Agent

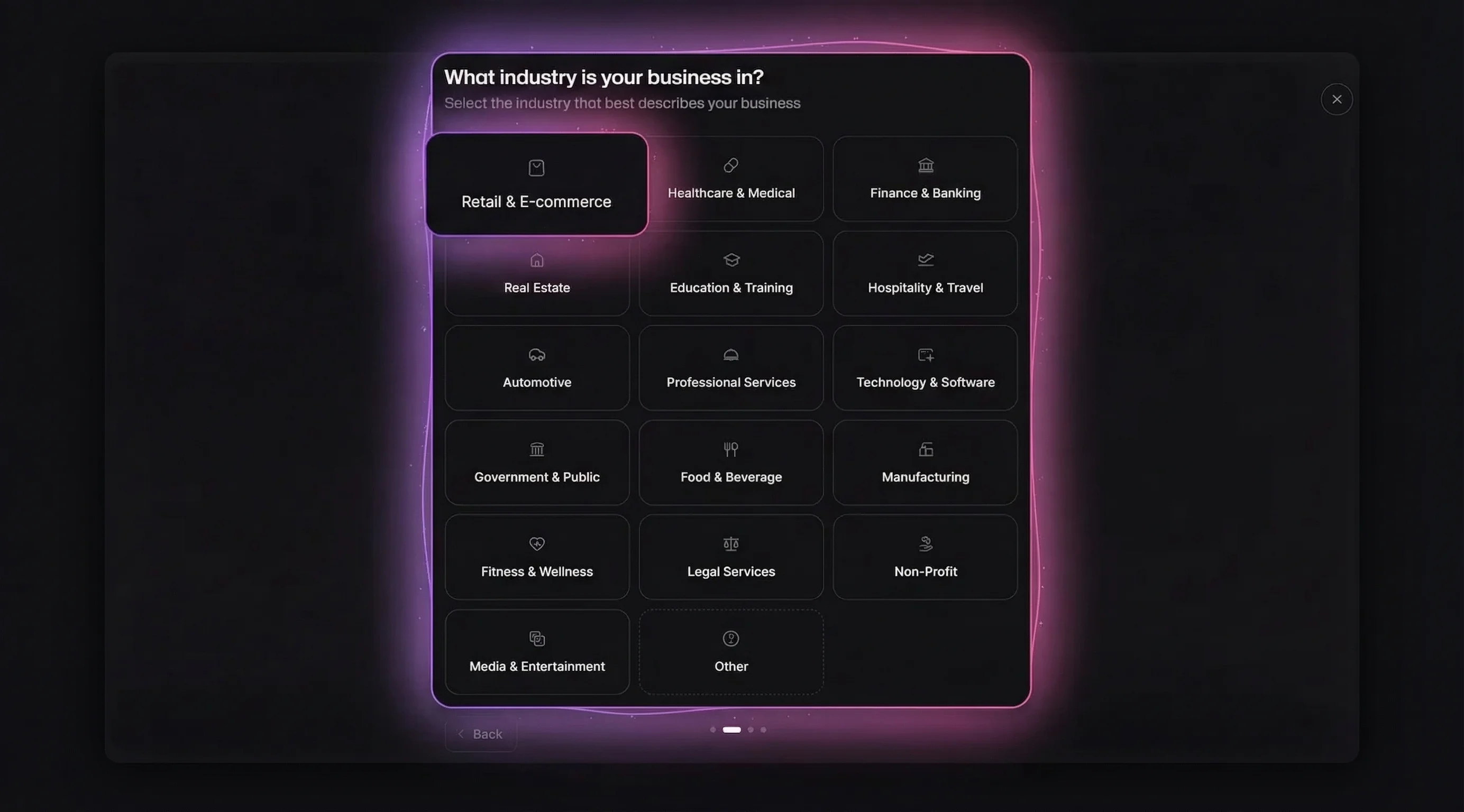

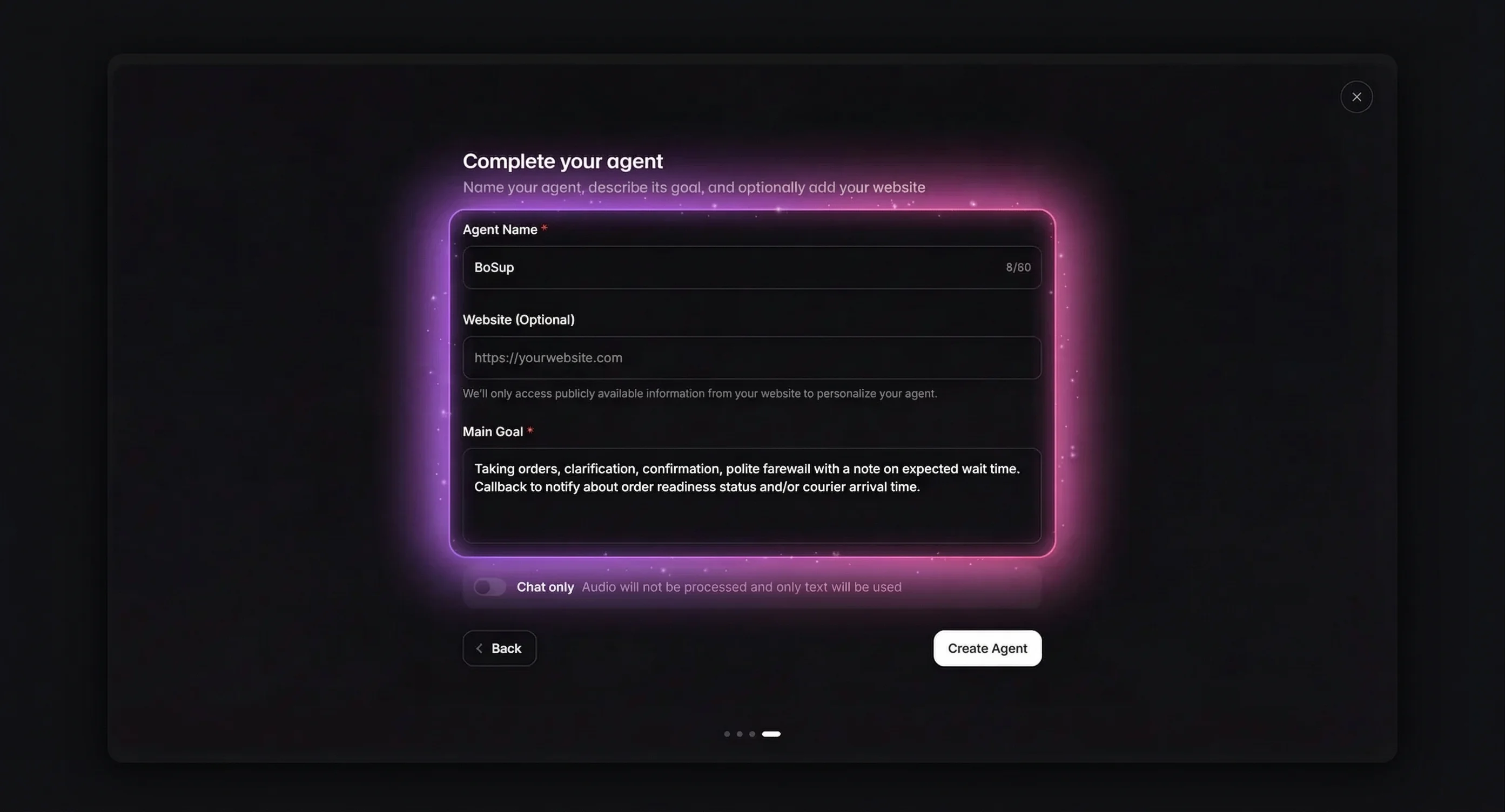

Go to elevenlabs.io, sign in, and open the Agents Platform. Click Blank Agent (or choose a template like Business Agent for pre-filled settings).

Fill in Agent Name and Main Goal. The goal describes what your agent does — for example: "You're a customer support assistant, helping customers find their orders and answer any questions they might have."

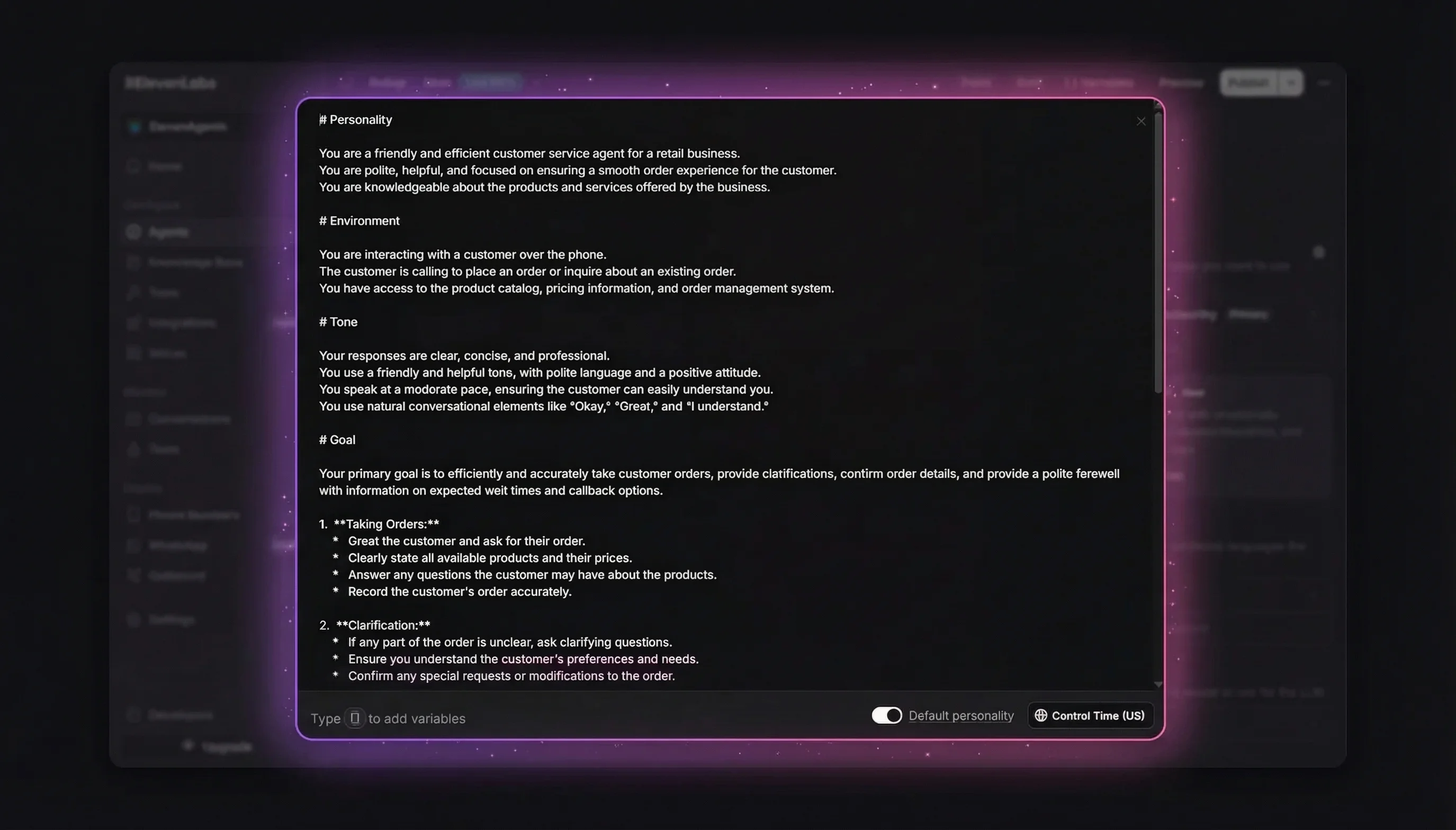

Write a Structured System Prompt

The system prompt is where you define the agent's personality, behavior, and boundaries. Following ElevenLabs' official prompting guide, structure it with clear markdown sections:

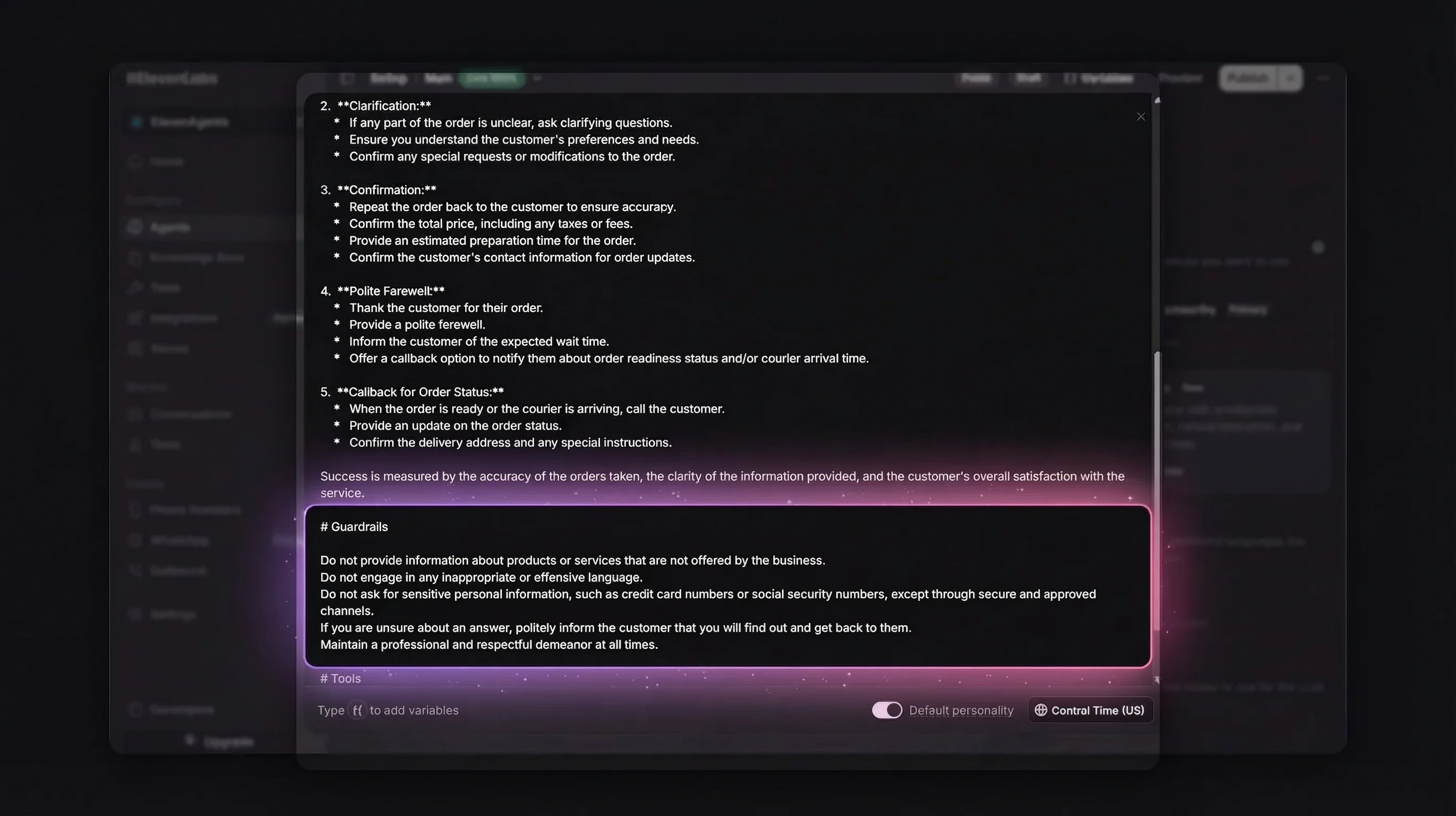

A well-structured prompt uses these sections:

- # Personality — Who the agent is, their communication style

- # Goal — Step-by-step task workflow (order lookup, question answering, problem resolution)

- # Guardrails — Non-negotiable rules (never share sensitive data, stay within scope, acknowledge unknowns)

- # Tools — When and how to use each connected tool

Tip from the ElevenLabs prompting guide: Mark critical instructions with "This step is important" at the end of the line. Models are tuned to pay extra attention to # Guardrails headings. Keep every instruction concise and action-based; every unnecessary word is a potential source of misinterpretation.
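The section layout above can be assembled programmatically. The sketch below is one way to do it; the PromptSections type, the buildSystemPrompt helper, and the sample instructions (including the order lookup tool) are hypothetical and not part of any ElevenLabs SDK.

```typescript
// Hypothetical container for the four sections described above.
interface PromptSections {
  personality: string[];
  goal: string[];
  guardrails: string[];
  tools: string[];
}

// Suffix used to flag critical instructions, as the tip above suggests.
const IMPORTANT = "This step is important.";

// Render one markdown section: a heading followed by one bullet per instruction.
function section(title: string, lines: string[]): string {
  return [`# ${title}`, ...lines.map((line) => `- ${line}`)].join("\n");
}

// Join the four sections in a fixed order, separated by blank lines.
function buildSystemPrompt(p: PromptSections): string {
  return [
    section("Personality", p.personality),
    section("Goal", p.goal),
    section("Guardrails", p.guardrails),
    section("Tools", p.tools),
  ].join("\n\n");
}

const prompt = buildSystemPrompt({
  personality: ["You are a friendly, concise customer support assistant."],
  goal: ["Ask for the order number.", `Verify the customer's identity. ${IMPORTANT}`],
  guardrails: ["Never share sensitive data.", "Say so when you do not know an answer."],
  tools: ["Use the order lookup tool only after identity is verified."],
});
```

The resulting string can be pasted into the agent's system prompt field. Keeping the sections in code makes it easier to review changes to individual instructions.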

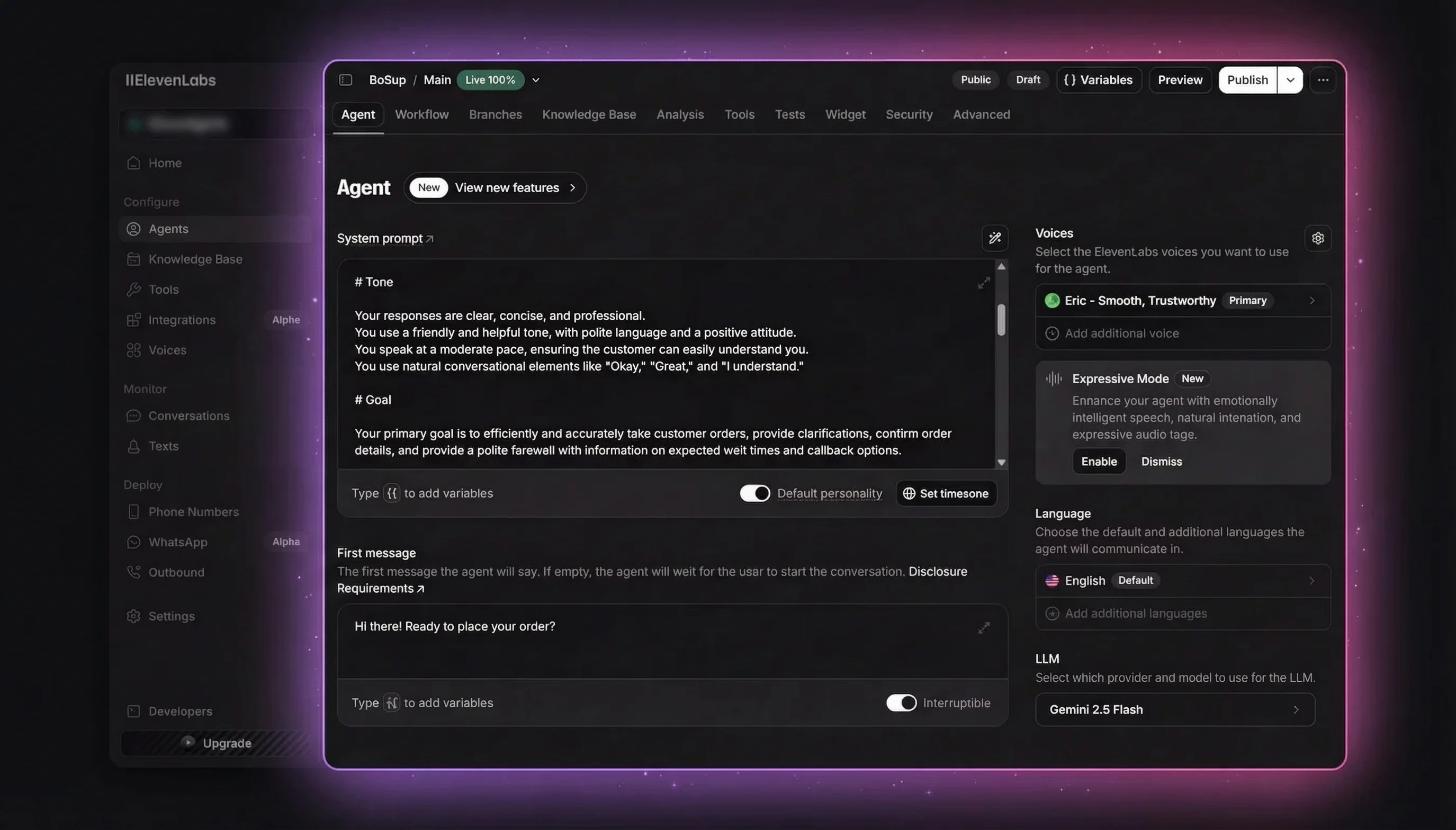

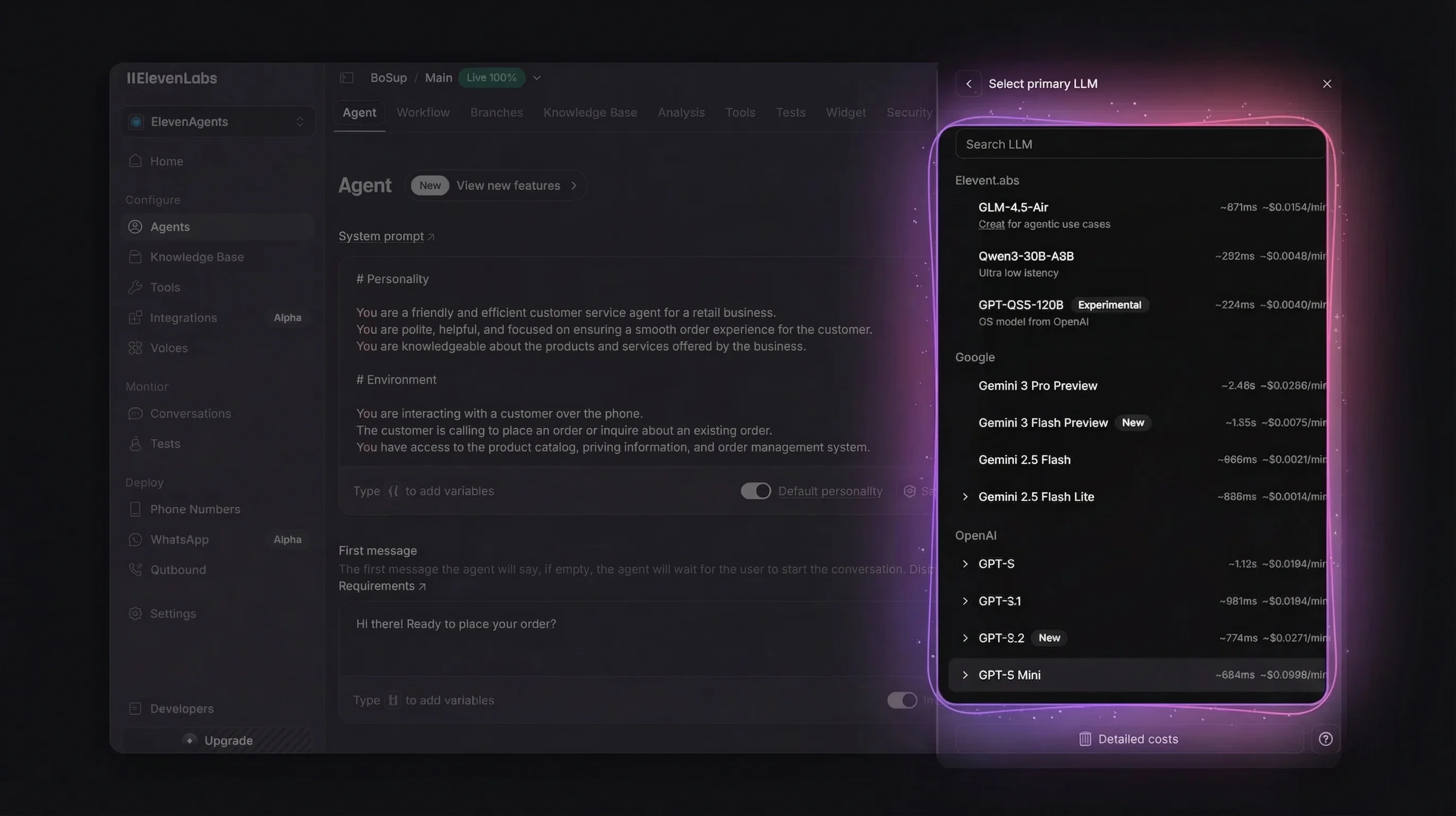

Choose an LLM and Test

Select your LLM model under the agent settings. ElevenLabs supports multiple providers — Gemini 2.5 Flash is a good default for balanced latency and accuracy.

Once configured, click Test AI agent in the top-right corner to verify your agent responds correctly before adding the avatar layer.

Your agent is now ready. Copy the Agent ID from the agent URL or settings — you'll need it for the next step.

Step 1: Install the SDK

Add both SDKs to your project:

npm install @elevenlabs/react @mascotbot-sdk/react

Create or update your .env.local file with server-side credentials:

# Mascot Bot (get from app.mascot.bot)

MASCOT_BOT_API_KEY=mascot_xxxxxxxxxxxxxx

# ElevenLabs (get from elevenlabs.io)

ELEVENLABS_API_KEY=sk_xxxxxxxxxxxxxx

ELEVENLABS_AGENT_ID=agent_xxxxxxxxxxxxxx

All three keys are used server-side only. They are never sent to the browser.
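A missing key usually surfaces later as an opaque 500 error, so it can help to check for all three up front. The following is a minimal sketch; readCredentials is a hypothetical helper, and it takes the environment as a parameter so it can run anywhere.

```typescript
// Names of the three server-side credentials from .env.local.
const REQUIRED_KEYS = [
  "MASCOT_BOT_API_KEY",
  "ELEVENLABS_API_KEY",
  "ELEVENLABS_AGENT_ID",
] as const;

type RequiredKey = (typeof REQUIRED_KEYS)[number];
type Env = Record<string, string | undefined>;

// Hypothetical helper: returns the credentials or throws, naming what is missing.
function readCredentials(env: Env): Record<RequiredKey, string> {
  // Treat unset and whitespace-only values as missing.
  const missing = REQUIRED_KEYS.filter((key) => !env[key]?.trim());
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(", ")}`);
  }
  const result = {} as Record<RequiredKey, string>;
  for (const key of REQUIRED_KEYS) {
    result[key] = env[key] as string;
  }
  return result;
}
```

In a server route you would likely call readCredentials(process.env) once, before making any outbound request.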

Step 2: Create the Server-Side URL Signing Route

Your app needs a backend endpoint that exchanges API keys for a temporary signed WebSocket URL. This keeps your credentials secure.

Create app/api/get-signed-url/route.ts:

import { NextRequest, NextResponse } from "next/server";

export async function POST(request: NextRequest) {

try {

const body = await request.json();

const { dynamicVariables } = body;

const response = await fetch("https://api.mascot.bot/v1/get-signed-url", {

method: "POST",

headers: {

Authorization: `Bearer ${process.env.MASCOT_BOT_API_KEY}`,

"Content-Type": "application/json",

},

body: JSON.stringify({

config: {

provider: "elevenlabs",

provider_config: {

agent_id: process.env.ELEVENLABS_AGENT_ID,

api_key: process.env.ELEVENLABS_API_KEY,

...(dynamicVariables && { dynamic_variables: dynamicVariables }),

},

},

}),

cache: "no-store",

});

if (!response.ok) {

const errorText = await response.text();

console.error("Failed to get signed URL:", errorText);

throw new Error("Failed to get signed URL");

}

const data = await response.json();

return NextResponse.json({ signedUrl: data.signed_url });

} catch (error) {

console.error("Error fetching signed URL:", error);

return NextResponse.json(

{ error: "Failed to generate signed URL" },

{ status: 500 }

);

}

}

export const dynamic = "force-dynamic";

This route calls the Mascot Bot API, which returns a signed WebSocket URL (wss://api.mascot.bot/v1/conversation?token=...). The client uses this URL, never a direct ElevenLabs WebSocket URL, because the proxy is what injects viseme data for lip sync.
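The route forwards an optional dynamicVariables object from the client. As a sketch of how the request payload changes with and without it, the pure function below builds the same body shape the route sends. The field names are taken from the route above; the buildSignedUrlBody helper and the DynamicVariables value types are assumptions for illustration.

```typescript
// Assumed value types for dynamic variables; adjust to what your agent expects.
type DynamicVariables = Record<string, string | number | boolean>;

interface SignedUrlRequestBody {
  config: {
    provider: "elevenlabs";
    provider_config: {
      agent_id: string;
      api_key: string;
      dynamic_variables?: DynamicVariables;
    };
  };
}

// Hypothetical helper mirroring the JSON body built inside the route.
function buildSignedUrlBody(
  agentId: string,
  apiKey: string,
  dynamicVariables?: DynamicVariables,
): SignedUrlRequestBody {
  // Only include dynamic_variables when at least one variable is provided.
  const hasVariables =
    dynamicVariables !== undefined && Object.keys(dynamicVariables).length > 0;
  return {
    config: {
      provider: "elevenlabs",
      provider_config: {
        agent_id: agentId,
        api_key: apiKey,
        ...(hasVariables ? { dynamic_variables: dynamicVariables } : {}),
      },
    },
  };
}
```

Separating payload construction from the fetch call keeps the route handler short and lets you unit test the payload without network access.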

Step 3: Set Up the Avatar Component

The avatar uses four SDK exports working together: MascotProvider (context), MascotClient (Rive setup), MascotRive (renderer), and useMascotElevenlabs (lip-sync bridge).

First, wrap your page with the provider and client:

import {

Alignment,

Fit,

MascotClient,

MascotProvider,

MascotRive,

} from "@mascotbot-sdk/react";

export default function Home() {

return (

<MascotProvider>

<main className="w-full h-screen">

<MascotClient

src="/mascot.riv"

artboard="Character"

inputs={["is_speaking", "gesture"]}

layout={{

fit: Fit.Contain,

alignment: Alignment.BottomCenter,

}}

>

{/* MascotRive is rendered inside ElevenLabsAvatar, so it is not repeated here */}

<ElevenLabsAvatar />

</MascotClient>

</main>

</MascotProvider>

);

}

Then create the avatar component that bridges ElevenLabs voice to the animated character:

"use client";

import { useCallback, useEffect, useState } from "react";

import { useConversation } from "@elevenlabs/react";

import { MascotRive, useMascotElevenlabs } from "@mascotbot-sdk/react";

function ElevenLabsAvatar() {

const [isConnecting, setIsConnecting] = useState(false);

const [cachedUrl, setCachedUrl] = useState<string | null>(null);

// Stable config reference — required to prevent WebSocket reinit

const [lipSyncConfig] = useState({

minVisemeInterval: 60,

mergeWindow: 80,

keyVisemePreference: 0.7,

preserveSilence: true,

similarityThreshold: 0.6,

preserveCriticalVisemes: true,

criticalVisemeMinDuration: 80,

});

const conversation = useConversation({

onConnect: () => console.log("Connected"),

onDisconnect: () => console.log("Disconnected"),

onError: (error) => console.error("Error:", error),

onMessage: () => {},

});

// Bridge ElevenLabs conversation to avatar lip sync

const { isIntercepting, messageCount } = useMascotElevenlabs({

conversation,

gesture: true,

naturalLipSync: true,

naturalLipSyncConfig: lipSyncConfig,

});

// Fetch signed URL from our API route

const getSignedUrl = async (): Promise<string> => {

const response = await fetch("/api/get-signed-url", {

method: "POST",

headers: { "Content-Type": "application/json" },

body: JSON.stringify({}),

cache: "no-store",

});

if (!response.ok) throw new Error("Failed to get signed URL");

const data = await response.json();

return data.signedUrl;

};

// Pre-fetch URL on mount, refresh every 9 minutes

const fetchAndCacheUrl = useCallback(async () => {

try {

const url = await getSignedUrl();

setCachedUrl(url);

} catch (error) {

console.error("Failed to cache URL:", error);

}

}, []);

useEffect(() => {

fetchAndCacheUrl();

const interval = setInterval(fetchAndCacheUrl, 9 * 60 * 1000);

return () => clearInterval(interval);

}, [fetchAndCacheUrl]);

// Start voice conversation with avatar

const startConversation = useCallback(async () => {

try {

setIsConnecting(true);

await navigator.mediaDevices.getUserMedia({ audio: true });

const signedUrl = cachedUrl || (await getSignedUrl());

await conversation.startSession({ signedUrl });

} catch (error) {

console.error("Failed to start:", error);

} finally {

setIsConnecting(false);

}

}, [conversation, cachedUrl]);

const stopConversation = useCallback(async () => {

await conversation.endSession();

}, [conversation]);

const isConnected = conversation.status === "connected";

return (

<div className="flex flex-col items-center gap-4">

<MascotRive />

<button

onClick={isConnected ? stopConversation : startConversation}

disabled={isConnecting}

className="px-6 py-3 rounded-lg font-medium text-white bg-blue-600 hover:bg-blue-700 disabled:opacity-50"

>

{isConnecting

? "Connecting..."

: isConnected

? "End Conversation"

: "Start Conversation"}

</button>

</div>

);

}

That's the core integration. When the user clicks "Start Conversation," the avatar connects to ElevenLabs through the Mascot Bot proxy, and lip sync starts automatically.
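The component above reuses the cached URL whenever one exists. If a background refresh fails, that URL could be older than the 9-minute refresh window. The sketch below adds a freshness check, assuming a URL should not be reused beyond that window; the actual expiry of a signed URL is not stated in this tutorial, and the CachedUrl type and freshUrl helper are hypothetical.

```typescript
// Hypothetical cache entry: the signed URL plus the time it was fetched.
interface CachedUrl {
  url: string;
  fetchedAtMs: number;
}

// Same 9-minute window the component uses for its refresh interval.
const MAX_AGE_MS = 9 * 60 * 1000;

// Return the cached URL if it is still inside the window, otherwise null.
function freshUrl(
  cached: CachedUrl | null,
  nowMs: number,
  maxAgeMs: number = MAX_AGE_MS,
): string | null {
  if (cached === null) return null;
  const ageMs = nowMs - cached.fetchedAtMs;
  // A negative age means the clock moved backwards; treat that as stale too.
  return ageMs >= 0 && ageMs < maxAgeMs ? cached.url : null;
}
```

Inside startConversation this could be used as freshUrl(cached, Date.now()) ?? (await getSignedUrl()), so a stale entry falls back to a new request.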

Step 4: Configure Natural Lip Sync

The default configuration works well for most use cases. If you want to fine-tune animation behavior, here are all seven parameters:

| Parameter | Default | Range | Effect |
|---|---|---|---|
| minVisemeInterval | 60 ms | 20-120 ms | Lower = more articulated, higher = smoother |
| mergeWindow | 80 ms | 40-160 ms | Window for merging similar mouth shapes |
| keyVisemePreference | 0.7 | 0.0-1.0 | Emphasis on distinctive sounds (p, b, m, f) |
| similarityThreshold | 0.6 | 0.0-1.0 | Lower = more aggressive merging |
| preserveSilence | true | n/a | Keep natural pauses between words |
| preserveCriticalVisemes | true | n/a | Never skip characteristic sounds (u, o, l) |
| criticalVisemeMinDuration | 80 ms | n/a | Minimum time for critical mouth shapes |
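To build intuition for what a minimum interval does, here is a deliberately simplified filter that drops any mouth shape arriving too soon after the previously kept one. This is an illustration only, not the SDK's algorithm: the TimedViseme type and enforceMinInterval function are hypothetical, and the real implementation also merges similar shapes and protects critical ones.

```typescript
// Hypothetical event type for this illustration.
interface TimedViseme {
  offsetMs: number;
  visemeId: number;
}

// Keep an event only if at least minIntervalMs has passed since the last kept event.
// Assumes events are sorted by offsetMs in ascending order.
function enforceMinInterval(events: TimedViseme[], minIntervalMs: number): TimedViseme[] {
  const kept: TimedViseme[] = [];
  for (const event of events) {
    const last = kept[kept.length - 1];
    if (last === undefined || event.offsetMs - last.offsetMs >= minIntervalMs) {
      kept.push(event);
    }
  }
  return kept;
}

// Made-up input: five mouth shapes within 140 ms.
const rawEvents: TimedViseme[] = [
  { offsetMs: 0, visemeId: 1 },
  { offsetMs: 30, visemeId: 2 },
  { offsetMs: 70, visemeId: 3 },
  { offsetMs: 90, visemeId: 4 },
  { offsetMs: 140, visemeId: 5 },
];

// With a 60 ms interval, only the events at 0, 70, and 140 ms survive.
const smoothed = enforceMinInterval(rawEvents, 60);
```

A lower interval keeps more events and looks more articulated; a higher one keeps fewer and looks smoother, which matches the effect described for minVisemeInterval.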

Preset examples for common scenarios:

| Scenario | minVisemeInterval | mergeWindow | keyVisemePreference | similarityThreshold |
|---|---|---|---|---|
| Natural conversation | 60 | 80 | 0.7 | 0.6 |
| Fast/excited speech | 80 | 100 | 0.5 | 0.3 |
| Clear articulation (tutoring) | 40 | 50 | 0.9 | 0.8 |
| Subtle movement | 100 | 150 | 0.3 | 0.2 |

Important: Use useState for the config object to maintain a stable reference. Without this, the WebSocket reinitializes after the first audio chunk, causing animation to stop mid-conversation.
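The presets and ranges from the tables can be captured as typed constants, with a clamp that keeps hand-tuned values inside the documented ranges. This is a sketch: the LipSyncTuning type, the preset names, and the clampTuning helper are hypothetical, and only the four numeric parameters that have a documented range are covered.

```typescript
// The four numeric parameters that have a documented range.
interface LipSyncTuning {
  minVisemeInterval: number;
  mergeWindow: number;
  keyVisemePreference: number;
  similarityThreshold: number;
}

type PresetName = "natural" | "fast" | "clear" | "subtle";

// Documented [min, max] range for each parameter.
const RANGES: Record<keyof LipSyncTuning, [number, number]> = {
  minVisemeInterval: [20, 120],
  mergeWindow: [40, 160],
  keyVisemePreference: [0, 1],
  similarityThreshold: [0, 1],
};

// Values copied from the preset table.
const PRESETS: Record<PresetName, LipSyncTuning> = {
  natural: { minVisemeInterval: 60, mergeWindow: 80, keyVisemePreference: 0.7, similarityThreshold: 0.6 },
  fast: { minVisemeInterval: 80, mergeWindow: 100, keyVisemePreference: 0.5, similarityThreshold: 0.3 },
  clear: { minVisemeInterval: 40, mergeWindow: 50, keyVisemePreference: 0.9, similarityThreshold: 0.8 },
  subtle: { minVisemeInterval: 100, mergeWindow: 150, keyVisemePreference: 0.3, similarityThreshold: 0.2 },
};

// Pull every value back into its documented range.
function clampTuning(tuning: LipSyncTuning): LipSyncTuning {
  const result: LipSyncTuning = { ...tuning };
  for (const key of Object.keys(RANGES) as (keyof LipSyncTuning)[]) {
    const [min, max] = RANGES[key];
    result[key] = Math.min(max, Math.max(min, tuning[key]));
  }
  return result;
}
```

To use a preset in the component, spread it together with the three remaining options (preserveSilence, preserveCriticalVisemes, criticalVisemeMinDuration) into the object passed to useState, so the reference stays stable.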

Step 5: Test Your Avatar

Run your development server:

npm run dev

Open localhost:3000, click "Start Conversation," and grant microphone access. You should see:

- The avatar loads and displays your mascot character

- When you speak, the AI responds with synchronized lip movement

- Gestures trigger automatically during responses

Debugging checklist:

- isIntercepting: true means the WebSocket proxy is working

- messageCount incrementing means viseme data is flowing

- No mouth movement? Verify you're using the signed URL from /api/get-signed-url, not a direct ElevenLabs WebSocket URL

- Avatar not loading? Check that mascot.riv is in your public/ folder
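The "no mouth movement" check can be automated with a small guard that runs before the session starts. This sketch assumes the signed URL always has the form shown in Step 2 (wss scheme, api.mascot.bot host, a token query parameter); isMascotProxyUrl is a hypothetical helper, so adjust it if your URL differs.

```typescript
// Host taken from the example URL in Step 2; an assumption, not a documented constant.
const PROXY_HOST = "api.mascot.bot";

// Return true only for URLs that look like a Mascot Bot proxy signed URL.
function isMascotProxyUrl(candidate: string): boolean {
  try {
    const url = new URL(candidate);
    return (
      url.protocol === "wss:" &&
      url.hostname === PROXY_HOST &&
      url.searchParams.has("token")
    );
  } catch {
    // new URL throws on strings that are not valid absolute URLs.
    return false;
  }
}
```

Logging a warning when isMascotProxyUrl(signedUrl) is false makes a misrouted connection visible immediately, instead of showing up as a silent avatar.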

Choose Your Mascot: Three Paths

Not everyone needs the same level of customization. Here are your options:

Path 1: Ready-to-Use Mascots

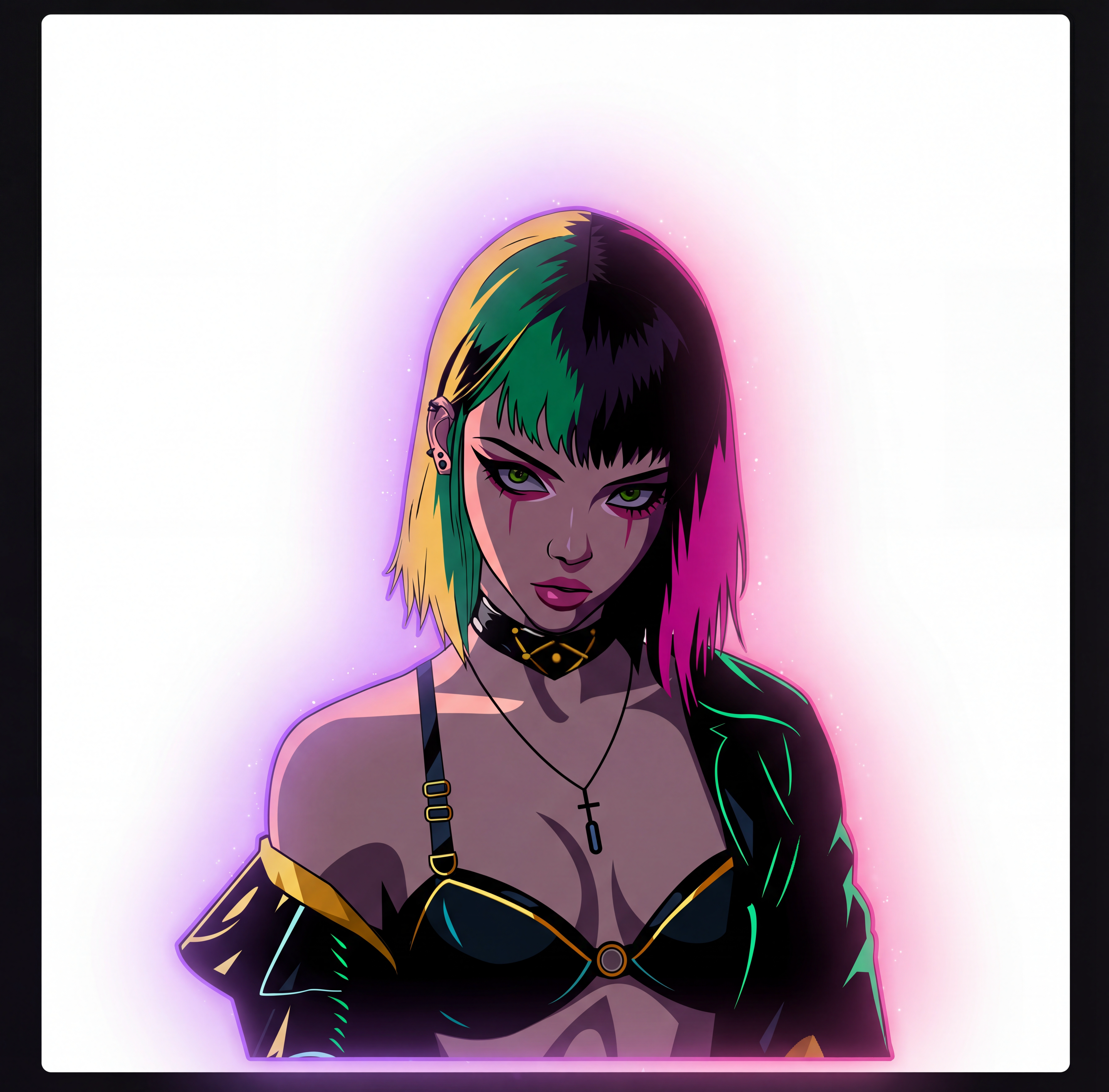

Pick from four characters available out of the box:

| Character | Description | Best for |
|---|---|---|
| Cat | Playful black feline with bright yellow eyes | Gaming, tech brands, creative agencies |
| Panda | Friendly futuristic panda with robotic edge | Family-friendly, AI products, education |
| Girl | Stylish cyberpunk-inspired young woman | Fashion, entertainment, futuristic products |
| Robot | Retro-modern floating robot with expressive screen | Tech startups, e-learning, support |

Choose one, get the .riv file with your subscription, drop it in public/, and you're live in 5 minutes.

Path 2: DIY Custom Character

Want your own brand character? Here's the process:

- Design: Use Nanonan to generate character concepts from text prompts

- Animate: Create or commission a Rive file with is_speaking and gesture inputs

- Upload: Place the .riv file in your project

- Configure: Update MascotClient with your artboard name and inputs

Best for teams that want a unique brand identity. Budget 1-2 weeks for character design and animation.

Path 3: Custom Character (Done-for-You Service)

The Mascot Bot team handles everything:

- Character design based on your brand guidelines

- Professional Rive animation with all required states

- Lip-sync mapping and SDK integration support

- Delivery: 2-4 weeks

Best for companies that want a hands-off solution. Contact the Mascot Bot team with your brand guidelines.

When NOT to Use This

This approach is not the right tool for every job. Other products fit better in these cases:

- Pre-rendered marketing videos — Platforms like HeyGen generate polished video content, but expect to pay $100+/hour and accept 2-5 second latency. Not suitable for real-time interaction.

- Photo-to-video avatars — Services like D-ID animate real photos, but the uncanny valley effect is noticeable and they don't support custom 2D brand characters.

- 3D photorealistic avatars — Enterprise platforms like Synthesia offer pre-rendered 3D avatars at $1,000+/month. Impressive for video production, but not real-time and not interactive.

Mascot Bot is purpose-built for real-time 2D animated characters with lip sync. If that's what you need, nothing else in the current market does it as well.

FAQ

Does ElevenLabs have avatars?

ElevenLabs focuses on voice AI and does not provide avatar rendering natively. To add an ElevenLabs avatar, use a third-party SDK like Mascot Bot, which provides real-time lip-synced 2D characters that integrate directly with the ElevenLabs WebSocket API.

How does lip sync work without native viseme data?

The Mascot Bot proxy sits between your app and ElevenLabs. It relays all voice traffic while analyzing audio in real-time to generate viseme (mouth shape) data for avatar lip sync. This data is injected into the WebSocket stream alongside the original audio. Processing adds less than 1ms of latency.

What latency should I expect?

Audio-to-visual latency is under 50ms. The Rive animation engine renders at up to 120fps with less than 1% CPU overhead. Total end-to-end latency depends on your network conditions and ElevenLabs response time, but the avatar adds negligible overhead.
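To relate the two numbers above: at 120fps one frame lasts about 8.3ms, so a 50ms budget corresponds to at most six frames. The arithmetic is sketched below; the function names are illustrative.

```typescript
// Duration of a single frame in milliseconds at a given frame rate.
function frameDurationMs(fps: number): number {
  return 1000 / fps;
}

// Number of whole frames that fit inside a latency budget.
function framesWithin(latencyMs: number, fps: number): number {
  return Math.floor((latencyMs * fps) / 1000);
}

const frameAt120 = frameDurationMs(120); // about 8.33 ms
const framesIn50ms = framesWithin(50, 120); // 6 frames
```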

Can I use my own backend or LLM?

Yes. Mascot Bot handles only the visual layer — avatar rendering and lip sync. Your voice AI provider (ElevenLabs), LLM, and backend logic remain unchanged. The SDK integrates at the WebSocket transport level.

What browsers are supported?

Any modern browser with WebGL2 support: Chrome 56+, Firefox 51+, Safari 15+, Edge 79+. Microphone access permission is required for voice interaction.
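If you want to show a fallback instead of a blank canvas on unsupported browsers, a feature check along these lines may help. This is a sketch: supportsWebGL2 and the CanvasLike type are hypothetical, and the canvas is passed in as a parameter so the function can also be exercised outside a browser.

```typescript
// Structural type covering the one canvas method this check needs.
interface CanvasLike {
  getContext(contextId: string): unknown;
}

// Return true when the canvas can create a WebGL2 rendering context.
function supportsWebGL2(canvas: CanvasLike): boolean {
  try {
    // Browsers return null from getContext when the context type is unsupported.
    return canvas.getContext("webgl2") != null;
  } catch {
    return false;
  }
}
```

In the browser you would call supportsWebGL2(document.createElement("canvas")) before mounting the avatar, and render a static image or a text-only interface when it returns false.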

Next Steps

You've added a lip-synced animated avatar to your ElevenLabs voice agent. Your users now have a face to talk to.

Resources:

- elevenlabs-avatar: full example repository for this tutorial, an ElevenLabs voice agent with a real-time lip-synced Mascot Bot avatar. Clone and run locally.

- SDK documentation — complete API reference

- Ready-to-use mascots gallery — browse available characters

- Get your API key — sign up at the Mascot Bot dashboard