Every time a user navigates to a new page, the voice agent dies. The session terminates, the conversation context is gone, and the user has to start over. If you've shipped a chat or voice AI chatbot inside a multi-page React app, you know the bug.

Our chatbot is 98% done. The backend works perfectly. But the user is mid-question when they click a link, and the whole thing resets.

This tutorial walks through the exact pattern we shipped for a team stuck on this bug: a persistent voice AI agent in React with ElevenLabs Conversational AI that survives navigation, fills forms by voice, and acknowledges manual edits inside the same conversation. Every code block is real, pulled from the live template at react-website-demo-mascotbot.vercel.app and the public repo at github.com/mascotbot-templates/react-website-demo.

A voice AI agent is a real-time conversational interface where users speak instead of type, and the assistant calls registered tool functions to drive UI actions. In React, the production pattern uses @elevenlabs/react's useConversation hook plus a persistent provider so the WebRTC session survives page navigation.

You'll cover, in order: the persistent widget pattern, three real client tools, voice-to-form sync, an iteration loop that lets you test without a microphone, and the eight production pitfalls we hit so you don't have to.

The Problem — "My Chatbot Dies on Every Page Reload"

In legacy stacks, full-page reloads tear down the WebSocket or WebRTC session that powers your assistant. In a React app without a persistent provider tree, App Router navigation does the same thing: the widget unmounts, the session closes, the conversation context vanishes. The user has to re-authorize the mic, re-explain themselves, and repeat the question they were halfway through.

Existing tutorials don't solve this:

- LiveKit's React quickstart and getstream.io's tutorial are SPA-shaped from the start — they never address what happens when you navigate to a NEW route.

- The ElevenLabs React SDK reference covers the useConversation hook but doesn't show the full provider hierarchy that keeps it mounted across routes.

- Even the freshest vendor announcements ship the hook without the persistence pattern.

The fix is one structural decision: mount the voice widget OUTSIDE {children} in your root layout, wrapped in a client-component provider that owns the conversation session. Everything else in this tutorial — the tools, the form sync, the deploy notes — assumes that decision is in place.

What You'll Build

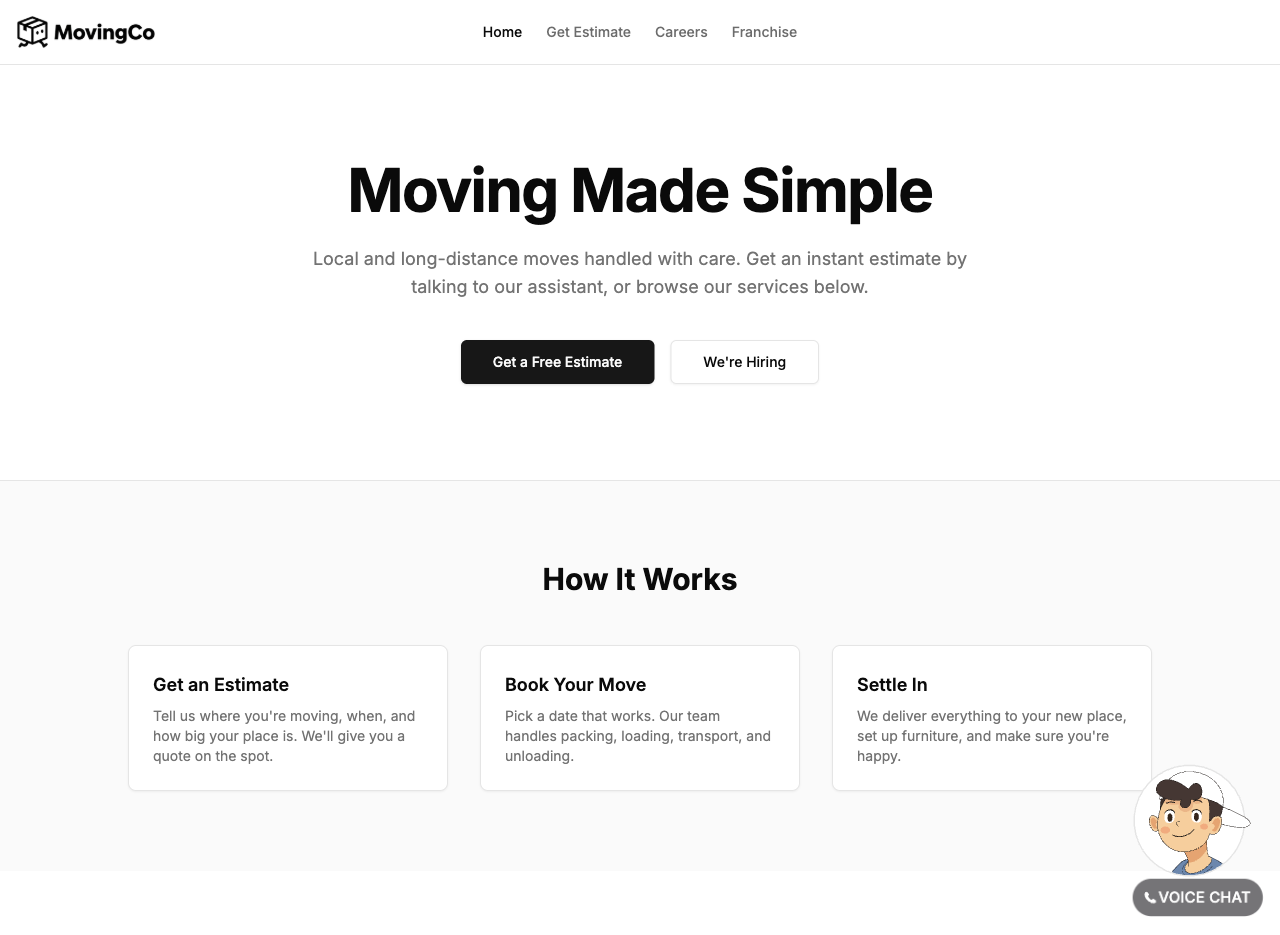

A Next.js 16 app with four routes (/, /estimate, /careers, /franchise), one always-mounted voice widget, three client tools, and live form autofill on the estimate page.

The example brand is MovingCo — a fictional moving-services company we built as a reference domain. Swap the copy and form fields to match your business without touching the agent plumbing.

Three flows:

- Moving estimate (primary) — voice fills 8 fields and submits the form

- Careers (secondary) — voice navigates to job listings and stays out of the way

- Franchise (tertiary) — voice navigates and answers brief questions

By the end of this guide, you'll have:

- A voice agent that stays connected across all routes (zero session drops)

- "I need an estimate" → automatic navigation to /estimate

- 8 form fields that fill in real time as the user speaks

- Manual overrides the agent acknowledges within ~1.5 seconds

- One CLI command to spin up the entire ElevenLabs agent + 3 tools

I just want to try it. Show me the fastest path to a working demo.

Try the live version before you build it: react-website-demo-mascotbot.vercel.app. Give it microphone access and say "I need an estimate from Brooklyn to Boston, July 15th, two-bedroom apartment." — watch the form fill itself.

mascotbot-templates/react-website-demo

Next.js 16 template with a persistent ElevenLabs voice widget, three client tools (navigateTo, updateEstimateField, submitEstimate) wired to a React Context reducer + Router, and bidirectional voice↔form sync. Production-ready — clone, set three env vars, deploy.

Time required: 30–45 minutes assuming accounts already exist.

Prerequisites

- Node.js 20.9+ (the minimum Next.js 16 supports) and pnpm

- A free ElevenLabs account (elevenlabs.io) — you'll need an API key

- A MascotBot account (app.mascot.bot) for the animated avatar; the SDK ships as a .tgz from the dashboard, not via npm (an intentional distribution policy, explained in Step 1)

- A microphone

- Comfort with: Next.js App Router, React 19 hooks, TypeScript

Step 1 — Clone the Template & Install Private Files

This is the fastest path to a runnable repo. Clone, drop in two private files from your MascotBot dashboard, install.

```bash
git clone https://github.com/mascotbot-templates/react-website-demo.git
cd react-website-demo
```

Two private files come from the MascotBot dashboard rather than npm:

```bash
# MascotBot SDK: download from your Mascot Bot dashboard
cp /path/to/mascotbot-sdk-react-0.1.9.tgz ./

# Rive animation file: download from your Mascot Bot dashboard
cp /path/to/mascot_widget.riv ./public/
```

Then:

```bash
pnpm install
```

The .tgz is referenced in package.json as "@mascotbot-sdk/react": "file:./mascotbot-sdk-react-0.1.9.tgz". We ship the SDK this way so we can rev versions for enterprise customers without npm-publish friction. The .riv is the Widget-type artboard the avatar canvas loads. For the deeper SDK install path, see the MascotBot SDK quick-start walkthrough.

pnpm dev will boot the app on localhost:3000. The widget appears in the bottom right — but it can't connect yet. That's the next step.

Step 2 — Spin Up the ElevenLabs Agent in One Command

The template ships a scripts/setup-agent.mjs so you don't have to click through the ElevenLabs Conversational AI dashboard manually. One command creates the agent, registers the three client tools, and prints the new agent ID:

```bash
ELEVENLABS_API_KEY=sk_your_key node scripts/setup-agent.mjs
```

What that script does, in one sentence: it creates the three tools (navigateTo, updateEstimateField, submitEstimate) from the JSON files in tool_configs/, links them to a new agent based on agent_configs/Website-Demo-Assistant.json, and prints ELEVENLABS_AGENT_ID=agent_xxx for you to paste into .env.local:

```bash
MASCOT_BOT_API_KEY=your_mascotbot_key
ELEVENLABS_API_KEY=sk_your_elevenlabs_key
ELEVENLABS_AGENT_ID=agent_xxx
```

The agent itself is intentionally small. The system prompt enforces five rules: ALWAYS call tools (don't describe actions), one navigation per topic change, demo-awareness ("you don't have real company knowledge"), MAX 2 sentences per response, and a hard "STOP after submitEstimate" boundary. The LLM is gpt-5.2 at temperature 0.3; the TTS model is eleven_v3_conversational.

Why GPT-5.2? Our first attempt used Gemini 2.5 Flash, and in our testing for this use case the agent described actions instead of calling tools: it would say "I'll fill in your name now" without firing updateEstimateField. Switching to GPT-5.2 made tool-calling reliable. Your mileage may vary per domain; if you change models, run the iteration loop in Step 6 against your test_configs first.

Prefer to build the agent in the dashboard? ElevenLabs' own "Building your first conversational AI agent" walkthrough (April 7, 2026) covers that path.

Step 3 — Wire useConversation and the Signed-URL Flow

The whole React integration is a single hook plus a server-side proxy. Here's the hook, from src/components/widget.tsx:

```tsx
import { useConversation } from "@elevenlabs/react";

// Inside the PersistentWidget component: onCallStateChange and customInputs
// (the Rive avatar's inputs) come from the surrounding component scope.
const conversation = useConversation({
  clientTools: {
    // see Step 4 for the full 3-tool map
    navigateTo: (params) => { /* ... */ },
    updateEstimateField: (params) => { /* ... */ },
    submitEstimate: async () => { /* ... */ },
  },
  onConnect: () => {
    onCallStateChange("connected");
    // Tell the avatar a call is live
    if (customInputs?.inCall) customInputs.inCall.value = true;
  },
  onDisconnect: () => {
    onCallStateChange("idle");
    if (customInputs?.inCall) customInputs.inCall.value = false;
  },
  onError: (error) => {
    console.error("[Widget] Error:", error);
    onCallStateChange("idle");
  },
});
```

clientTools is where your code meets the agent. onConnect/onDisconnect flip a boolean on the Rive avatar so its body language matches the session's connection state.

Your API key never reaches the browser. A private ElevenLabs agent needs a signed URL minted server-side, and in App Router a route.ts POST handler is the right place to do it. Here's src/app/api/get-signed-url/route.ts in full:

```ts
import { NextRequest, NextResponse } from "next/server";

export async function POST(request: NextRequest) {
  try {
    const body = await request.json();
    const { dynamicVariables } = body;

    // Use Mascot Bot proxy endpoint for automatic viseme injection
    const response = await fetch("https://api.mascot.bot/v1/get-signed-url", {
      method: "POST",
      headers: {
        Authorization: `Bearer ${process.env.MASCOT_BOT_API_KEY}`,
        "Content-Type": "application/json",
      },
      body: JSON.stringify({
        config: {
          provider: "elevenlabs",
          provider_config: {
            agent_id: process.env.ELEVENLABS_AGENT_ID,
            api_key: process.env.ELEVENLABS_API_KEY,
            ...(dynamicVariables && { dynamic_variables: dynamicVariables }),
          },
        },
      }),
      cache: "no-store",
    });

    if (!response.ok) throw new Error("Failed to get signed URL");

    const data = await response.json();
    return NextResponse.json({ signedUrl: data.signed_url });
  } catch (error) {
    console.error("Error fetching signed URL:", error);
    return NextResponse.json({ error: "Failed to generate signed URL" }, { status: 500 });
  }
}

export const dynamic = "force-dynamic";
```

Two anti-cache lines (cache: "no-store" on the fetch, plus export const dynamic = "force-dynamic") keep Vercel's edge from serving the same signed URL to two clients. Without them, the second startSession will 401; we hit this in production.

We proxy through MascotBot rather than calling ElevenLabs directly so visemes are auto-injected into the audio stream, which is what lets the Rive avatar lip-sync without any client-side audio analysis. If you don't need the avatar layer, skip the proxy and call ElevenLabs' /v1/convai/conversation/get-signed-url endpoint directly (a GET with agent_id as a query parameter and your key in the xi-api-key header); it returns the same signed_url field the route above reads.

The widget caches the URL and refreshes it well before expiry. ElevenLabs' published docs don't pin an exact TTL for signed URLs in the React SDK, so we refresh defensively every 9 minutes (the template's setInterval(..., 9 * 60 * 1000)) — short enough that an expired URL never reaches startSession. On the call-start path, we parallelize the mic prompt and the URL fetch with Promise.all to save 100–400 ms on first load.
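The refresh-and-parallelize idea can be sketched without React. This is a minimal sketch, not the template's code: SignedUrlCache, prepareCall, and the injected fetchUrl/now functions are hypothetical names. Instead of the template's setInterval timer, it checks the URL's age on read and refetches once the URL is 9 minutes old; concurrent callers share one in-flight request.

```typescript
// Hypothetical sketch: an age-checked signed-URL cache.
// (The template refreshes on a setInterval timer instead.)
const REFRESH_MS = 9 * 60 * 1000; // same 9-minute window the template uses

type FetchSignedUrl = () => Promise<string>;

class SignedUrlCache {
  private url: string | null = null;
  private fetchedAt = 0;
  private inflight: Promise<string> | null = null;
  private readonly fetchUrl: FetchSignedUrl;
  private readonly now: () => number;

  constructor(fetchUrl: FetchSignedUrl, now: () => number = Date.now) {
    this.fetchUrl = fetchUrl;
    this.now = now;
  }

  // Return the cached URL while it is younger than REFRESH_MS; otherwise fetch a new one.
  get(): Promise<string> {
    if (this.url !== null && this.now() - this.fetchedAt < REFRESH_MS) {
      return Promise.resolve(this.url);
    }
    // Concurrent callers share a single request.
    if (this.inflight === null) {
      this.inflight = this.load();
    }
    return this.inflight;
  }

  // Drop the cached URL, e.g. after a session has consumed it.
  invalidate(): void {
    this.url = null;
  }

  private async load(): Promise<string> {
    try {
      const url = await this.fetchUrl();
      this.url = url;
      this.fetchedAt = this.now();
      return url;
    } finally {
      this.inflight = null;
    }
  }
}

// Call-start path: ask for the microphone and the signed URL at the same time.
async function prepareCall(
  cache: SignedUrlCache,
  requestMic: () => Promise<unknown>,
): Promise<string> {
  const [, url] = await Promise.all([requestMic(), cache.get()]);
  return url;
}
```

In the browser, fetchUrl would POST to /api/get-signed-url and read signedUrl from the JSON, and requestMic could wrap navigator.mediaDevices.getUserMedia({ audio: true }). Whether a signed URL can be reused for a second session is an assumption to check; call invalidate() after startSession if you treat URLs as single-use.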

WebRTC vs WebSocket — quick sidebar

WebRTC is the default in @elevenlabs/react, delivers under 300 ms end-to-end latency, and handles jitter buffering. WebSocket is simpler for server-to-server transcript streaming but adds 100–200 ms from TCP head-of-line blocking. For a browser voice UI, choose WebRTC; for programmatic server-to-server transcripts, WebSocket is acceptable.

The official docs put it plainly: "Voice conversations use WebRTC and text-only conversations use WebSocket by default." ElevenLabs' WebRTC migration announcement (Angelo Giacco, July 2025) frames this as best-in-class echo cancellation and background noise removal "battle-tested across billions of video calls." For latency math, ElevenLabs' Flash model adds about 75 ms inference; network round-trip contributes another 20–200 ms depending on geography.
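To see why the transport matters, here is the arithmetic using only the numbers quoted in this sidebar. It is a rough sketch: the stage list is incomplete (speech recognition and LLM time are not included), and the 500 ms target and the Stage/budget names are illustrative assumptions.

```typescript
// Back-of-envelope latency budget built only from the figures quoted above.
interface Stage {
  name: string;
  minMs: number;
  maxMs: number;
}

interface Budget {
  minMs: number;
  maxMs: number;
  worstCaseHeadroomMs: number; // what is left for stages not listed (ASR, LLM, rendering)
}

function budget(stages: Stage[], targetMs: number): Budget {
  const minMs = stages.reduce((sum, s) => sum + s.minMs, 0);
  const maxMs = stages.reduce((sum, s) => sum + s.maxMs, 0);
  return { minMs, maxMs, worstCaseHeadroomMs: targetMs - maxMs };
}

const TARGET_MS = 500; // illustrative sub-500 ms goal

const webrtc: Stage[] = [
  { name: "Flash model inference", minMs: 75, maxMs: 75 },
  { name: "network round-trip", minMs: 20, maxMs: 200 },
];

// Same pipeline plus the 100–200 ms TCP head-of-line penalty quoted for WebSocket.
const websocket: Stage[] = [
  ...webrtc,
  { name: "TCP head-of-line blocking", minMs: 100, maxMs: 200 },
];

const webrtcBudget = budget(webrtc, TARGET_MS); // { minMs: 95, maxMs: 275, worstCaseHeadroomMs: 225 }
const websocketBudget = budget(websocket, TARGET_MS); // { minMs: 195, maxMs: 475, worstCaseHeadroomMs: 25 }
```

On these numbers, WebSocket leaves about 25 ms in the worst case for everything else in the pipeline, while WebRTC leaves about 225 ms.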

If sub-500 ms latency is a hard requirement, WebRTC is what gets you there. ElevenLabs' latency concepts doc has the full math.

If you want voice + avatar in one walkthrough, the ElevenLabs avatar setup walkthrough is a shorter companion article.

Step 4 — Wire Three Real Client Tools to React Context

This is the section nobody else has. Most tutorials show one toy get_weather tool. A real product needs three tools that share state — one to navigate, one to update a form field, one to submit. Here's how they wire to a useReducer Context plus the Next.js Router.

ElevenLabs client tools are typed callbacks the agent invokes during a conversation. The agent calls them by name with JSON arguments; your handler runs in the browser and dispatches React state changes. The MovingCo demo registers three: navigateTo and updateEstimateField are fire-and-forget (response_timeout_secs: 1), while submitEstimate waits up to 5 seconds because its return value feeds the agent's reply.

Here's the clientTools map from widget.tsx:

```tsx
// Inside the PersistentWidget component: dispatch is the DemoProvider reducer's
// dispatch (via useDemo()); EstimateFormData comes from src/lib/demo-context.tsx.
clientTools: {
  navigateTo: (params: { page: string }) => {
    console.log("[Widget] navigateTo:", params.page);
    dispatch({ type: "NAVIGATE_TO", page: params.page });
  },

  updateEstimateField: (params: { field: string; value: string }) => {
    const validFields: (keyof EstimateFormData)[] = [
      "name", "email", "phone", "origin", "destination",
      "moveDate", "homeSize", "specialItems",
    ];
    // Drop any field name the agent invents
    if (validFields.includes(params.field as keyof EstimateFormData)) {
      dispatch({
        type: "UPDATE_ESTIMATE_FIELD",
        field: params.field as keyof EstimateFormData,
        value: params.value,
        source: "agent",
      });
    }
  },

  submitEstimate: async () => {
    dispatch({ type: "SUBMIT_ESTIMATE" });
    // The returned string goes back to the agent and shapes its next utterance
    return "Estimate submitted successfully! The user can see a confirmation on their screen.";
  },
},
```

Three things matter here. updateEstimateField validates against the validFields allow-list, so field names the agent hallucinates get dropped. submitEstimate is the one tool that returns a string, because the agent's next utterance incorporates the confirmation (expects_response: true, response_timeout_secs: 5). The other two are pure dispatchers.
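The allow-list can be pushed one step further so the handler also survives malformed arguments, such as the {} payloads described in Lessons Learned. This is a hypothetical hardening, not the template's code: ESTIMATE_FIELDS, isEstimateField, and makeUpdateEstimateField are illustrative names. The as const array lets TypeScript derive the field union from the list itself, so no casts are needed.

```typescript
// Hypothetical hardening of updateEstimateField: treat agent arguments as untrusted JSON.
const ESTIMATE_FIELDS = [
  "name", "email", "phone", "origin", "destination",
  "moveDate", "homeSize", "specialItems",
] as const;

type EstimateField = (typeof ESTIMATE_FIELDS)[number];

// Mirrors the UPDATE_ESTIMATE_FIELD member of DemoAction in demo-context.tsx.
type UpdateFieldAction = {
  type: "UPDATE_ESTIMATE_FIELD";
  field: EstimateField;
  value: string;
  source: "agent" | "user";
};

function isEstimateField(x: unknown): x is EstimateField {
  return typeof x === "string" && (ESTIMATE_FIELDS as readonly string[]).includes(x);
}

function makeUpdateEstimateField(dispatch: (action: UpdateFieldAction) => void) {
  return (params: unknown): void => {
    // null or non-object arguments are ignored rather than crashing the handler.
    if (typeof params !== "object" || params === null) return;
    const { field, value } = params as { field?: unknown; value?: unknown };
    // Unknown field names, missing keys ({}), and non-string values are dropped.
    if (!isEstimateField(field) || typeof value !== "string") return;
    dispatch({ type: "UPDATE_ESTIMATE_FIELD", field, value, source: "agent" });
  };
}
```

In the widget, clientTools.updateEstimateField would then be makeUpdateEstimateField(dispatch).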

The shared state lives in src/lib/demo-context.tsx. The crucial detail is the source discriminator on the field-update action:

```ts
type DemoAction =
  | { type: "UPDATE_ESTIMATE_FIELD"; field: keyof EstimateFormData; value: string; source: "agent" | "user" }
  | { type: "SUBMIT_ESTIMATE" }
  | { type: "RESET_ESTIMATE" }
  | { type: "NAVIGATE_TO"; page: string }
  | { type: "CLEAR_NAVIGATION" };
```

source: "agent" | "user" is the seed for bidirectional sync (Step 5). Without it, a user typing into a field would look identical to the agent setting it, and the form would never know which side of the conversation owns the value.
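A reducer for that union might look like the following. It is a sketch under assumptions: the DemoState shape (including the per-field lastSource map) and the names demoReducer, initialState, and EMPTY_ESTIMATE are hypothetical, and the block restates EstimateFormData and DemoAction so it compiles on its own.

```typescript
// Hypothetical reducer for the DemoAction union; the state shape is an assumption.
interface EstimateFormData {
  name: string; email: string; phone: string; origin: string;
  destination: string; moveDate: string; homeSize: string; specialItems: string;
}

type Source = "agent" | "user";

type DemoAction =
  | { type: "UPDATE_ESTIMATE_FIELD"; field: keyof EstimateFormData; value: string; source: Source }
  | { type: "SUBMIT_ESTIMATE" }
  | { type: "RESET_ESTIMATE" }
  | { type: "NAVIGATE_TO"; page: string }
  | { type: "CLEAR_NAVIGATION" };

interface DemoState {
  estimate: EstimateFormData;
  lastSource: Partial<Record<keyof EstimateFormData, Source>>; // who wrote each field last
  submitted: boolean;
  navigationTarget: string | null; // consumed by NavigationHandler
}

const EMPTY_ESTIMATE: EstimateFormData = {
  name: "", email: "", phone: "", origin: "",
  destination: "", moveDate: "", homeSize: "", specialItems: "",
};

const initialState: DemoState = {
  estimate: EMPTY_ESTIMATE,
  lastSource: {},
  submitted: false,
  navigationTarget: null,
};

function demoReducer(state: DemoState, action: DemoAction): DemoState {
  switch (action.type) {
    case "UPDATE_ESTIMATE_FIELD":
      // Record the value and which side of the conversation wrote it.
      return {
        ...state,
        estimate: { ...state.estimate, [action.field]: action.value },
        lastSource: { ...state.lastSource, [action.field]: action.source },
      };
    case "SUBMIT_ESTIMATE":
      return { ...state, submitted: true };
    case "RESET_ESTIMATE":
      return { ...state, estimate: EMPTY_ESTIMATE, lastSource: {}, submitted: false };
    case "NAVIGATE_TO":
      return { ...state, navigationTarget: action.page };
    case "CLEAR_NAVIGATION":
      return { ...state, navigationTarget: null };
    default: {
      // Compile-time error if a new action type is added but not handled.
      const unreachable: never = action;
      return unreachable;
    }
  }
}
```

Inside a provider this would be wired with React's useReducer(demoReducer, initialState).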

Navigation gets its own 12-line bridge: NavigationHandler watches state.navigationTarget and calls router.push():

```tsx
"use client";

import { useEffect } from "react";
import { useRouter } from "next/navigation";
import { useDemo } from "@/lib/demo-context";

export function NavigationHandler() {
  const router = useRouter();
  const { state, dispatch } = useDemo();

  useEffect(() => {
    if (state.navigationTarget) {
      router.push(state.navigationTarget);
      dispatch({ type: "CLEAR_NAVIGATION" });
    }
  }, [state.navigationTarget, router, dispatch]);

  return null;
}
```

Zero JSX, and no router import in the widget. Because NavigationHandler lives inside the provider tree, it has router access and survives every route change.
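NavigationHandler pushes whatever string the agent sends. If you want a client-side safety net against odd page names or repeat navigations to the page the user is already on (the "navigated to the same page 5 times" pitfall in Lessons Learned was fixed in the prompt instead), a guard like this could run before router.push. It is a hypothetical sketch: ROUTES mirrors the four routes in this template, and normalizePage/navigationToPerform are illustrative names.

```typescript
// Hypothetical guard between the agent's navigateTo argument and router.push.
const ROUTES = ["/", "/estimate", "/careers", "/franchise"] as const;
type Route = (typeof ROUTES)[number];

// Accept "estimate", "/estimate", or " /Estimate/ " as "/estimate"; unknown pages map to null.
function normalizePage(page: string): Route | null {
  const trimmed = page.trim().toLowerCase().replace(/^\/+|\/+$/g, "");
  const candidate = `/${trimmed}`;
  return (ROUTES as readonly string[]).includes(candidate) ? (candidate as Route) : null;
}

// Navigate only when the target is known and differs from the current path.
function navigationToPerform(currentPath: string, requested: string): Route | null {
  const target = normalizePage(requested);
  if (target === null || target === currentPath) return null;
  return target;
}
```

In NavigationHandler, currentPath could come from usePathname() in next/navigation; when navigationToPerform returns null, skip router.push and just dispatch CLEAR_NAVIGATION.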

Fire-and-forget timeout pitfall. navigateTo and updateEstimateField use expects_response: false with response_timeout_secs: 1; submitEstimate uses expects_response: true with response_timeout_secs: 5. The default response_timeout_secs is 30: leave it on the default with expects_response: true, and a slow handler will hang the entire conversation. This is the most common production gotcha; we hit it in our testing and now treat fire-and-forget as the default.
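A small check can keep that hang from sneaking back in when someone adds a fourth tool. This sketch assumes each tool config carries the expects_response and response_timeout_secs fields named above and uses the 30-second default quoted in this section; ClientToolConfig, riskyTools, and the 5-second threshold are hypothetical.

```typescript
// Assumed subset of a client-tool config; field names follow this section.
interface ClientToolConfig {
  name: string;
  expects_response: boolean;
  response_timeout_secs?: number;
}

const DEFAULT_RESPONSE_TIMEOUT_SECS = 30; // default quoted above

function effectiveTimeoutSecs(tool: ClientToolConfig): number {
  return tool.response_timeout_secs ?? DEFAULT_RESPONSE_TIMEOUT_SECS;
}

// Names of tools that make the agent wait longer than maxBlockingSecs for a reply.
function riskyTools(tools: ClientToolConfig[], maxBlockingSecs = 5): string[] {
  return tools
    .filter((tool) => tool.expects_response && effectiveTimeoutSecs(tool) > maxBlockingSecs)
    .map((tool) => tool.name);
}

// The template's three tools, as described above.
const templateTools: ClientToolConfig[] = [
  { name: "navigateTo", expects_response: false, response_timeout_secs: 1 },
  { name: "updateEstimateField", expects_response: false, response_timeout_secs: 1 },
  { name: "submitEstimate", expects_response: true, response_timeout_secs: 5 },
];
```

You could run riskyTools over the parsed tool_configs/*.json files in CI and fail the build when it returns anything; the file-reading part is left out here.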

The Persistent-Widget Pattern (the page-reload fix)

A persistent voice widget mounts the ElevenLabs conversation session inside a client-component provider that lives OUTSIDE {children} in your root layout. Because the widget never unmounts during route changes, the WebRTC session survives navigation. In Next.js App Router, this means a Providers boundary above {children} with the widget rendered as a sibling.

The entire pattern fits in 21 lines (src/components/providers.tsx):

```tsx
"use client";

import dynamic from "next/dynamic";
import { DemoProvider } from "@/lib/demo-context";
import { NavigationHandler } from "@/components/navigation-handler";

const PersistentWidget = dynamic(
  () => import("@/components/widget").then((m) => ({ default: m.PersistentWidget })),
  { ssr: false }
);

export function Providers({ children }: { children: React.ReactNode }) {
  return (
    <DemoProvider>
      <NavigationHandler />
      {children}
      <PersistentWidget />
    </DemoProvider>
  );
}
```

{children} is swapped on every route change; <PersistentWidget /> stays mounted. Because it never unmounts, the useConversation hook inside the widget keeps its live session through every navigation. That's the whole feature.

Two details make it work. First, the root layout.tsx is a Server Component wrapping this Client <Providers> — the canonical App Router pattern. Second, dynamic(..., { ssr: false }) is non-negotiable for the widget: Rive runs on a WebGL2 canvas, which has no SSR equivalent. Skipping { ssr: false } produces a hydration mismatch + a console error on first load; it also defers loading the ~180 KB Rive runtime until the widget actually mounts.

Common mistake to avoid: putting <PersistentWidget /> inside a route-level page.tsx. Result: every route change tears it down. The widget MUST be a sibling of {children} in the root provider tree — not a child of any single route.

If you've been on @elevenlabs/react for a while, note that v1.0.0 introduced ConversationProvider as a required ancestor for all conversation hooks — the release notes cover the migration. The template pins ^0.5.0 and the pattern above still works there; if you're starting fresh, pull the latest and wrap accordingly.

Step 5 — Bidirectional Form Sync with updateEstimateField + sendContextualUpdate

Voice fills the form. The user types over a field. The agent re-asks. The illusion breaks. This is the most common voice-UI bug in production — and the fix is one underused API.

sendContextualUpdate lets your React UI inject context into a live ElevenLabs conversation without speaking it aloud. The agent receives the message as background information and adjusts its next response. Use it to notify the agent when a user manually edits a field the agent was about to fill — debounce 1.5 seconds to avoid thrashing.

Here's the inline pattern that ships in src/app/estimate/page.tsx:

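```tsx
import { useCallback, useEffect, useRef } from "react";
import { useDemo, type EstimateFormData } from "@/lib/demo-context";

type EstimateFieldProps = {
  field: keyof EstimateFormData;
  label: string;
  placeholder: string;
  value: string;
  onChange: (value: string) => void;
  onUserFinishedEditing: (field: keyof EstimateFormData, value: string) => void;
};

function EstimateField({ field, label, placeholder, value, onChange, onUserFinishedEditing }: EstimateFieldProps) {
  const debounceRef = useRef<ReturnType<typeof setTimeout> | null>(null);
  const isUserTypingRef = useRef(false);
  const lastAgentValueRef = useRef(value);

  // Track agent-driven value changes (not user typing)
  useEffect(() => {
    if (!isUserTypingRef.current) lastAgentValueRef.current = value;
  }, [value]);

  // Cancel a pending debounce if the field unmounts
  useEffect(() => () => {
    if (debounceRef.current) clearTimeout(debounceRef.current);
  }, []);

  const handleChange = (newValue: string) => {
    isUserTypingRef.current = true;
    onChange(newValue);
    if (debounceRef.current) clearTimeout(debounceRef.current);
    debounceRef.current = setTimeout(() => {
      isUserTypingRef.current = false;
      // Report only real user edits, not echoes of what the agent just filled
      if (newValue.trim() && newValue !== lastAgentValueRef.current) {
        onUserFinishedEditing(field, newValue);
        lastAgentValueRef.current = newValue;
      }
    }, 1500);
  };

  // Label markup and Tailwind classes elided
  return <input value={value} placeholder={placeholder} onChange={(e) => handleChange(e.target.value)} /* ...tw classes */ />;
}

// Page-level wiring, inside the estimate page component.
// FIELD_LABELS (field key → display label) is defined elsewhere in the template.
const { state, dispatch, sendContextualUpdate } = useDemo();

const handleUserFinishedEditing = useCallback(
  (field: keyof EstimateFormData, value: string) =>
    sendContextualUpdate(
      `User manually typed "${value}" in the ${FIELD_LABELS[field]} field. Acknowledge briefly and move on to the next empty field.`
    ),
  [sendContextualUpdate]
);
```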

isUserTypingRef is set synchronously inside handleChange — before any reducer update propagates — so the post-render useEffect(() => { lastAgentValueRef.current = value }) doesn't corrupt the snapshot during user edits. lastAgentValueRef is the guard against re-emitting sendContextualUpdate when the agent just filled the field.

The agent receives that contextual update as context, not speech, and replies with something like "Got it, moving on" — no awkward re-asking. The 1.5 s debounce is a UX calibration: shorter than 1 s fires mid-word; longer than 2.5 s feels unresponsive. Empirical, not theoretical.
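The EstimateField logic is easier to reason about, and to unit-test, with React stripped away. This hypothetical createEditTracker keeps the same two guards (the typing flag and the last-agent-value snapshot) and takes an injected scheduler so a test can advance time without real timers. The names are illustrative, not from the template.

```typescript
// Hypothetical, framework-free version of EstimateField's debounce + echo guard.
interface Scheduler {
  set: (fn: () => void, ms: number) => number;
  clear: (id: number) => void;
}

function createEditTracker(
  onUserFinishedEditing: (value: string) => void,
  scheduler: Scheduler,
  debounceMs = 1500,
) {
  let lastAgentValue = "";
  let userTyping = false;
  let timer: number | null = null;

  return {
    // The value changed from outside the keyboard (e.g. the agent filled it).
    agentSet(value: string): void {
      if (!userTyping) lastAgentValue = value;
    },
    // Every keystroke restarts the debounce window.
    userChange(value: string): void {
      userTyping = true;
      if (timer !== null) scheduler.clear(timer);
      timer = scheduler.set(() => {
        timer = null;
        userTyping = false;
        // Report only non-empty edits that differ from what the agent last wrote.
        if (value.trim() && value !== lastAgentValue) {
          onUserFinishedEditing(value);
          lastAgentValue = value;
        }
      }, debounceMs);
    },
  };
}
```

In the browser, the scheduler could be { set: (fn, ms) => window.setTimeout(fn, ms), clear: (id) => window.clearTimeout(id) }, and onUserFinishedEditing would call sendContextualUpdate.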

The mental model the user feels: voice and keyboard are first-class equal inputs. Speak the first five fields, type the last three, and the agent adapts.

The template also ships a reusable useFormFieldSync hook in demo-context.tsx with the same logic — extract that for any second form. The estimate page uses the inline component because the page-level wiring needed access to lastAgentValueRef for one specific guard.

The same primitive handles "user navigated to /careers via header click" — sendContextualUpdate tells the agent the route changed without making it announce the change.
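One way to wire that up, sketched under assumptions: the caller knows whether a navigation came from the agent (for example, by recording it when NavigationHandler calls router.push) and reports the current pathname on every route change. createRouteChangeNotifier and the message wording are hypothetical; sendContextualUpdate is injected so the logic can be tested on its own.

```typescript
// Hypothetical: tell the agent about user-initiated route changes, once per change.
type NavigationSource = "agent" | "user";

function createRouteChangeNotifier(sendContextualUpdate: (text: string) => void) {
  let lastPath: string | null = null;

  return (pathname: string, source: NavigationSource): void => {
    if (lastPath === null) {
      lastPath = pathname; // first render: nothing has changed yet
      return;
    }
    if (pathname === lastPath) return; // re-render on the same route
    lastPath = pathname;
    if (source === "agent") return; // the agent already knows where it sent the user
    sendContextualUpdate(
      `The user navigated to ${pathname} themselves. Do not announce the page change; continue the conversation from here.`,
    );
  };
}
```

In a client component inside the provider tree, you could call the notifier from a useEffect keyed on usePathname(), passing the sendContextualUpdate that useDemo() exposes.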

Step 6 — The Iteration Loop: simulate-conversation API + ElevenLabs CLI test_configs

Voice-testing every prompt change is brutal — headphones on, talk, listen, transcribe, repeat 20× a day. ElevenLabs ships a simulate-conversation API and a CLI that read JSON test configs. Neither is well documented in any tutorial; both should be the default.

Use ElevenLabs' simulate-conversation API: POST to /v1/convai/agents/{id}/simulate-conversation with a simulation_specification that holds a simulated_user_config (first_message, language) and max_turns. You get a transcript back showing which tools the agent called and what it said, with no microphone needed. The ElevenLabs CLI automates this with test_configs/*.json.

The 6-step loop:

- Edit the system prompt or tool config

- Simulate a conversation (API call, no voice needed)

- Read the transcript — right tools? brevity? hallucinations?

- If broken → loop. If good → real voice test.

- Check the real-voice transcript for missed issues.

- Write a test_config for whatever broke → loop forever
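Step 3 of that loop (right tools? brevity?) can be partly automated. This sketch assumes you have already mapped the simulate-conversation response onto a simplified, hypothetical Turn shape (role, message, names of tools called); the real response is richer, so check the API reference before writing that mapping. checkTranscript and the sentence counter are illustrative, and the regex split is crude but enough to flag the 10-sentence answers from Lessons Learned.

```typescript
// Hypothetical, simplified transcript: map the real API response onto this shape first.
interface Turn {
  role: "agent" | "user";
  message: string;
  toolCalls: string[]; // names of tools called on this turn
}

interface Expectation {
  mustCall: string[];
  mustNotCall?: string[];
  maxSentencesPerAgentTurn?: number; // e.g. the "MAX 2 sentences" rule
}

// Crude sentence count: split on runs of . ! ? and ignore empty pieces.
function countSentences(text: string): number {
  return text.split(/[.!?]+/).map((s) => s.trim()).filter((s) => s.length > 0).length;
}

function checkTranscript(transcript: Turn[], exp: Expectation): string[] {
  const problems: string[] = [];
  const called = new Set<string>();
  for (const turn of transcript) for (const tool of turn.toolCalls) called.add(tool);

  for (const tool of exp.mustCall) {
    if (!called.has(tool)) problems.push(`missing tool call: ${tool}`);
  }
  for (const tool of exp.mustNotCall ?? []) {
    if (called.has(tool)) problems.push(`unexpected tool call: ${tool}`);
  }

  const limit = exp.maxSentencesPerAgentTurn;
  if (limit !== undefined) {
    transcript
      .filter((turn) => turn.role === "agent")
      .forEach((turn, i) => {
        const n = countSentences(turn.message);
        if (n > limit) problems.push(`agent turn ${i} has ${n} sentences (limit ${limit})`);
      });
  }
  return problems;
}
```

An empty result means the transcript passed; anything else is a candidate for a new test_config.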

The CLI is the easiest entry point:

```bash
npm install -g @elevenlabs/cli
elevenlabs auth login

# Pull your agent config to local files
elevenlabs agents pull --agent YOUR_AGENT_ID

# Edit the config: system prompt, LLM, voice, tools all in agent_configs/*.json
code agent_configs/Website-Demo-Assistant.json

# Push changes back
elevenlabs agents push
```

Pull → edit → push. Track agent prompts in git, review them in PRs, roll back when something breaks. Critical caveat: when PATCHing tools, always include the FULL parameters schema. We hit a bug in production where a partial PATCH wiped parameters to null and our next conversation got {} arguments — see Lessons Learned below.

The simulate API itself is one curl:

```bash
curl -X POST "https://api.elevenlabs.io/v1/convai/agents/YOUR_AGENT_ID/simulate-conversation" \
  -H "xi-api-key: $ELEVENLABS_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "simulation_specification": {
      "simulated_user_config": {
        "first_message": "I need a moving estimate",
        "language": "en"
      },
      "max_turns": 4
    }
  }'
```

The template ships three test_configs: Navigate-to-estimate-on-request.json, Fill-fields-when-user-gives-details.json, and Navigate-and-fill-on-first-message.json. Each pins a specific behavior we want to regress against: the canonical first-message phrase that should fire navigateTo("/estimate"), the explicit-detail message that should fire updateEstimateField for each field, and the everything-upfront edge case. Using this loop, we caught five of the eight production bugs listed under Lessons Learned before they hit a live voice test.
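To run the same simulation from a script (for example, once per test phrase), the curl translates directly to fetch. This builder only mirrors the body shape used in the curl example; buildSimulationRequest and its parameters are hypothetical, and the field names should be checked against the ElevenLabs API reference before you depend on them.

```typescript
// Hypothetical helper: the same request as the curl example, built for fetch().
interface SimulationRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

function buildSimulationRequest(
  agentId: string,
  apiKey: string,
  firstMessage: string,
  maxTurns = 4,
  language = "en",
): SimulationRequest {
  // Body shape copied from the curl example.
  const body = {
    simulation_specification: {
      simulated_user_config: { first_message: firstMessage, language },
      max_turns: maxTurns,
    },
  };
  return {
    url: `https://api.elevenlabs.io/v1/convai/agents/${encodeURIComponent(agentId)}/simulate-conversation`,
    init: {
      method: "POST",
      headers: { "xi-api-key": apiKey, "Content-Type": "application/json" },
      body: JSON.stringify(body),
    },
  };
}
```

Usage would be along the lines of: build the request for "I need a moving estimate", pass url and init to fetch, and read the JSON transcript from the response.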

Step 7 — Deploy to Vercel

```bash
vercel link
vercel deploy --prod
```

One non-obvious gotcha you'll hit on first deploy: when you pipe an env var into vercel env add with echo, echo appends a trailing newline that silently breaks the WebSocket handshake (the signed-URL request looks fine; the handshake then fails with WS close 1006). We hit this in production. Use printf instead:

```bash
printf "%s" "$MASCOT_BOT_API_KEY" | vercel env add MASCOT_BOT_API_KEY production
printf "%s" "$ELEVENLABS_API_KEY" | vercel env add ELEVENLABS_API_KEY production
printf "%s" "$ELEVENLABS_AGENT_ID" | vercel env add ELEVENLABS_AGENT_ID production
vercel deploy --prod
```

The live demo at react-website-demo-mascotbot.vercel.app is the proof: clone the repo, follow the steps, deploy the same shape. Browsers only grant microphone access on HTTPS (localhost is exempt during development); Vercel serves HTTPS by default.
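A startup check would surface the trailing-newline bug immediately instead of as a silent 1006 close. This is a hypothetical helper, not part of the template: findEnvProblems reports missing variables and values whose surrounding whitespace differs from their trimmed form, and it reports names only, so the secrets themselves never reach the logs.

```typescript
// Hypothetical startup check for the env vars this template reads.
const REQUIRED_ENV = ["MASCOT_BOT_API_KEY", "ELEVENLABS_API_KEY", "ELEVENLABS_AGENT_ID"] as const;

// Returns problems by variable name only, never the value.
function findEnvProblems(env: Record<string, string | undefined>): string[] {
  const problems: string[] = [];
  for (const name of REQUIRED_ENV) {
    const value = env[name];
    if (value === undefined || value === "") {
      problems.push(`${name} is missing`);
    } else if (value !== value.trim()) {
      problems.push(`${name} has leading or trailing whitespace (a newline from echo?)`);
    }
  }
  return problems;
}
```

In route.ts you might call findEnvProblems(process.env) at the top of POST and return a 500 listing the problems when the array is non-empty.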

Lessons Learned (Production Pitfalls We Hit)

Each row is a real bug from our build. Steal the fix; skip the bug.

| What Went Wrong | Root Cause | How We Fixed It |
|---|---|---|
| Agent described actions instead of calling tools | Gemini 2.5 Flash didn't reliably invoke tools (in our testing for this use case) | Switched LLM to GPT-5.2 |
| Tool calls arrived with {} arguments | PATCH to update an agent wiped parameters to null | Always send the FULL parameters schema on every PATCH |
| Agent role-played a recruiter on the careers page | Prompt didn't bound static-content pages | Added "Do NOT describe specific roles. The page has that info." |
| Agent kept asking about packing/storage after submit | No post-submission boundary | Added "After submitEstimate, STOP. Do NOT ask about extras." |
| Agent navigated to the same page 5 times | No "already on page" awareness | Added "Call navigateTo ONCE when topic changes" |
| Agent gave 10-sentence answers | No brevity constraint | Added "MAX 2 sentences per response. NEVER exceed this." |
| Connection hung forever in production | Vercel env vars had a trailing \n (added by echo) | Use printf instead of echo when piping to vercel env add |
| Agent invented company knowledge | Prompt was too open-ended | Added "This is a DEMO. Do NOT make up information." |

Two of these surprised us most. First, the LLM swap: prompt-engineering effort that worked for one model didn't transfer — the agent's tool-call reliability is at least partly a model-behavior story, not a pure prompt story. Test against the model you'll ship. Second, the trailing-\n bug: Vercel's env-var ingest preserves the newline, the signed-URL request appears to succeed, the WebSocket handshake fails, and there's no useful error in the browser console. printf "%s" is the only clean fix we found.

Customizing the Template for Your Brand

Brand it. Swap "MovingCo" copy in src/app/page.tsx, careers/page.tsx, franchise/page.tsx. Brand color → tailwind.config.js.

Customize the avatar. WIDGET_CUSTOMIZATION in src/components/widget.tsx maps to Rive customInputs:

```ts
const WIDGET_CUSTOMIZATION = {
  gender: 1, // 1 = male, 2 = female
  outline: 10, // 0–100 stroke thickness
  colourful: true,
  shirt_color: 2,
  eyes_type: 2,
  hair_style: 3,
  // ...accessories_hue, _saturation, _brightness
};
```

For the deeper character workflow, see the Custom Brand Mascot Tutorial.

Add a page. Create src/app/your-page/page.tsx → add to navItems in site-header.tsx → add the route to the agent system prompt's PAGES list → update navigateTo's tool description.

Add a form field. Add it to EstimateFormData in demo-context.tsx → add to formFields in estimate/page.tsx → update updateEstimateField's tool description → update the agent system prompt's FIELDS list.
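Four manual edits per field is easy to get out of sync. One option, sketched here as a hypothetical and not how the template is organized, is to derive every list from a single array: the form type, the tool description text, and the prompt's FIELDS list. FIELDS, toolDescription, promptFieldsList, and the labels are illustrative.

```typescript
// Hypothetical single source of truth for estimate fields; labels are illustrative.
const FIELDS = [
  { key: "name", label: "Name" },
  { key: "email", label: "Email" },
  { key: "phone", label: "Phone" },
  { key: "origin", label: "Moving from" },
  { key: "destination", label: "Moving to" },
  { key: "moveDate", label: "Move date" },
  { key: "homeSize", label: "Home size" },
  { key: "specialItems", label: "Special items" },
] as const;

type EstimateFieldKey = (typeof FIELDS)[number]["key"];

// Derived form type: every key maps to a string value.
type EstimateFormData = Record<EstimateFieldKey, string>;

const FIELD_KEYS: EstimateFieldKey[] = FIELDS.map((f) => f.key);

// Text for the updateEstimateField tool description.
function toolDescription(): string {
  return `Update one field of the moving estimate form. "field" must be one of: ${FIELD_KEYS.join(", ")}.`;
}

// Text for the FIELDS list in the agent system prompt.
function promptFieldsList(): string {
  return ["FIELDS:", ...FIELDS.map((f) => `- ${f.key} (${f.label})`)].join("\n");
}

function emptyEstimate(): EstimateFormData {
  return Object.fromEntries(FIELD_KEYS.map((k) => [k, ""])) as EstimateFormData;
}
```

Adding a field then means one new array entry; you would still need to copy the generated text into the tool and agent JSON configs (or have your setup script read it), which is a workflow assumption.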

The same shape ports cleanly to other verticals — real estate (showing-estimate intake), dental (appointment booking), restaurants (reservation + dietary form), insurance (quote intake). The MovingCo demo is the example domain; the pattern is the asset.

Next Steps

Now that you have a persistent voice widget, three real client tools, and a bidirectional form, here's the natural progression:

- How to Add a Talking Avatar to Your ElevenLabs Voice Agent: same stack, single page, simpler scope. Good if you don't need the multi-route persistence story.

- Avatar SDK Quick Start: the SDK on-ramp if you skipped Step 1's .tgz install path.

- Custom Brand Mascot Tutorial: swap in your own character without touching the conversation plumbing.

- MascotBot vs HeyGen comparison: for stakeholders who need a vendor-positioning brief.

Ship the template. Clone it, give it your brand, deploy on Monday. The live version is at react-website-demo-mascotbot.vercel.app — show a teammate before you write a line of code.

mascotbot-templates/react-website-demo

The full template this tutorial walks — Next.js 16, persistent widget, three client tools, bidirectional form sync, iteration loop with simulate-conversation, and the 8 production pitfalls documented in the README.

Frequently Asked Questions

How do you connect ElevenLabs Conversational AI to a React project?

Install @elevenlabs/react, wrap the app in a client-component provider that mounts the useConversation hook, and start the session with a signed URL fetched from a Next.js API route. The hook returns startSession, endSession, and sendContextualUpdate for runtime control.

How do I keep a voice chatbot session alive across page navigation in Next.js?

Mount the conversation widget in your root layout.tsx provider tree as a sibling of {children} — not inside a route's page.tsx. The widget never unmounts during route changes, so the WebRTC session survives navigation and the conversation context is preserved.

How do voice agent client tools work?

Client tools are typed JavaScript callbacks the ElevenLabs agent calls by name during a conversation. You register them in the clientTools option of useConversation; the agent passes JSON arguments, your handler dispatches React state updates, and the UI changes in real time.

How do I make a voice agent fill a form in React?

Register an updateEstimateField({field, value}) client tool that dispatches a reducer action tagging the source as "agent". The form input components read from the same context. To handle manual user edits, debounce 1.5 s and call sendContextualUpdate so the agent acknowledges and continues without re-asking.

How can I test my voice agent without speaking out loud?

Use ElevenLabs' simulate-conversation API, or the ElevenLabs CLI workflow with test_configs/*.json. POST a simulation_specification with a simulated_user_config (first_message, language) and max_turns; you get a transcript back showing exactly which tools the agent called and what it said.

What's the difference between WebRTC and WebSocket for ElevenLabs voice agents?

WebRTC is the default in @elevenlabs/react, delivers under 300 ms latency, and handles jitter buffering. WebSocket is simpler for server-to-server transcript streaming but adds 100–200 ms from TCP head-of-line blocking. For a browser voice UI, choose WebRTC.

How much does ElevenLabs Conversational AI cost?

Pricing scales by conversation minutes; the free tier covers prototyping, paid tiers start in the low double digits per month. Check the canonical pricing page at elevenlabs.io/pricing — pricing changes faster than tutorials do.

ElevenLabs vs LiveKit vs Stream — which is best for a React voice agent?

| Stack | Best For | Tradeoff |
|---|---|---|
| ElevenLabs @elevenlabs/react | Voice-only browser UI with best-in-class TTS | No video; vendor-managed infra |
| LiveKit agents-react | Custom low-level WebRTC pipelines | More integration code |
| Stream Video React SDK | Voice + video edge network | Vendor lock-in to Stream edge |

For the standalone 2D-vs-photorealistic comparison, see MascotBot vs HeyGen.